
Null and Alternative Hypotheses | Definitions & Examples

Published on 5 October 2022 by Shaun Turney . Revised on 6 December 2022.

The null and alternative hypotheses are two competing claims that researchers weigh evidence for and against using a statistical test :

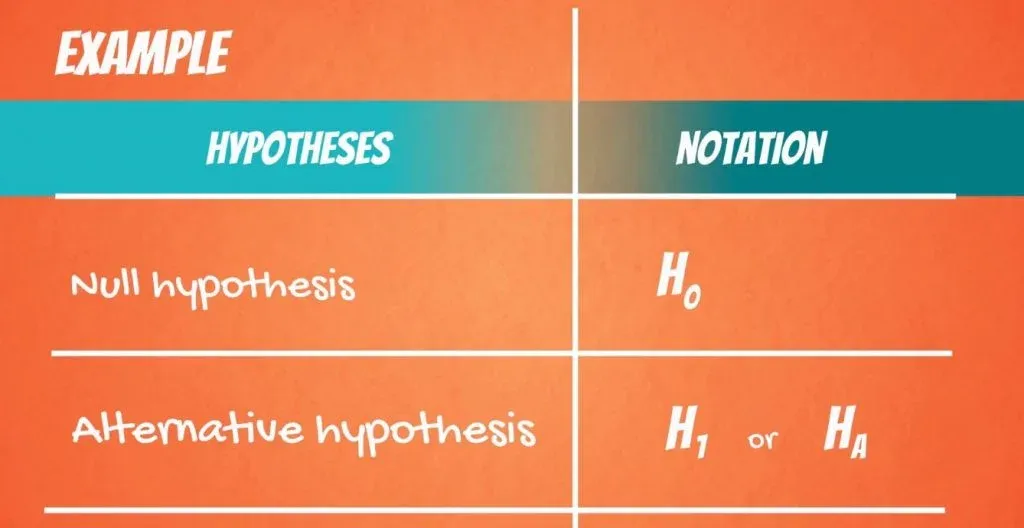

- Null hypothesis (H 0 ): There’s no effect in the population .

- Alternative hypothesis (H A ): There’s an effect in the population.

The effect is usually the effect of the independent variable on the dependent variable .

Table of contents

- Answering your research question with hypotheses

- What is a null hypothesis?

- What is an alternative hypothesis?

- Differences between null and alternative hypotheses

- How to write null and alternative hypotheses

- Frequently asked questions about null and alternative hypotheses

The null and alternative hypotheses offer competing answers to your research question . When the research question asks “Does the independent variable affect the dependent variable?”, the null hypothesis (H 0 ) answers “No, there’s no effect in the population.” On the other hand, the alternative hypothesis (H A ) answers “Yes, there is an effect in the population.”

The null and alternative are always claims about the population. That’s because the goal of hypothesis testing is to make inferences about a population based on a sample . Often, we infer whether there’s an effect in the population by looking at differences between groups or relationships between variables in the sample.

You can use a statistical test to decide whether the evidence favors the null or alternative hypothesis. Each type of statistical test comes with a specific way of phrasing the null and alternative hypothesis. However, the hypotheses can also be phrased in a general way that applies to any test.

The null hypothesis is the claim that there’s no effect in the population.

If the sample provides enough evidence against the claim that there’s no effect in the population ( p ≤ α), then we can reject the null hypothesis . Otherwise, we fail to reject the null hypothesis.
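The decision rule can be written out as a minimal sketch. The function name `decide` and the default significance level of 0.05 are assumptions made for illustration; they are not part of the original text.

```python
def decide(p_value: float, alpha: float = 0.05) -> str:
    """Return the conventional wording for a hypothesis-test decision."""
    if not (0.0 <= p_value <= 1.0 and 0.0 < alpha < 1.0):
        raise ValueError("p_value must be in [0, 1] and alpha in (0, 1)")
    # Reject only when the p-value is at or below the significance level.
    return "reject H0" if p_value <= alpha else "fail to reject H0"


print(decide(0.03))  # reject H0
print(decide(0.20))  # fail to reject H0
```

Note that the function never returns "accept H0": a large p-value only means the sample did not provide enough evidence against the null hypothesis.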

Although “fail to reject” may sound awkward, it’s the only wording that statisticians accept. Be careful not to say you “prove” or “accept” the null hypothesis.

Null hypotheses often include phrases such as “no effect”, “no difference”, or “no relationship”. When written in mathematical terms, they always include an equality (usually =, but sometimes ≥ or ≤).

Examples of null hypotheses

The table below gives examples of research questions and null hypotheses. There’s always more than one way to answer a research question, but these null hypotheses can help you get started.

| Research question | Null hypothesis (H₀) | Test-specific null hypothesis |
|---|---|---|
| Does tooth flossing affect the number of cavities? | Tooth flossing has no effect on the number of cavities. | t test: The mean number of cavities per person does not differ between the flossing group (µ₁) and the non-flossing group (µ₂) in the population; µ₁ = µ₂. |
| Does the amount of text highlighted in the textbook affect exam scores? | The amount of text highlighted in the textbook has no effect on exam scores. | Linear regression: There is no relationship between the amount of text highlighted and exam scores in the population; β = 0. |
| Does daily meditation decrease the incidence of depression? | Daily meditation does not decrease the incidence of depression.* | Two-proportions test: The proportion of people with depression in the daily-meditation group (p₁) is greater than or equal to the no-meditation group (p₂) in the population; p₁ ≥ p₂. |

*Note that some researchers prefer to always write the null hypothesis in terms of “no effect” and “=”. It would be fine to say that daily meditation has no effect on the incidence of depression and p 1 = p 2 .

The alternative hypothesis (H A ) is the other answer to your research question . It claims that there’s an effect in the population.

Often, your alternative hypothesis is the same as your research hypothesis. In other words, it’s the claim that you expect or hope will be true.

The alternative hypothesis is the complement to the null hypothesis. Null and alternative hypotheses are exhaustive, meaning that together they cover every possible outcome. They are also mutually exclusive, meaning that only one can be true at a time.

Alternative hypotheses often include phrases such as “an effect”, “a difference”, or “a relationship”. When alternative hypotheses are written in mathematical terms, they always include an inequality (usually ≠, but sometimes > or <). As with null hypotheses, there are many acceptable ways to phrase an alternative hypothesis.

Examples of alternative hypotheses

The table below gives examples of research questions and alternative hypotheses to help you get started with formulating your own.

| Research question | Alternative hypothesis (Hₐ) | Test-specific alternative hypothesis |
|---|---|---|
| Does tooth flossing affect the number of cavities? | Tooth flossing has an effect on the number of cavities. | t test: The mean number of cavities per person differs between the flossing group (µ₁) and the non-flossing group (µ₂) in the population; µ₁ ≠ µ₂. |
| Does the amount of text highlighted in a textbook affect exam scores? | The amount of text highlighted in the textbook has an effect on exam scores. | Linear regression: There is a relationship between the amount of text highlighted and exam scores in the population; β ≠ 0. |
| Does daily meditation decrease the incidence of depression? | Daily meditation decreases the incidence of depression. | Two-proportions test: The proportion of people with depression in the daily-meditation group (p₁) is less than the no-meditation group (p₂) in the population; p₁ < p₂. |
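To make the meditation example concrete, here is a minimal sketch of a left-tailed two-proportions z test using only the Python standard library. The counts (30 of 200 and 50 of 200) are invented for illustration, and the function name `two_proportion_z_test` is hypothetical. The sketch relies on the usual large-sample normal approximation.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(x1: int, n1: int, x2: int, n2: int):
    """Left-tailed test of H0: p1 >= p2 against HA: p1 < p2."""
    p1_hat, p2_hat = x1 / n1, x2 / n2
    # Pool the two samples to estimate the common proportion at the boundary p1 = p2.
    pooled = (x1 + x2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1_hat - p2_hat) / se
    # Left tail: small z values are evidence that p1 < p2.
    p_value = NormalDist().cdf(z)
    return z, p_value


# Hypothetical data: 30 of 200 meditators and 50 of 200 non-meditators have depression.
z, p_value = two_proportion_z_test(x1=30, n1=200, x2=50, n2=200)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

With these made-up counts the p-value falls below 0.05, so the null hypothesis would be rejected at that significance level.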

Null and alternative hypotheses are similar in some ways:

- They’re both answers to the research question

- They both make claims about the population

- They’re both evaluated by statistical tests.

However, there are important differences between the two types of hypotheses, summarized in the following table.

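| | Null hypothesis (H₀) | Alternative hypothesis (Hₐ) |
|---|---|---|
| Definition | A claim that there is no effect in the population. | A claim that there is an effect in the population. |
| Symbols used | Equality symbol (=, ≥, or ≤) | Inequality symbol (≠, <, or >) |
| When p ≤ α | Rejected | Supported |
| When p > α | Failed to reject | Not supported |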

To help you write your hypotheses, you can use the template sentences below. If you know which statistical test you’re going to use, you can use the test-specific template sentences. Otherwise, you can use the general template sentences.

The only thing you need to know to use these general template sentences are your dependent and independent variables. To write your research question, null hypothesis, and alternative hypothesis, fill in the following sentences with your variables:

Does independent variable affect dependent variable ?

- Null hypothesis (H 0 ): Independent variable does not affect dependent variable .

- Alternative hypothesis (H A ): Independent variable affects dependent variable .
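The general template sentences can be filled in mechanically. The helper below is a small illustrative sketch; the function name `general_hypotheses` and the dictionary keys are hypothetical.

```python
def general_hypotheses(independent: str, dependent: str) -> dict:
    """Fill the general template sentences with the two variable names."""
    iv = independent.strip()
    dv = dependent.strip()
    # Capitalize only the first letter so the rest of the phrase is left as typed.
    iv_cap = iv[:1].upper() + iv[1:]
    return {
        "question": f"Does {iv} affect {dv}?",
        "null": f"H0: {iv_cap} does not affect {dv}.",
        "alternative": f"HA: {iv_cap} affects {dv}.",
    }


for sentence in general_hypotheses("tooth flossing", "the number of cavities").values():
    print(sentence)
```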

Test-specific

Once you know the statistical test you’ll be using, you can write your hypotheses in a more precise and mathematical way specific to the test you chose. The table below provides template sentences for common statistical tests.

| Statistical test | Null hypothesis (H₀) | Alternative hypothesis (Hₐ) |
|---|---|---|
| t test with two groups | The mean dependent variable does not differ between group 1 (µ₁) and group 2 (µ₂) in the population; µ₁ = µ₂. | The mean dependent variable differs between group 1 (µ₁) and group 2 (µ₂) in the population; µ₁ ≠ µ₂. |
| ANOVA with three groups | The mean dependent variable does not differ between group 1 (µ₁), group 2 (µ₂), and group 3 (µ₃) in the population; µ₁ = µ₂ = µ₃. | The mean dependent variable of group 1 (µ₁), group 2 (µ₂), and group 3 (µ₃) are not all equal in the population. |
| Correlation test | There is no correlation between independent variable and dependent variable in the population; ρ = 0. | There is a correlation between independent variable and dependent variable in the population; ρ ≠ 0. |
| Linear regression | There is no relationship between independent variable and dependent variable in the population; β = 0. | There is a relationship between independent variable and dependent variable in the population; β ≠ 0. |
| Two-proportions test | The dependent variable expressed as a proportion does not differ between group 1 (p₁) and group 2 (p₂) in the population; p₁ = p₂. | The dependent variable expressed as a proportion differs between group 1 (p₁) and group 2 (p₂) in the population; p₁ ≠ p₂. |

Note: The template sentences above assume that you’re performing two-tailed tests, which is why each alternative hypothesis uses ≠ (or “not all equal”). Two-tailed tests are appropriate for most studies.

The null hypothesis is often abbreviated as H 0 . When the null hypothesis is written using mathematical symbols, it always includes an equality symbol (usually =, but sometimes ≥ or ≤).

The alternative hypothesis is often abbreviated as H a or H 1 . When the alternative hypothesis is written using mathematical symbols, it always includes an inequality symbol (usually ≠, but sometimes < or >).

A research hypothesis is your proposed answer to your research question. The research hypothesis usually includes an explanation (‘ x affects y because …’).

A statistical hypothesis, on the other hand, is a mathematical statement about a population parameter. Statistical hypotheses always come in pairs: the null and alternative hypotheses. In a well-designed study , the statistical hypotheses correspond logically to the research hypothesis.


Turney, S. (2022, December 06). Null and Alternative Hypotheses | Definitions & Examples. Scribbr. Retrieved 9 June 2024, from https://www.scribbr.co.uk/stats/null-and-alternative-hypothesis/


Null Hypothesis and Alternative Hypothesis



Hypothesis testing involves the careful construction of two statements: the null hypothesis and the alternative hypothesis. These hypotheses can look very similar but are actually different.

How do we know which hypothesis is the null and which one is the alternative? We will see that there are a few ways to tell the difference.

The Null Hypothesis

The null hypothesis reflects that there will be no observed effect in our experiment. In a mathematical formulation of the null hypothesis, there will typically be an equal sign. This hypothesis is denoted by H 0 .

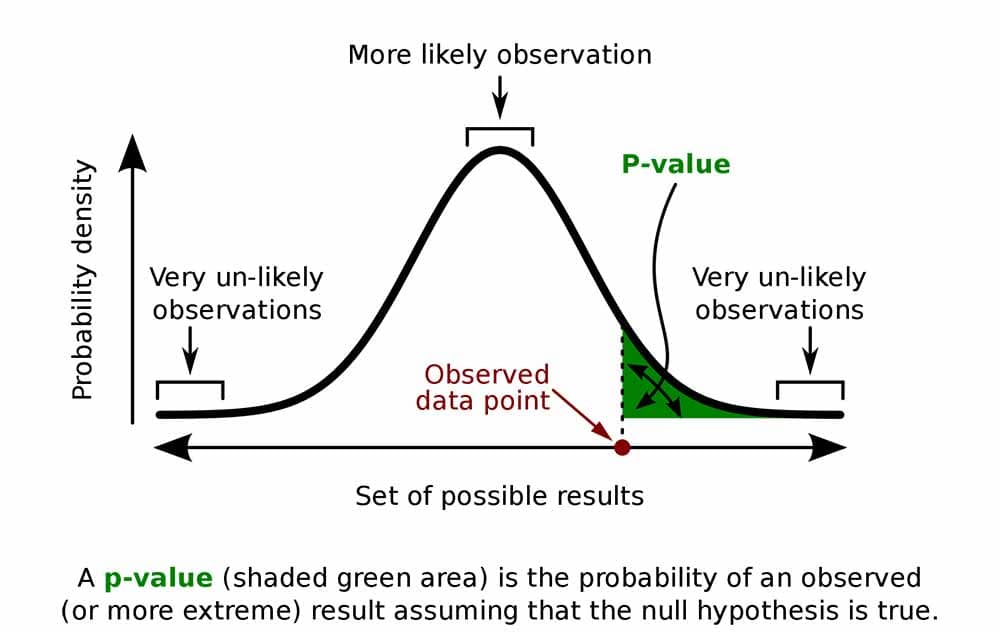

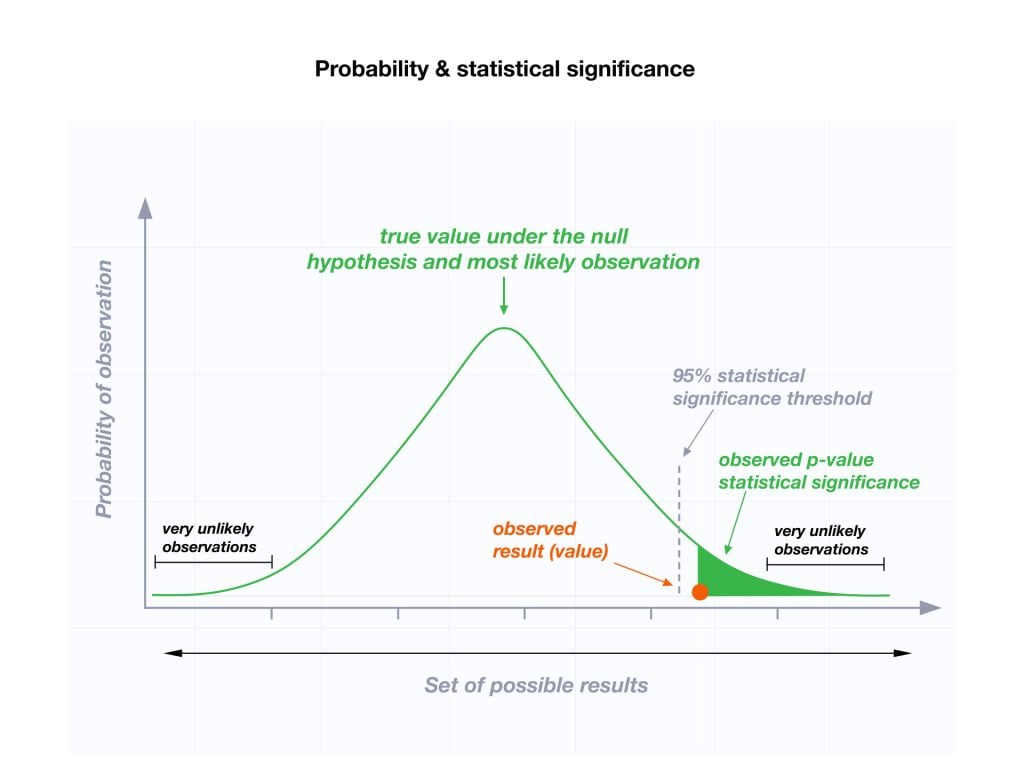

The null hypothesis is what we attempt to find evidence against in our hypothesis test. We hope to obtain a small enough p-value that it is lower than our level of significance alpha and we are justified in rejecting the null hypothesis. If our p-value is greater than alpha, then we fail to reject the null hypothesis.

If the null hypothesis is not rejected, then we must be careful to say what this means. The thinking on this is similar to a legal verdict. Just because a person has been declared "not guilty", it does not mean that he is innocent. In the same way, just because we failed to reject a null hypothesis it does not mean that the statement is true.

For example, we may want to investigate the claim that despite what convention has told us, the mean adult body temperature is not the accepted value of 98.6 degrees Fahrenheit . The null hypothesis for an experiment to investigate this is “The mean adult body temperature for healthy individuals is 98.6 degrees Fahrenheit.” If we fail to reject the null hypothesis, then our working hypothesis remains that the average adult who is healthy has a temperature of 98.6 degrees. We do not prove that this is true.
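As a rough sketch of how the body-temperature hypotheses could be tested, the code below runs a two-sided one-sample z test with the standard library. The sample summary (mean 98.4, standard deviation 0.8, n = 64) is invented for illustration, and the function name `one_sample_z_test` is hypothetical. The normal approximation is an assumption that suits larger samples; with a small sample a t test would normally be used instead.

```python
from math import sqrt
from statistics import NormalDist


def one_sample_z_test(sample_mean: float, sample_sd: float, n: int, mu0: float):
    """Two-sided test of H0: mu = mu0 against Ha: mu != mu0 (normal approximation)."""
    se = sample_sd / sqrt(n)  # standard error of the sample mean
    z = (sample_mean - mu0) / se
    # Two-sided: count deviations of this size in either direction.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical sample of 64 healthy adults.
z, p_value = one_sample_z_test(sample_mean=98.4, sample_sd=0.8, n=64, mu0=98.6)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

If the p-value is below the chosen alpha, the null hypothesis of a 98.6-degree mean is rejected. Otherwise we fail to reject it, which is not the same as showing that the mean is exactly 98.6.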

If we are studying a new treatment, the null hypothesis is that our treatment will not change our subjects in any meaningful way. In other words, the treatment will not produce any effect in our subjects.

The Alternative Hypothesis

The alternative or experimental hypothesis reflects that there will be an observed effect for our experiment. In a mathematical formulation of the alternative hypothesis, there will typically be an inequality, or not equal to symbol. This hypothesis is denoted by either H a or by H 1 .

The alternative hypothesis is what we are attempting to demonstrate in an indirect way by the use of our hypothesis test. If the null hypothesis is rejected, then we accept the alternative hypothesis. If the null hypothesis is not rejected, then we do not accept the alternative hypothesis. Going back to the above example of mean human body temperature, the alternative hypothesis is “The average adult human body temperature is not 98.6 degrees Fahrenheit.”

If we are studying a new treatment, then the alternative hypothesis is that our treatment does, in fact, change our subjects in a meaningful and measurable way.

The following set of negations may help when you are forming your null and alternative hypotheses. Most technical papers rely on just the first formulation, even though you may see some of the others in a statistics textbook.

- Null hypothesis: “ x is equal to y .” Alternative hypothesis “ x is not equal to y .”

- Null hypothesis: “ x is at least y .” Alternative hypothesis “ x is less than y .”

- Null hypothesis: “ x is at most y .” Alternative hypothesis “ x is greater than y .”
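The three pairs of negations also determine which tail of the distribution the p-value comes from. The sketch below assumes a test statistic `z` that follows a standard normal distribution under the null hypothesis. The names `NEGATIONS` and `p_value_from_z` are illustrative.

```python
from statistics import NormalDist

# Each alternative hypothesis is the negation of its null hypothesis.
NEGATIONS = {"=": "≠", "≥": "<", "≤": ">"}


def p_value_from_z(z: float, null_symbol: str) -> float:
    """Return the p-value for a z statistic, given the symbol used in H0."""
    alternative = NEGATIONS[null_symbol]
    cdf = NormalDist().cdf(z)
    if alternative == "≠":
        return 2 * min(cdf, 1 - cdf)  # two-tailed
    if alternative == "<":
        return cdf  # left-tailed
    return 1 - cdf  # right-tailed


for symbol in NEGATIONS:
    print(f"H0 uses {symbol}, Ha uses {NEGATIONS[symbol]}, p = {p_value_from_z(2.0, symbol):.4f}")
```

The same z statistic can lead to different decisions depending on the direction of the alternative, which is why the hypotheses should be fixed before looking at the data.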


Module 9: Hypothesis Testing With One Sample

Null and Alternative Hypotheses

Learning Outcomes

- Describe hypothesis testing in general and in practice

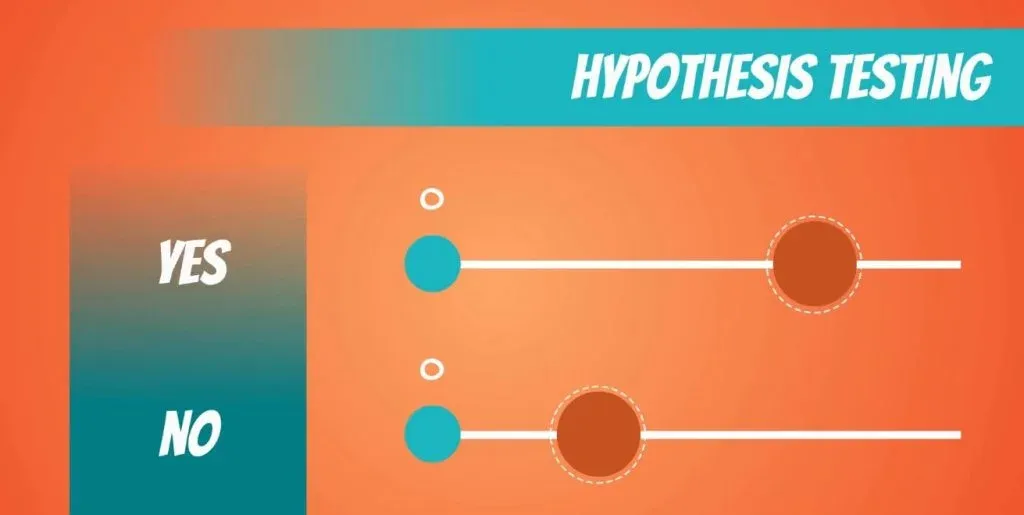

The actual test begins by considering two hypotheses . They are called the null hypothesis and the alternative hypothesis . These hypotheses contain opposing viewpoints.

H 0 : The null hypothesis: It is a statement about the population that either is believed to be true or is used to put forth an argument unless it can be shown to be incorrect beyond a reasonable doubt.

H a : The alternative hypothesis : It is a claim about the population that is contradictory to H 0 and what we conclude when we reject H 0 .

Since the null and alternative hypotheses are contradictory, you must examine evidence to decide if you have enough evidence to reject the null hypothesis or not. The evidence is in the form of sample data.

After you have determined which hypothesis the sample supports, you make a decision. There are two options for a decision. They are “reject H 0 ” if the sample information favors the alternative hypothesis or “do not reject H 0 ” or “decline to reject H 0 ” if the sample information is insufficient to reject the null hypothesis.

Mathematical Symbols Used in H 0 and H a :

| H 0 | H a |
|---|---|
| equal (=) | not equal (≠), greater than (>), or less than (<) |
| greater than or equal to (≥) | less than (<) |
| less than or equal to (≤) | more than (>) |

H 0 always has a symbol with an equal in it. H a never has a symbol with an equal in it. The choice of symbol depends on the wording of the hypothesis test. However, be aware that many researchers (including one of the co-authors in research work) use = in the null hypothesis, even with > or < as the symbol in the alternative hypothesis. This practice is acceptable because we only make the decision to reject or not reject the null hypothesis.

H 0 : No more than 30% of the registered voters in Santa Clara County voted in the primary election. p ≤ 0.30

H a : More than 30% of the registered voters in Santa Clara County voted in the primary election. p > 0.30

A medical trial is conducted to test whether or not a new medicine reduces cholesterol by 25%. State the null and alternative hypotheses.

H 0 : The drug reduces cholesterol by 25%. p = 0.25

H a : The drug does not reduce cholesterol by 25%. p ≠ 0.25

We want to test whether the mean GPA of students in American colleges is different from 2.0 (out of 4.0). The null and alternative hypotheses are:

H 0 : μ = 2.0

H a : μ ≠ 2.0

We want to test whether the mean height of eighth graders is 66 inches. State the null and alternative hypotheses. Fill in the correct symbol (=, ≠, ≥, <, ≤, >) for the null and alternative hypotheses. H 0 : μ __ 66 H a : μ __ 66

- H 0 : μ = 66

- H a : μ ≠ 66

We want to test if college students take less than five years to graduate from college, on the average. The null and alternative hypotheses are:

H 0 : μ ≥ 5

H a : μ < 5

We want to test if it takes fewer than 45 minutes to teach a lesson plan. State the null and alternative hypotheses. Fill in the correct symbol ( =, ≠, ≥, <, ≤, >) for the null and alternative hypotheses. H 0 : μ __ 45 H a : μ __ 45

- H 0 : μ ≥ 45

- H a : μ < 45

In an issue of U.S. News and World Report , an article on school standards stated that about half of all students in France, Germany, and Israel take advanced placement exams and a third pass. The same article stated that 6.6% of U.S. students take advanced placement exams and 4.4% pass. Test if the percentage of U.S. students who take advanced placement exams is more than 6.6%. State the null and alternative hypotheses.

H 0 : p ≤ 0.066

H a : p > 0.066

On a state driver’s test, about 40% pass the test on the first try. We want to test if more than 40% pass on the first try. Fill in the correct symbol (=, ≠, ≥, <, ≤, >) for the null and alternative hypotheses. H 0 : p __ 0.40 H a : p __ 0.40

- H 0 : p = 0.40

- H a : p > 0.40

Concept Review

In a hypothesis test, sample data is evaluated in order to arrive at a decision about some type of claim. If certain conditions about the sample are satisfied, then the claim can be evaluated for a population. In a hypothesis test, we:

- Evaluate the null hypothesis, typically denoted with H 0 . The null is not rejected unless the hypothesis test shows otherwise. The null statement must always contain some form of equality (=, ≤, or ≥).

- Always write the alternative hypothesis, typically denoted with H a or H 1 , using less than, greater than, or not equals symbols, i.e., (≠, >, or <).

- If we reject the null hypothesis, then we can assume there is enough evidence to support the alternative hypothesis.

- Never state that a claim is proven true or false. Keep in mind the underlying fact that hypothesis testing is based on probability laws; therefore, we can talk only in terms of non-absolute certainties.

Formula Review

H 0 and H a are contradictory.

- OpenStax, Statistics, Null and Alternative Hypotheses. Provided by : OpenStax. Located at : http://cnx.org/contents/[email protected]:58/Introductory_Statistics . License : CC BY: Attribution

- Introductory Statistics . Authored by : Barbara Illowski, Susan Dean. Provided by : Open Stax. Located at : http://cnx.org/contents/[email protected] . License : CC BY: Attribution . License Terms : Download for free at http://cnx.org/contents/[email protected]

- Simple hypothesis testing | Probability and Statistics | Khan Academy. Authored by : Khan Academy. Located at : https://youtu.be/5D1gV37bKXY . License : All Rights Reserved . License Terms : Standard YouTube License

Hypothesis Testing: Null Hypothesis and Alternative Hypothesis

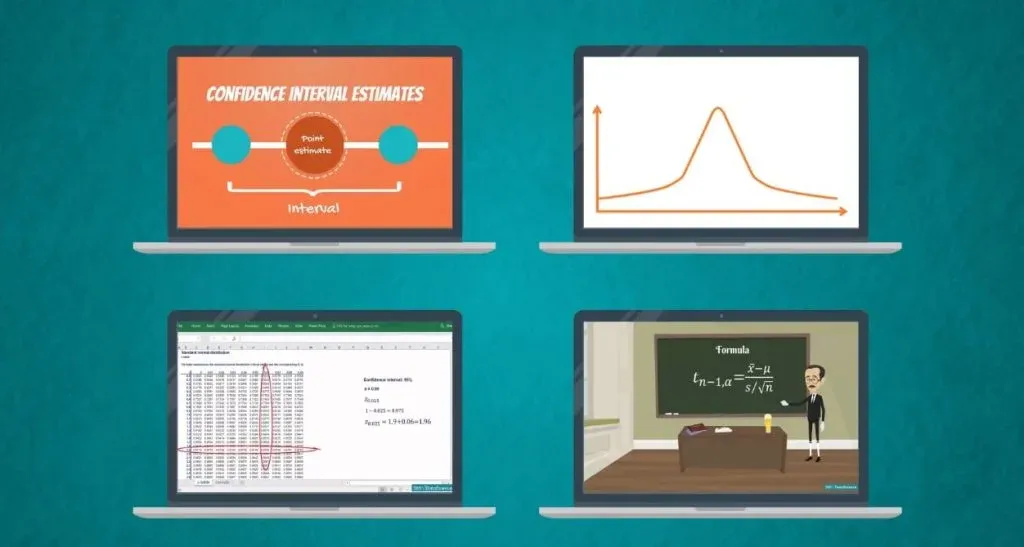


Figuring out exactly what the null hypothesis and the alternative hypotheses are is not a walk in the park. Hypothesis testing is based on the knowledge that you can acquire by going over what we have previously covered about statistics in our blog.

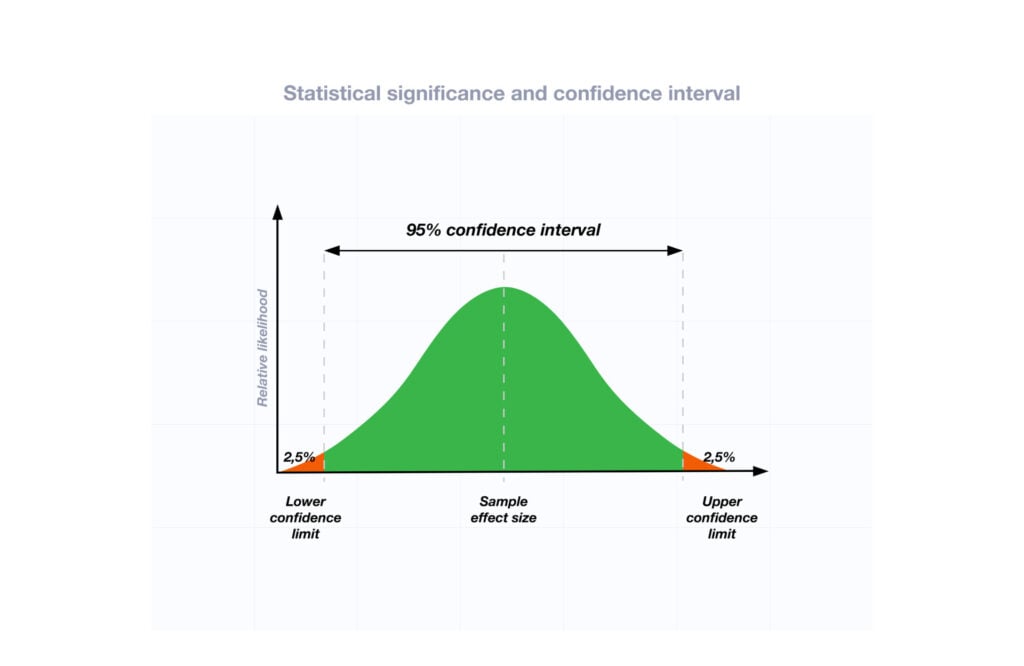

So, if you don’t want to have a hard time keeping up, make sure you have read all the tutorials about confidence intervals , distributions , z-tables and t-tables .


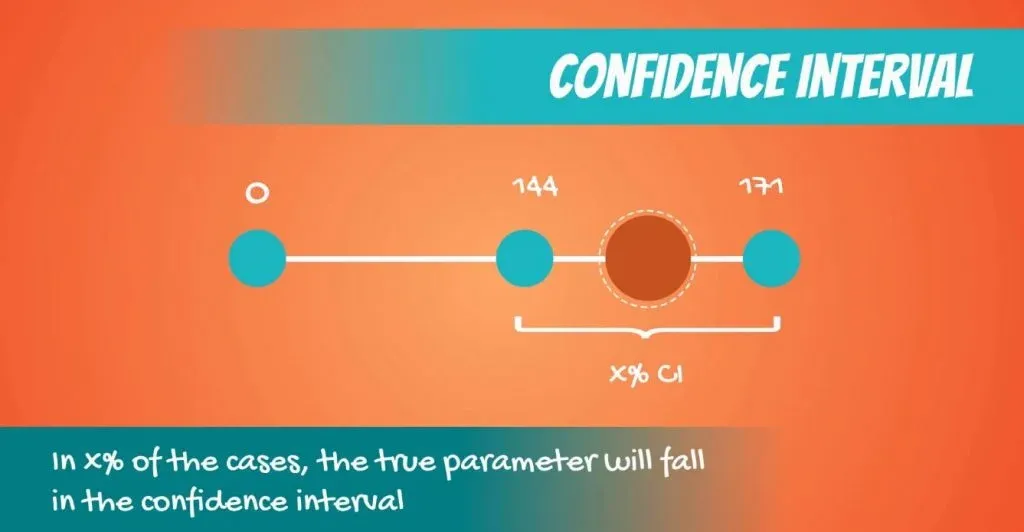

Confidence intervals provide us with an estimation of where the parameters are located. You can obtain them with our confidence interval calculator and learn more about them in the related article.

However, when we are making a decision, we need a yes or no answer. The correct approach, in this case, is to use a test .

Here we will start learning about one of the fundamental tasks in statistics - hypothesis testing !

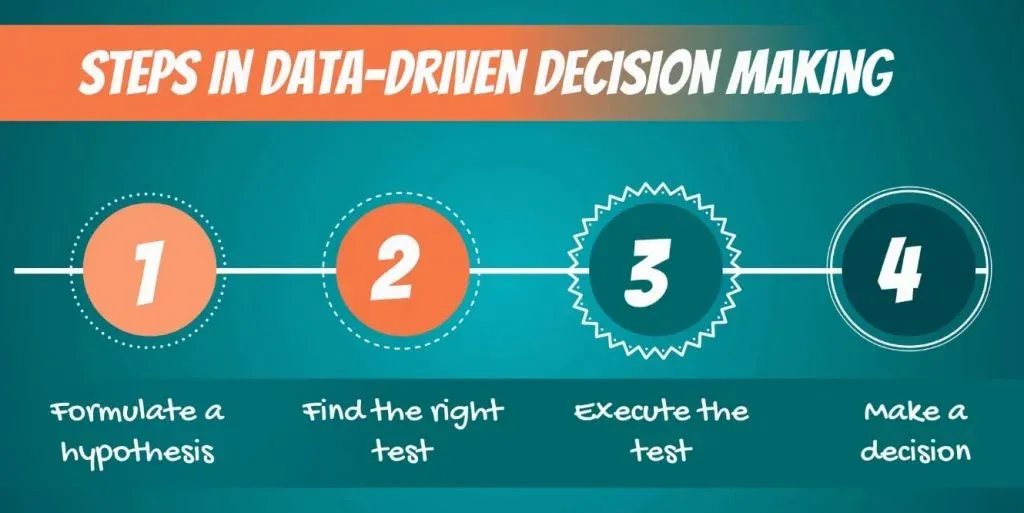

The Hypothesis Testing Process

First off, let’s talk about data-driven decision-making. It consists of the following steps:

- First, we must formulate a hypothesis .

- After doing that, we have to find the right test for our hypothesis .

- Then, we execute the test.

- Finally, we make a decision based on the result.

Let’s start from the beginning.

What is a Hypothesis?

Though there are many ways to define it, the most intuitive must be:

“A hypothesis is an idea that can be tested.”

This is not the formal definition, but it explains the point very well.

So, if we say that apples in New York are expensive, this is an idea or a statement. However, it is not testable, until we have something to compare it with.

For instance, if we define expensive as any price higher than $1.75 per pound, then it immediately becomes a hypothesis.

What Cannot Be a Hypothesis?

An example may be: would the USA do better or worse under a Clinton administration, compared to a Trump administration? Statistically speaking, this is an idea , but there is no data to test it. Therefore, it cannot be a hypothesis of a statistical test.

Actually, it is more likely to be a topic of another discipline.

Conversely, in statistics, we may compare different US presidencies that have already been completed. For example, the Obama administration and the Bush administration, as we have data on both.

A Two-Sided Test

Alright, let’s get out of politics and get into hypotheses . Here’s a simple topic that CAN be tested.

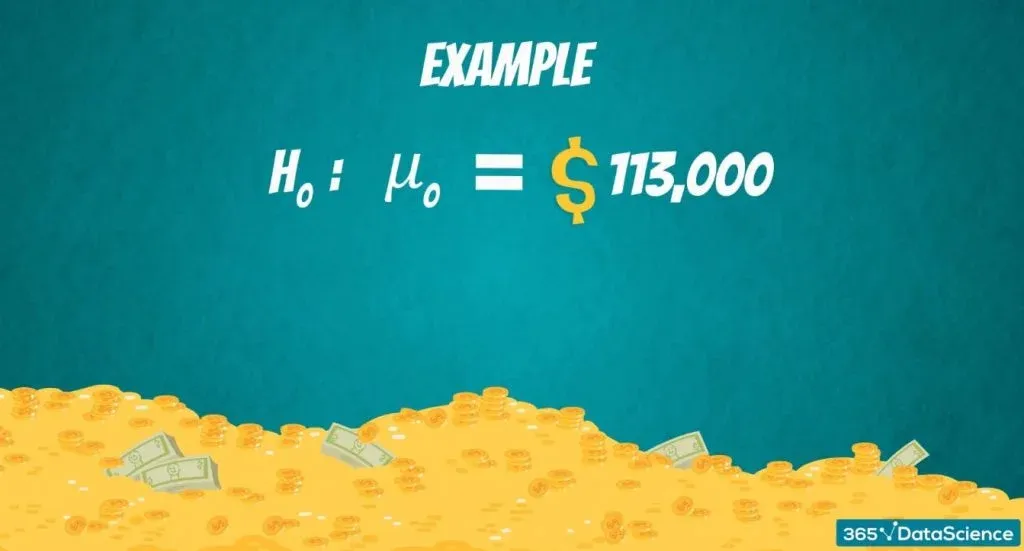

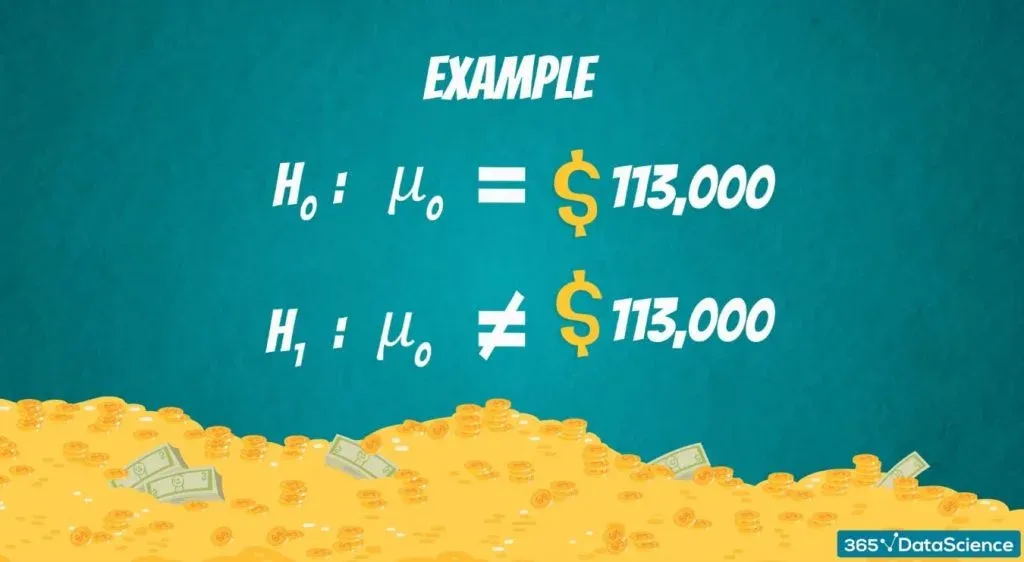

According to Glassdoor (the popular salary information website), the mean data scientist salary in the US is 113,000 dollars.

So, we want to test if their estimate is correct.

The Null and Alternative Hypotheses

There are two hypotheses that are made: the null hypothesis , denoted H 0 , and the alternative hypothesis , denoted H 1 or H A .

The null hypothesis is the one to be tested and the alternative is everything else. In our example:

The null hypothesis would be: The mean data scientist salary is 113,000 dollars.

While the alternative : The mean data scientist salary is not 113,000 dollars.
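In symbols, with μ standing for the population mean salary, the two statements can be sketched as below. The test statistic shown is the usual one-sample z statistic, added here as an assumption about how such a test would typically be run. The sample mean, the sample standard deviation s, and the sample size n are symbols introduced for illustration.

```latex
H_0:\ \mu = 113{,}000
\qquad \text{versus} \qquad
H_1:\ \mu \neq 113{,}000,
\qquad
z = \frac{\bar{x} - 113{,}000}{s / \sqrt{n}}
```

Because the alternative uses ≠, values of z far from zero in either direction count as evidence against the null hypothesis.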


An Example of a One-Sided Test

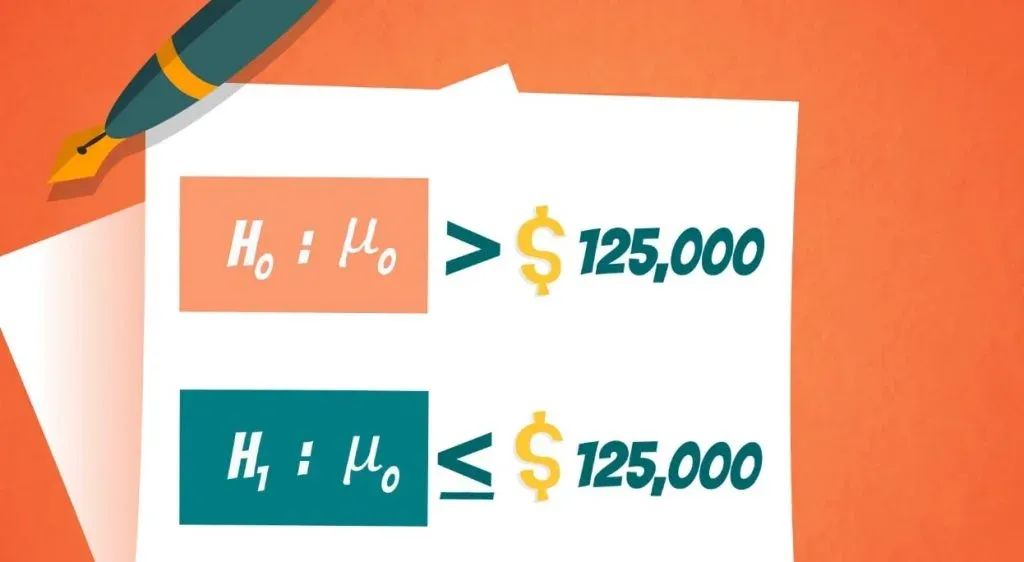

You can also form one-sided or one-tailed tests.

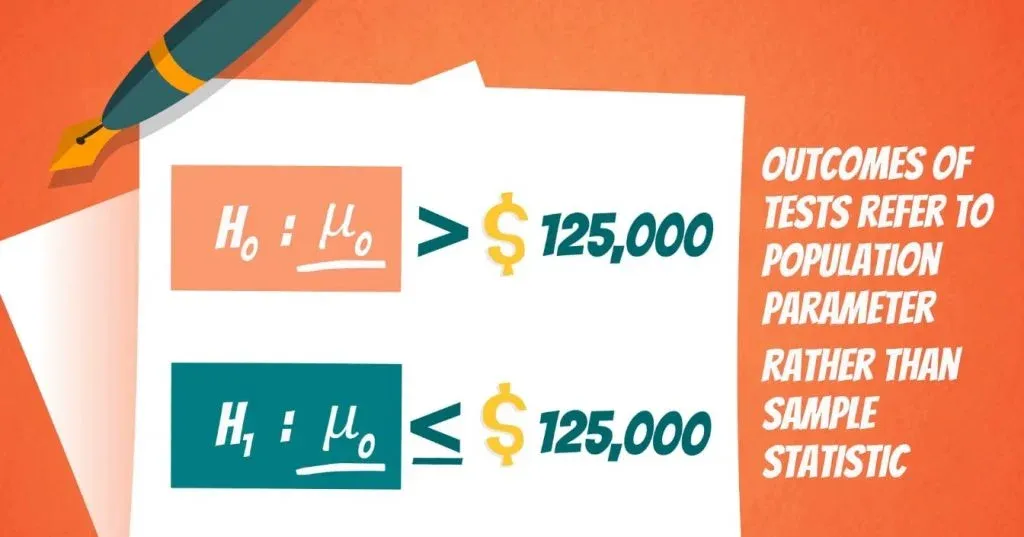

Say your friend, Paul, told you that he thinks data scientists earn more than 125,000 dollars per year. You doubt him, so you design a test to see who’s right.

The null hypothesis of this test would be: The mean data scientist salary is at least 125,000 dollars. This is Paul’s claim, stated so that it includes the equality, as a null hypothesis must.

The alternative will cover everything else, thus: The mean data scientist salary is less than 125,000 dollars.

Important: The outcomes of tests refer to the population parameter rather than the sample statistic! So, the result that we get is for the population.

Important: Another crucial consideration is that, generally, the researcher is trying to reject the null hypothesis . Think about the null hypothesis as the status quo and the alternative as the change or innovation that challenges that status quo. In our example, Paul was representing the status quo, which we were challenging.

Let’s go over it once more. In statistics, the null hypothesis is the statement we are trying to reject. Therefore, the null hypothesis is the present state of affairs, while the alternative is our personal opinion.

Why Hypothesis Testing Works

Right now, you may be feeling a little puzzled. This is normal because this whole concept is counter-intuitive at the beginning. However, there is an extremely easy way to continue your journey of exploring it. By diving into the linked tutorial, you will find out why hypothesis testing actually works.


Iliya Valchanov

Co-founder of 365 Data Science



Once you have developed a clear and focused research question or set of research questions, you’ll be ready to conduct further research, a literature review, on the topic to help you make an educated guess about the answer to your question(s). This educated guess is called a hypothesis.

In research, there are two types of hypotheses: null and alternative. They work as a complementary pair, each stating that the other is wrong.

- Null Hypothesis (H 0 ) – This can be thought of as the implied hypothesis. “Null” meaning “nothing.” This hypothesis states that there is no difference between groups or no relationship between variables. The null hypothesis is a presumption of status quo or no change.

- Alternative Hypothesis (H a ) – This is also known as the claim. This hypothesis should state what you expect the data to show, based on your research on the topic. This is your answer to your research question.

- Null Hypothesis: H 0 : There is no difference in the salary of factory workers based on gender.

- Alternative Hypothesis: H a : Male factory workers have a higher salary than female factory workers.

- Null Hypothesis: H 0 : There is no relationship between height and shoe size.

- Alternative Hypothesis: H a : There is a positive relationship between height and shoe size.

- Null Hypothesis: H 0 : Experience on the job has no impact on the quality of a brick mason’s work.

- Alternative Hypothesis: H a : The quality of a brick mason’s work is influenced by on-the-job experience.


- Last Updated: May 29, 2024 9:48 AM

- URL: https://resources.nu.edu/statsresources


10.1 - Setting the Hypotheses: Examples

A significance test examines whether the null hypothesis provides a plausible explanation of the data. The null hypothesis itself does not involve the data. It is a statement about a parameter (a numerical characteristic of the population). These population values might be proportions or means or differences between means or proportions or correlations or odds ratios or any other numerical summary of the population. The alternative hypothesis is typically the research hypothesis of interest. Here are some examples.

Example 10.2: Hypotheses with One Sample of One Categorical Variable

About 10% of the human population is left-handed. Suppose a researcher at Penn State speculates that students in the College of Arts and Architecture are more likely to be left-handed than people found in the general population. We only have one sample since we will be comparing a population proportion based on a sample value to a known population value.

- Research Question : Are artists more likely to be left-handed than people found in the general population?

- Response Variable : Classification of the student as either right-handed or left-handed

State Null and Alternative Hypotheses

- Null Hypothesis : Students in the College of Arts and Architecture are no more likely to be left-handed than people in the general population (population percent of left-handed students in the College of Arts and Architecture = 10% or p = .10).

- Alternative Hypothesis : Students in the College of Arts and Architecture are more likely to be left-handed than people in the general population (population percent of left-handed students in the College of Arts and Architecture > 10% or p > .10). This is a one-sided alternative hypothesis.
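The one-sided test in Example 10.2 can be sketched in a few lines of Python. This is only an illustration: the sample counts (18 left-handed students out of 100) and the function name `binomial_upper_tail` are made up for the example and are not part of the original text. It uses an exact binomial calculation of how likely a result at least this extreme would be if p = .10 were true.

```python
from math import comb


def binomial_upper_tail(successes: int, n: int, p0: float) -> float:
    """Return P(X >= successes) for X ~ Binomial(n, p0).

    This is the one-sided p-value for H0: p = p0 against Ha: p > p0.
    """
    return sum(
        comb(n, k) * p0**k * (1 - p0) ** (n - k)
        for k in range(successes, n + 1)
    )


# Hypothetical sample (an assumption): 18 left-handed students out of 100.
p_value = binomial_upper_tail(successes=18, n=100, p0=0.10)
print(f"one-sided p-value = {p_value:.4f}")
```

A small p-value here would count as evidence against the null hypothesis p = .10 in favor of the one-sided alternative p > .10.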

Example 10.3: Hypotheses with One Sample of One Measurement Variable

A generic brand of the anti-histamine Diphenhydramine markets a capsule with a 50 milligram dose. The manufacturer is worried that the machine that fills the capsules has come out of calibration and is no longer creating capsules with the appropriate dosage.

- Research Question : Does the data suggest that the population mean dosage of this brand is different than 50 mg?

- Response Variable : dosage of the active ingredient found by a chemical assay.

- Null Hypothesis : On the average, the dosage sold under this brand is 50 mg (population mean dosage = 50 mg).

- Alternative Hypothesis : On the average, the dosage sold under this brand is not 50 mg (population mean dosage ≠ 50 mg). This is a two-sided alternative hypothesis.
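As a rough sketch of how the two-sided hypotheses in Example 10.3 could be checked, the Python snippet below computes a one-sample t statistic. The ten assay values are invented for illustration, and the critical value 2.262 is the standard two-tailed t value for α = .05 with 9 degrees of freedom; neither comes from the original example.

```python
from math import sqrt
from statistics import mean, stdev


def one_sample_t(sample, mu0):
    """t statistic for H0: population mean = mu0."""
    n = len(sample)
    standard_error = stdev(sample) / sqrt(n)  # stdev uses the n - 1 denominator
    return (mean(sample) - mu0) / standard_error


# Hypothetical assay results in mg (an assumption, not real data).
doses_mg = [49.2, 50.1, 48.7, 49.5, 50.3, 49.0, 48.9, 49.8, 49.4, 49.1]

t_stat = one_sample_t(doses_mg, mu0=50.0)
T_CRITICAL = 2.262  # two-tailed, alpha = .05, df = 9

# Two-sided alternative: reject H0 when |t| exceeds the critical value.
reject_h0 = abs(t_stat) > T_CRITICAL
print(f"t = {t_stat:.2f}, reject H0: {reject_h0}")
```

Because the alternative is two-sided, the absolute value of the statistic is compared with the critical value, so a mean dosage that is too low or too high can both lead to rejection.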

Example 10.4: Hypotheses with Two Samples of One Categorical Variable

Many people are starting to prefer vegetarian meals on a regular basis. Specifically, a researcher believes that females are more likely than males to eat vegetarian meals on a regular basis.

- Research Question : Does the data suggest that females are more likely than males to eat vegetarian meals on a regular basis?

- Response Variable : Classification of whether or not a person eats vegetarian meals on a regular basis

- Explanatory (Grouping) Variable: Sex

- Null Hypothesis : There is no sex effect regarding those who eat vegetarian meals on a regular basis (population percent of females who eat vegetarian meals on a regular basis = population percent of males who eat vegetarian meals on a regular basis or p females = p males ).

- Alternative Hypothesis : Females are more likely than males to eat vegetarian meals on a regular basis (population percent of females who eat vegetarian meals on a regular basis > population percent of males who eat vegetarian meals on a regular basis or p females > p males ). This is a one-sided alternative hypothesis.
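One common way to test hypotheses of this form is a pooled two-proportion z test. The sketch below assumes that approach; the survey counts and the function name `two_proportion_z` are hypothetical and only serve to show how p females and p males enter the calculation.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z(x1, n1, x2, n2):
    """Pooled z test for H0: p1 = p2 against Ha: p1 > p2.

    Returns the z statistic and the one-sided (upper-tail) p-value.
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)  # common proportion assumed under H0
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / standard_error
    p_value = 1 - NormalDist().cdf(z)
    return z, p_value


# Hypothetical survey (an assumption): 45 of 200 females and 30 of 200 males
# report eating vegetarian meals on a regular basis.
z, p_value = two_proportion_z(x1=45, n1=200, x2=30, n2=200)
print(f"z = {z:.2f}, one-sided p-value = {p_value:.4f}")
```

The upper tail is used because the alternative hypothesis is one-sided (p females > p males).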

Example 10.5: Hypotheses with Two Samples of One Measurement Variable

Obesity is a major health problem today. Research is starting to show that people may be able to lose more weight on a low carbohydrate diet than on a low fat diet.

- Research Question : Does the data suggest that, on the average, people are able to lose more weight on a low carbohydrate diet than on a low fat diet?

- Response Variable : Weight loss (pounds)

- Explanatory (Grouping) Variable : Type of diet

- Null Hypothesis : There is no difference in the mean amount of weight loss when comparing a low carbohydrate diet with a low fat diet (population mean weight loss on a low carbohydrate diet = population mean weight loss on a low fat diet).

- Alternative Hypothesis : The mean weight loss should be greater for those on a low carbohydrate diet when compared with those on a low fat diet (population mean weight loss on a low carbohydrate diet > population mean weight loss on a low fat diet). This is a one-sided alternative hypothesis.

Example 10.6: Hypotheses about the relationship between Two Categorical Variables

- Research Question : Do the odds of having a stroke increase if you inhale second-hand smoke? In a case-control study, non-smoking stroke patients and controls of the same age and occupation are asked if someone in their household smokes.

- Variables : There are two different categorical variables (Stroke patient vs control and whether the subject lives in the same household as a smoker). Living with a smoker (or not) is the natural explanatory variable and having a stroke (or not) is the natural response variable in this situation.

- Null Hypothesis : There is no relationship between whether or not a person has a stroke and whether or not a person lives with a smoker (odds ratio between stroke and second-hand smoke situation is = 1).

- Alternative Hypothesis : There is a relationship between whether or not a person has a stroke and whether or not a person lives with a smoker (odds ratio between stroke and second-hand smoke situation is > 1). This is a one-tailed alternative.

This research question might also be addressed like Example 10.4 by making the hypotheses about comparing the proportion of stroke patients that live with smokers to the proportion of controls that live with smokers.

Example 10.7: Hypotheses about the relationship between Two Measurement Variables

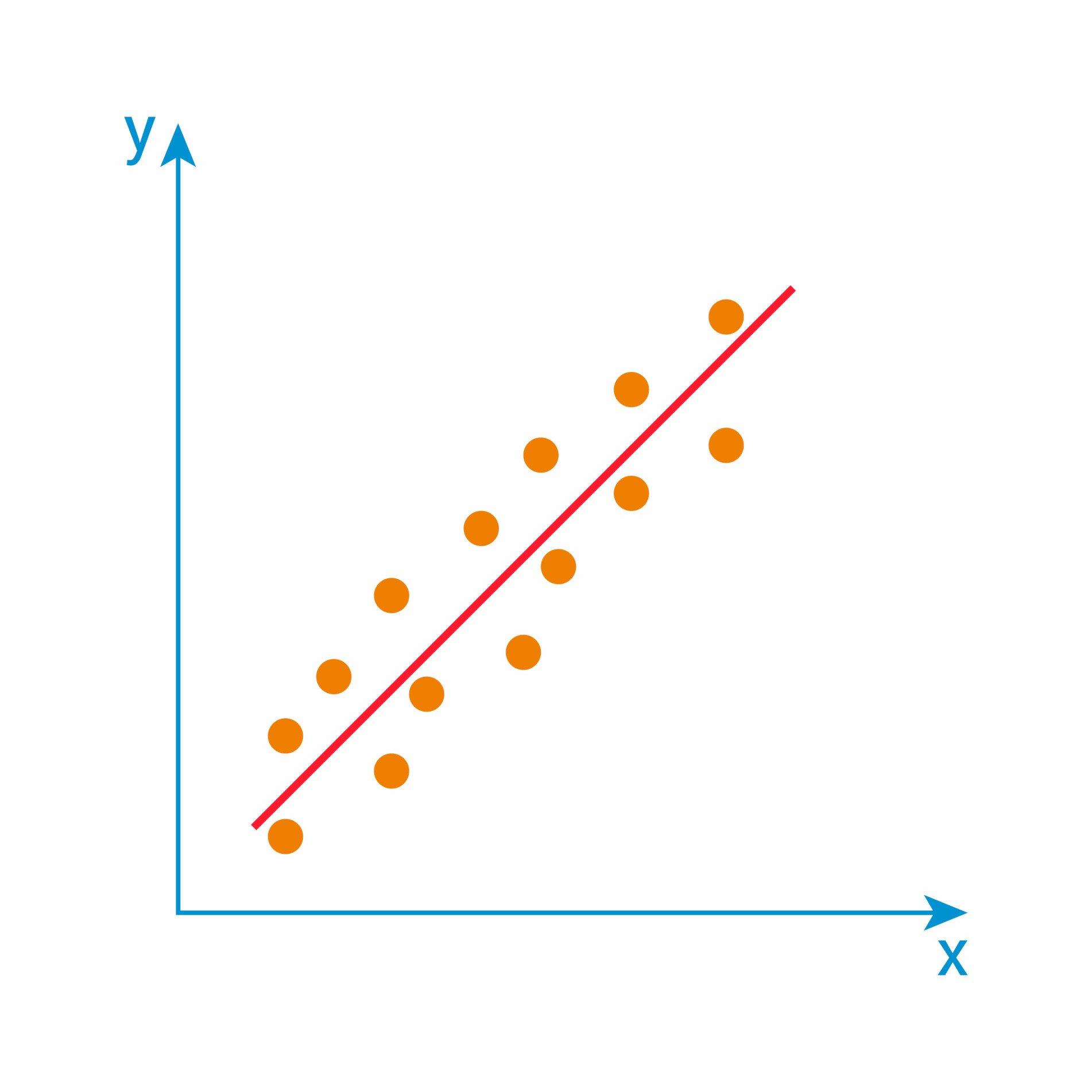

- Research Question : A financial analyst believes there might be a positive association between the change in a stock's price and the amount of the stock purchased by non-management employees the previous day (stock trading by management being under "insider-trading" regulatory restrictions).

- Variables : Daily price change information (the response variable) and previous day stock purchases by non-management employees (explanatory variable). These are two different measurement variables.

- Null Hypothesis : The correlation between the daily stock price change (\$) and the daily stock purchases by non-management employees (\$) = 0.

- Alternative Hypothesis : The correlation between the daily stock price change (\$) and the daily stock purchases by non-management employees (\$) > 0. This is a one-sided alternative hypothesis.

Example 10.8: Hypotheses about comparing the relationship between Two Measurement Variables in Two Samples

- Research Question : Is there a linear relationship between the amount of the bill (\$) at a restaurant and the tip (\$) that was left? Is the strength of this association different for family restaurants than for fine dining restaurants?

- Variables : There are two different measurement variables. The size of the tip would depend on the size of the bill so the amount of the bill would be the explanatory variable and the size of the tip would be the response variable.

- Null Hypothesis : The correlation between the amount of the bill (\$) at a restaurant and the tip (\$) that was left is the same at family restaurants as it is at fine dining restaurants.

- Alternative Hypothesis : The correlation between the amount of the bill (\$) at a restaurant and the tip (\$) that was left is different at family restaurants than it is at fine dining restaurants. This is a two-sided alternative hypothesis.

Null Hypothesis Definition and Examples, How to State


Why is it Called the “Null”?

The word “null” in this context means that it’s a commonly accepted fact that researchers work to nullify . It doesn’t mean that the statement is null (i.e. amounts to nothing) itself! (Perhaps the term should be called the “nullifiable hypothesis” as that might cause less confusion).

Why Do I need to Test it? Why not just prove an alternate one?

The short answer is that, as a scientist, you are required to; it’s part of the scientific process. Science uses a battery of processes to prove or disprove theories, making sure that any new hypothesis has no flaws. Including both a null and an alternate hypothesis is one safeguard to ensure your research isn’t flawed. Not including the null hypothesis in your research is considered very bad practice by the scientific community. If you set out to prove an alternate hypothesis without considering the null, you are likely setting yourself up for failure. At a minimum, your experiment will likely not be taken seriously.

- Null hypothesis : H 0 : The world is flat.

- Alternate hypothesis: The world is round.

Several scientists, including Copernicus, set out to disprove the null hypothesis. This eventually led to the rejection of the null and the acceptance of the alternate. Most people accepted it; the ones that didn’t created the Flat Earth Society! What would have happened if Copernicus had not disproved the null and had merely proved the alternate? No one would have listened to him. In order to change people’s thinking, he first had to prove that their thinking was wrong.

How to State the Null Hypothesis from a Word Problem

You’ll be asked to convert a word problem into a hypothesis statement in statistics that will include a null hypothesis and an alternate hypothesis . Breaking your problem into a few small steps makes these problems much easier to handle.

Step 2: Convert the hypothesis to math . Remember that the average is sometimes written as μ.

H 1 : μ > 8.2

Broken down into (somewhat) English, that’s H 1 (The hypothesis): μ (the average) > (is greater than) 8.2

Step 3: State what will happen if the hypothesis doesn’t come true. If the recovery time isn’t greater than 8.2 weeks, there are only two possibilities, that the recovery time is equal to 8.2 weeks or less than 8.2 weeks.

H 0 : μ ≤ 8.2

Broken down again into English, that’s H 0 (The null hypothesis): μ (the average) ≤ (is less than or equal to) 8.2

How to State the Null Hypothesis: Part Two

But what if the researcher doesn’t have any idea what will happen?

Example Problem: A researcher is studying the effects of a radical exercise program on knee surgery patients. There is a good chance the therapy will improve recovery time, but there’s also the possibility it will make it worse. The average recovery time for knee surgery patients is 8.2 weeks.

Step 1: State what will happen if the experiment doesn’t make any difference. That’s the null hypothesis–that nothing will happen. In this experiment, if nothing happens, then the recovery time will stay at 8.2 weeks.

H 0 : μ = 8.2

Broken down into English, that’s H 0 (The null hypothesis): μ (the average) = (is equal to) 8.2

Step 2: Figure out the alternate hypothesis . The alternate hypothesis is the opposite of the null hypothesis. In other words, what happens if our experiment makes a difference?

H 1 : μ ≠ 8.2

In English again, that’s H 1 (The alternate hypothesis): μ (the average) ≠ (is not equal to) 8.2

That’s How to State the Null Hypothesis!


8.2 Null and Alternative Hypotheses

Learning objectives.

- Describe hypothesis testing in general and in practice.

A hypothesis test begins by considering two hypotheses . They are called the null hypothesis and the alternative hypothesis . These hypotheses contain opposing viewpoints and only one of these hypotheses is true. The hypothesis test determines which hypothesis is most likely true.

- The null hypothesis is a claim that a population parameter equals some value. For example, [latex]H_0: \mu=5[/latex].

- The alternative hypothesis is a claim that a population parameter is greater than, less than, or not equal to some value. For example, [latex]H_a: \mu>5[/latex], [latex]H_a: \mu<5[/latex], or [latex]H_a: \mu \neq 5[/latex]. The form of the alternative hypothesis depends on the wording of the hypothesis test.

- An alternative notation for [latex]H_a[/latex] is [latex]H_1[/latex].

Because the null and alternative hypotheses are contradictory, we must examine evidence to decide if we have enough evidence to reject the null hypothesis or not reject the null hypothesis. The evidence is in the form of sample data. After we have determined which hypothesis the sample data supports, we make a decision. There are two options for a decision . They are “ reject [latex]H_0[/latex] ” if the sample information favors the alternative hypothesis or “ do not reject [latex]H_0[/latex] ” if the sample information is insufficient to reject the null hypothesis.

Watch this video: Simple hypothesis testing | Probability and Statistics | Khan Academy by Khan Academy [6:24]

A candidate in a local election claims that 30% of registered voters voted in a recent election. Information provided by the returning office suggests that the percentage is higher than the 30% claimed.

The parameter under study is the proportion of registered voters, so we use [latex]p[/latex] in the statements of the hypotheses. The hypotheses are

[latex]\begin{eqnarray*} \\ H_0: & & p=30\% \\ \\ H_a: & & p \gt 30\% \\ \\ \end{eqnarray*}[/latex]

- The null hypothesis [latex]H_0[/latex] is the claim that the proportion of registered voters that voted equals 30%.

- The alternative hypothesis [latex]H_a[/latex] is the claim that the proportion of registered voters that voted is greater than (i.e., higher than) 30%.

A medical researcher believes that a new medicine reduces cholesterol by 25%. A medical trial suggests that the percent reduction is different than claimed. State the null and alternative hypotheses.

[latex]\begin{eqnarray*} H_0: & & p=25\% \\ \\ H_a: & & p \neq 25\% \end{eqnarray*}[/latex]

We want to test whether the mean GPA of students in American colleges is different from 2.0 (out of 4.0). State the null and alternative hypotheses.

[latex]\begin{eqnarray*} H_0: & & \mu=2 \mbox{ points} \\ \\ H_a: & & \mu \neq 2 \mbox{ points} \end{eqnarray*}[/latex]

We want to test whether or not the mean height of eighth graders is 66 inches. State the null and alternative hypotheses.

[latex]\begin{eqnarray*} H_0: & & \mu=66 \mbox{ inches} \\ \\ H_a: & & \mu \neq 66 \mbox{ inches} \end{eqnarray*}[/latex]

We want to test if college students take less than five years to graduate from college, on the average. The null and alternative hypotheses are:

[latex]\begin{eqnarray*} H_0: & & \mu=5 \mbox{ years} \\ \\ H_a: & & \mu \lt 5 \mbox{ years} \end{eqnarray*}[/latex]

We want to test if it takes fewer than 45 minutes to teach a lesson plan. State the null and alternative hypotheses.

[latex]\begin{eqnarray*} H_0: & & \mu=45 \mbox{ minutes} \\ \\ H_a: & & \mu \lt 45 \mbox{ minutes} \end{eqnarray*}[/latex]

In an issue of U.S. News and World Report , an article on school standards stated that about half of all students in France, Germany, and Israel take advanced placement exams and a third pass. The same article stated that 6.6% of U.S. students take advanced placement exams and 4.4% pass. Test if the percentage of U.S. students who take advanced placement exams is more than 6.6%. State the null and alternative hypotheses.

[latex]\begin{eqnarray*} H_0: & & p=6.6\% \\ \\ H_a: & & p \gt 6.6\% \end{eqnarray*}[/latex]

On a state driver’s test, about 40% pass the test on the first try. We want to test if more than 40% pass on the first try. State the null and alternative hypotheses.

[latex]\begin{eqnarray*} H_0: & & p=40\% \\ \\ H_a: & & p \gt 40\% \end{eqnarray*}[/latex]

Concept Review

In a hypothesis test, sample data is evaluated in order to arrive at a decision about some type of claim. If certain conditions about the sample are satisfied, then the claim can be evaluated for a population. In a hypothesis test, we evaluate the null hypothesis, typically denoted with [latex]H_0[/latex]. The null hypothesis is not rejected unless the hypothesis test shows otherwise. The null hypothesis always contains an equal sign ([latex]=[/latex]). Always write the alternative hypothesis, typically denoted with [latex]H_a[/latex] or [latex]H_1[/latex], using less than, greater than, or not equals symbols ([latex]\lt[/latex], [latex]\gt[/latex], [latex]\neq[/latex]). If we reject the null hypothesis, then we can assume there is enough evidence to support the alternative hypothesis. But we can never state that a claim is proven true or false. All we can conclude from the hypothesis test is which of the hypotheses is most likely true. Because the underlying facts about hypothesis testing are based on probability laws, we can talk only in terms of non-absolute certainties.

Attribution

“ 9.1 Null and Alternative Hypotheses “ in Introductory Statistics by OpenStax is licensed under a Creative Commons Attribution 4.0 International License.

Introduction to Statistics Copyright © 2022 by Valerie Watts is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License , except where otherwise noted.


What is an Alternative Hypothesis in Statistics?

Often in statistics we want to test whether or not some assumption is true about a population parameter .

For example, we might assume that the mean weight of a certain population of turtles is 300 pounds.

To determine if this assumption is true, we’ll go out and collect a sample of turtles and weigh each of them. Using this sample data, we’ll conduct a hypothesis test .

The first step in a hypothesis test is to define the null and alternative hypotheses .

These two hypotheses need to be mutually exclusive, so if one is true then the other must be false.

These two hypotheses are defined as follows:

Null hypothesis (H 0 ): The sample data is consistent with the prevailing belief about the population parameter.

Alternative hypothesis (H A ): The sample data suggests that the assumption made in the null hypothesis is not true. In other words, there is some non-random cause influencing the data.

Types of Alternative Hypotheses

There are two types of alternative hypotheses:

A one-tailed hypothesis involves making a “greater than” or “less than” statement. For example, suppose we assume the mean height of a male in the U.S. is greater than or equal to 70 inches.

The null and alternative hypotheses in this case would be:

- Null hypothesis: µ ≥ 70 inches

- Alternative hypothesis: µ < 70 inches

A two-tailed hypothesis involves making an “equal to” or “not equal to” statement. For example, suppose we assume the mean height of a male in the U.S. is equal to 70 inches.

- Null hypothesis: µ = 70 inches

- Alternative hypothesis: µ ≠ 70 inches

Note: The “equal” sign is always included in the null hypothesis, whether it is =, ≥, or ≤.
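The difference between one-tailed and two-tailed alternatives shows up in how the p-value is computed. The sketch below assumes a test statistic that follows a standard normal distribution (a z statistic), which the text above does not specify; the function name `z_p_value` and the labels `"less"`, `"greater"`, and `"two-sided"` are illustrative choices.

```python
from statistics import NormalDist


def z_p_value(z: float, alternative: str) -> float:
    """p-value for a z statistic under the given alternative hypothesis.

    alternative: "less" (Ha: mu < mu0), "greater" (Ha: mu > mu0),
    or "two-sided" (Ha: mu != mu0).
    """
    std_normal = NormalDist()
    if alternative == "less":
        return std_normal.cdf(z)  # lower tail only
    if alternative == "greater":
        return 1 - std_normal.cdf(z)  # upper tail only
    if alternative == "two-sided":
        return 2 * (1 - std_normal.cdf(abs(z)))  # both tails
    raise ValueError(f"unknown alternative: {alternative!r}")


# Same statistic, different alternatives, different p-values.
for alt in ("less", "greater", "two-sided"):
    print(alt, round(z_p_value(-1.5, alt), 4))
```

For the same statistic, the two-tailed p-value is twice the smaller one-tailed p-value, which is why the choice of alternative should be made before looking at the data.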

Examples of Alternative Hypotheses

The following examples illustrate how to define the null and alternative hypotheses for different research problems.

Example 1: A biologist wants to test if the mean weight of a certain population of turtles is different from the widely-accepted mean weight of 300 pounds.

The null and alternative hypothesis for this research study would be:

- Null hypothesis: µ = 300 pounds

- Alternative hypothesis: µ ≠ 300 pounds

If we reject the null hypothesis, this means we have sufficient evidence from the sample data to say that the true mean weight of this population of turtles is different from 300 pounds.

Example 2: An engineer wants to test whether a new battery can produce higher mean watts than the current industry standard of 50 watts.

- Null hypothesis: µ ≤ 50 watts

- Alternative hypothesis: µ > 50 watts

If we reject the null hypothesis, this means we have sufficient evidence from the sample data to say that the true mean watts produced by the new battery is greater than the current industry standard of 50 watts.

Example 3: A botanist wants to know if a new gardening method produces less waste than the standard gardening method that produces 20 pounds of waste.

- Null hypothesis: µ ≥ 20 pounds

- Alternative hypothesis: µ < 20 pounds

If we reject the null hypothesis, this means we have sufficient evidence from the sample data to say that the true mean weight produced by this new gardening method is less than 20 pounds.

When to Reject the Null Hypothesis

Whenever we conduct a hypothesis test, we use sample data to calculate a test-statistic and a corresponding p-value.

If the p-value is less than some significance level (common choices are 0.10, 0.05, and 0.01), then we reject the null hypothesis.

This means we have sufficient evidence from the sample data to say that the assumption made by the null hypothesis is not true.

If the p-value is not less than some significance level, then we fail to reject the null hypothesis.

This means our sample data did not provide us with evidence that the assumption made by the null hypothesis was not true.
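The decision rule described above is simple enough to write down directly. The following is a minimal sketch; the function name `decide` and the default significance level of 0.05 are illustrative choices, not something prescribed by the text.

```python
def decide(p_value: float, alpha: float = 0.05) -> str:
    """Apply the decision rule: reject H0 only when p-value < alpha."""
    if not 0.0 <= p_value <= 1.0:
        raise ValueError("a p-value must lie between 0 and 1")
    return "reject H0" if p_value < alpha else "fail to reject H0"


print(decide(0.03))              # p-value below the default alpha of 0.05
print(decide(0.03, alpha=0.01))  # same p-value, stricter significance level
print(decide(0.20))              # p-value above alpha
```

Note that the same p-value can lead to different decisions at different significance levels, so the level should be chosen before the test is run.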

Additional Resource: An Explanation of P-Values and Statistical Significance


Hey there. My name is Zach Bobbitt. I have a Masters of Science degree in Applied Statistics and I’ve worked on machine learning algorithms for professional businesses in both healthcare and retail. I’m passionate about statistics, machine learning, and data visualization and I created Statology to be a resource for both students and teachers alike. My goal with this site is to help you learn statistics through using simple terms, plenty of real-world examples, and helpful illustrations.


Frequently asked questions

What are null and alternative hypotheses?

Null and alternative hypotheses are used in statistical hypothesis testing . The null hypothesis of a test always predicts no effect or no relationship between variables, while the alternative hypothesis states your research prediction of an effect or relationship.

Frequently asked questions: Statistics

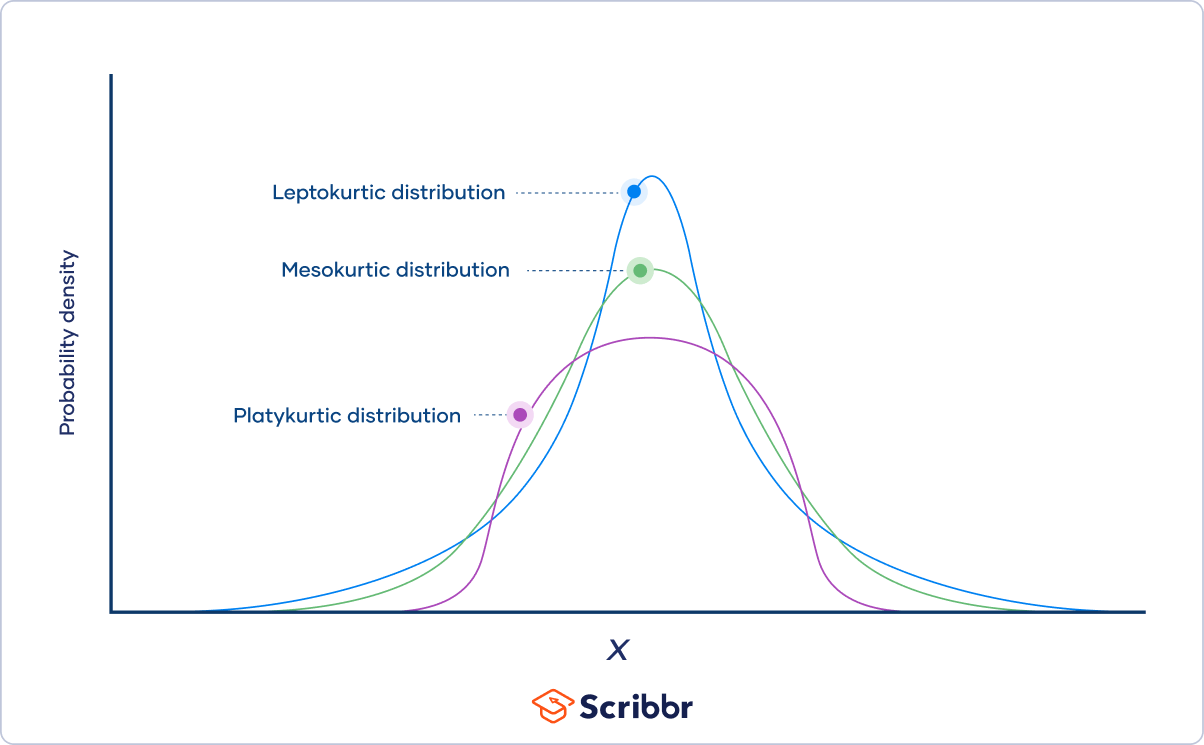

As the degrees of freedom increase, Student’s t distribution becomes less leptokurtic , meaning that the probability of extreme values decreases. The distribution becomes more and more similar to a standard normal distribution .

The three categories of kurtosis are:

- Mesokurtosis : An excess kurtosis of 0. Normal distributions are mesokurtic.

- Platykurtosis : A negative excess kurtosis. Platykurtic distributions are thin-tailed, meaning that they have few outliers .

- Leptokurtosis : A positive excess kurtosis. Leptokurtic distributions are fat-tailed, meaning that they have many outliers.

Probability distributions belong to two broad categories: discrete probability distributions and continuous probability distributions . Within each category, there are many types of probability distributions.

Probability is the relative frequency over an infinite number of trials.

For example, the probability of a coin landing on heads is .5, meaning that if you flip the coin an infinite number of times, it will land on heads half the time.

Since doing something an infinite number of times is impossible, relative frequency is often used as an estimate of probability. If you flip a coin 1000 times and get 507 heads, the relative frequency, .507, is a good estimate of the probability.
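A quick simulation can illustrate how relative frequency approaches probability as the number of trials grows. This is a sketch using Python's pseudo-random generator with a fixed seed; the function name `relative_frequency_of_heads` is made up for the example, and the exact frequencies depend on the seed.

```python
import random


def relative_frequency_of_heads(n_flips: int, seed: int = 0) -> float:
    """Simulate n_flips fair coin flips and return the share of heads."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    heads = sum(rng.random() < 0.5 for _ in range(n_flips))
    return heads / n_flips


# The estimate tends to settle near .5 as the number of flips grows.
for n in (10, 1_000, 100_000):
    print(n, relative_frequency_of_heads(n))
```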

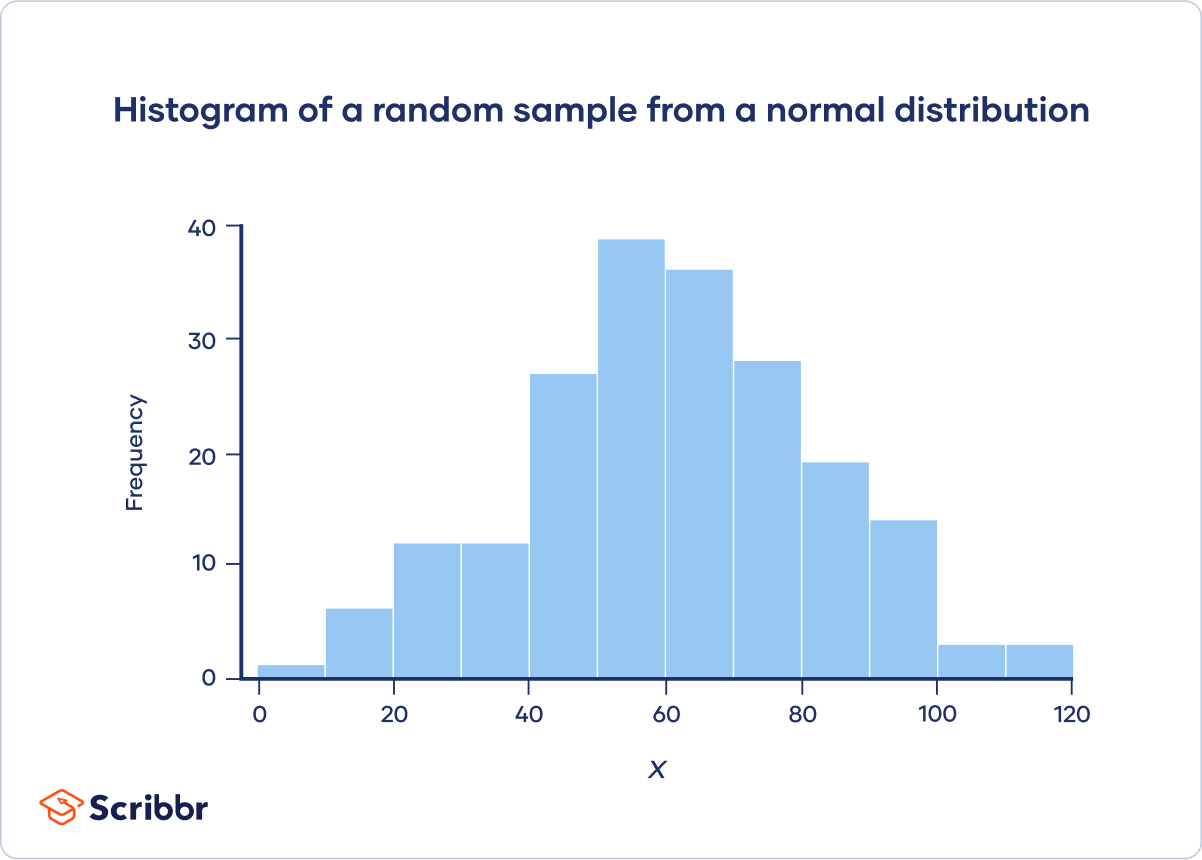

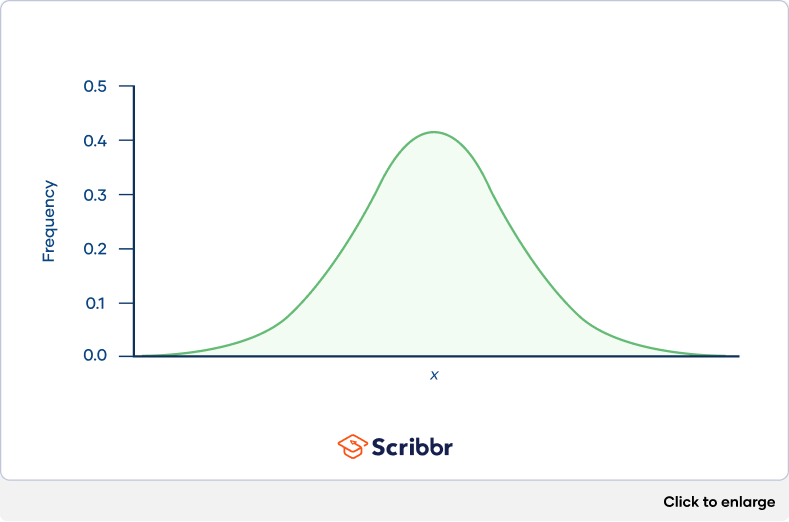

Categorical variables can be described by a frequency distribution. Quantitative variables can also be described by a frequency distribution, but first they need to be grouped into interval classes .

A histogram is an effective way to tell if a frequency distribution appears to have a normal distribution .

Plot a histogram and look at the shape of the bars. If the bars roughly follow a symmetrical bell or hill shape, like the example below, then the distribution is approximately normally distributed.

You can use the CHISQ.INV.RT() function to find a chi-square critical value in Excel.

For example, to calculate the chi-square critical value for a test with df = 22 and α = .05, click any blank cell and type:

=CHISQ.INV.RT(0.05,22)

You can use the qchisq() function to find a chi-square critical value in R.

For example, to calculate the chi-square critical value for a test with df = 22 and α = .05:

qchisq(p = .05, df = 22, lower.tail = FALSE)

You can use the chisq.test() function to perform a chi-square test of independence in R. Give the contingency table as a matrix for the “x” argument. For example:

m = matrix(data = c(89, 84, 86, 9, 8, 24), nrow = 3, ncol = 2)

chisq.test(x = m)

You can use the CHISQ.TEST() function to perform a chi-square test of independence in Excel. It takes two arguments, CHISQ.TEST(observed_range, expected_range), and returns the p value.

Chi-square goodness of fit tests are often used in genetics. One common application is to check whether two genes are linked (i.e., whether they fail to assort independently). When genes are linked, the allele inherited for one gene affects the allele inherited for another gene.

Suppose that you want to know if the genes for pea texture (R = round, r = wrinkled) and color (Y = yellow, y = green) are linked. You perform a dihybrid cross between two heterozygous ( RY / ry ) pea plants. The hypotheses you’re testing with your experiment are:

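- Null hypothesis (H 0 ): The offspring have an equal probability of inheriting all possible genotypic combinations. This would suggest that the genes are unlinked.

- Alternative hypothesis (H a ): The offspring do not have an equal probability of inheriting all possible genotypic combinations. This would suggest that the genes are linked.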

You observe 100 peas:

- 78 round and yellow peas

- 6 round and green peas

- 4 wrinkled and yellow peas

- 12 wrinkled and green peas

Step 1: Calculate the expected frequencies

To calculate the expected values, you can make a Punnett square. If the two genes are unlinked, the probability of each genotypic combination is equal.

| | RY | ry | Ry | rY |
|---|---|---|---|---|
| RY | RRYY | RrYy | RRYy | RrYY |
| ry | RrYy | rryy | Rryy | rrYy |
| Ry | RRYy | Rryy | RRyy | RrYy |
| rY | RrYY | rrYy | RrYy | rrYY |

The expected phenotypic ratios are therefore 9 round and yellow: 3 round and green: 3 wrinkled and yellow: 1 wrinkled and green.

From this, you can calculate the expected phenotypic frequencies for 100 peas:

| Phenotype | Observed | Expected |
|---|---|---|
| Round and yellow | 78 | 100 * (9/16) = 56.25 |
| Round and green | 6 | 100 * (3/16) = 18.75 |
| Wrinkled and yellow | 4 | 100 * (3/16) = 18.75 |
| Wrinkled and green | 12 | 100 * (1/16) = 6.25 |

Step 2: Calculate chi-square

| Phenotype | Observed (O) | Expected (E) | O − E | (O − E)² | (O − E)² / E |
|---|---|---|---|---|---|
| Round and yellow | 78 | 56.25 | 21.75 | 473.06 | 8.41 |
| Round and green | 6 | 18.75 | −12.75 | 162.56 | 8.67 |
| Wrinkled and yellow | 4 | 18.75 | −14.75 | 217.56 | 11.60 |
| Wrinkled and green | 12 | 6.25 | 5.75 | 33.06 | 5.29 |

Χ 2 = 8.41 + 8.67 + 11.60 + 5.29 = 33.97

Step 3: Find the critical chi-square value

Since there are four groups (round and yellow, round and green, wrinkled and yellow, wrinkled and green), there are three degrees of freedom .

For a test of significance at α = .05 and df = 3, the Χ 2 critical value is 7.82.

Step 4: Compare the chi-square value to the critical value

Χ 2 = 33.97

Critical value = 7.82

The Χ 2 value is greater than the critical value .

Step 5: Decide whether to reject the null hypothesis

The Χ 2 value is greater than the critical value, so we reject the null hypothesis that the population of offspring have an equal probability of inheriting all possible genotypic combinations. There is a significant difference between the observed and expected genotypic frequencies ( p < .05).

The data supports the alternative hypothesis that the offspring do not have an equal probability of inheriting all possible genotypic combinations, which suggests that the genes are linked.
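The five steps of this worked example can be reproduced with a short Python sketch. The observed counts, the 9:3:3:1 ratio, and the critical value of 7.82 are taken from the example; the function name `chi_square_gof` and the dictionary layout are illustrative choices. Expected counts are kept unrounded (for instance, 100 × 1/16 = 6.25 for wrinkled and green peas).

```python
def chi_square_gof(observed: dict, ratio: dict) -> float:
    """Chi-square goodness of fit statistic: sum of (O - E)^2 / E."""
    n = sum(observed.values())
    ratio_total = sum(ratio.values())
    statistic = 0.0
    for category, obs in observed.items():
        expected = n * ratio[category] / ratio_total  # Step 1: expected count
        statistic += (obs - expected) ** 2 / expected  # Step 2: add this term
    return statistic


observed = {"round yellow": 78, "round green": 6,
            "wrinkled yellow": 4, "wrinkled green": 12}
ratio = {"round yellow": 9, "round green": 3,
         "wrinkled yellow": 3, "wrinkled green": 1}

chi2 = chi_square_gof(observed, ratio)
CRITICAL_VALUE = 7.82  # Step 3: alpha = .05, df = 3 (from the example)

# Steps 4 and 5: compare the statistic with the critical value and decide.
reject_h0 = chi2 > CRITICAL_VALUE
print(f"chi-square = {chi2:.2f}, reject H0: {reject_h0}")
```

The statistic comes out at roughly 34, far above the critical value, which matches the conclusion that the null hypothesis is rejected.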

You can use the chisq.test() function to perform a chi-square goodness of fit test in R. Give the observed values in the “x” argument, give the expected values in the “p” argument, and set “rescale.p” to true. For example:

chisq.test(x = c(22,30,23), p = c(25,25,25), rescale.p = TRUE)

You can use the CHISQ.TEST() function to perform a chi-square goodness of fit test in Excel. It takes two arguments, CHISQ.TEST(observed_range, expected_range), and returns the p value .

Both correlations and chi-square tests can test for relationships between two variables. However, a correlation is used when you have two quantitative variables and a chi-square test of independence is used when you have two categorical variables.

Both chi-square tests and t tests can test for differences between two groups. However, a t test is used when you have a dependent quantitative variable and an independent categorical variable (with two groups). A chi-square test of independence is used when you have two categorical variables.

The two main chi-square tests are the chi-square goodness of fit test and the chi-square test of independence .

A chi-square distribution is a continuous probability distribution . The shape of a chi-square distribution depends on its degrees of freedom , k . The mean of a chi-square distribution is equal to its degrees of freedom ( k ) and the variance is 2 k . The range is 0 to ∞.

As the degrees of freedom ( k ) increase, the chi-square distribution goes from a downward curve to a hump shape. As the degrees of freedom increase further, the hump goes from being strongly right-skewed to being approximately normal.
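The stated mean ( k ) and variance (2 k ) can be checked by simulation. The sketch below relies on a standard fact that is not stated in the text above: a chi-square variable with k degrees of freedom is the sum of k squared independent standard normal variables. The function name, seed, and number of draws are arbitrary choices.

```python
import random
from statistics import fmean, pvariance


def simulate_chi_square(k: int, n_draws: int, seed: int = 0) -> list:
    """Draw chi-square(k) values as sums of k squared standard normals."""
    rng = random.Random(seed)
    return [
        sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(k))
        for _ in range(n_draws)
    ]


draws = simulate_chi_square(k=4, n_draws=20_000)

# For k = 4 the sample mean should be near 4 and the variance near 8.
print(f"mean = {fmean(draws):.2f}, variance = {pvariance(draws):.2f}")
print(f"smallest draw = {min(draws):.4f}")  # values are never negative
```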

To find the quartiles of a probability distribution, you can use the distribution’s quantile function.

You can use the quantile() function to find quartiles in R. If your data is called “data”, then “quantile(data, probs=c(.25,.5,.75), type=1)” will return the three quartiles.

You can use the QUARTILE() function to find quartiles in Excel. If your data is in column A, then click any blank cell and type “=QUARTILE(A:A,1)” for the first quartile, “=QUARTILE(A:A,2)” for the second quartile, and “=QUARTILE(A:A,3)” for the third quartile.

You can use the PEARSON() function to calculate the Pearson correlation coefficient in Excel. If your variables are in columns A and B, then click any blank cell and type “=PEARSON(A:A,B:B)”.

There is no function to directly test the significance of the correlation.

You can use the cor() function to calculate the Pearson correlation coefficient in R. To test the significance of the correlation, you can use the cor.test() function.

You should use the Pearson correlation coefficient when (1) the relationship is linear and (2) both variables are quantitative and (3) normally distributed and (4) have no outliers.

The Pearson correlation coefficient ( r ) is the most common way of measuring a linear correlation. It is a number between –1 and 1 that measures the strength and direction of the relationship between two variables.
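To make the definition concrete, here is a minimal Python sketch of the usual formula for r : the sum of the products of deviations from the means, divided by the square root of the product of the two sums of squared deviations. The height and shoe-size values are invented for illustration, and `pearson_r` is a hypothetical helper name.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical data (an assumption): heights in cm and shoe sizes.
heights_cm = [160, 165, 170, 175, 180]
shoe_sizes = [37, 39, 40, 42, 44]

r = pearson_r(heights_cm, shoe_sizes)
print(f"r = {r:.3f}")  # close to 1: a strong positive linear relationship
```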

This table summarizes the most important differences between normal distributions and Poisson distributions :

| Characteristic | Normal | Poisson |
|---|---|---|
| Continuous or discrete | Continuous | Discrete |
| Parameter | Mean (µ) and standard deviation (σ) | Lambda (λ) |
| Shape | Bell-shaped | Depends on λ |
| Symmetry | Symmetrical | Asymmetrical (right-skewed). As λ increases, the asymmetry decreases. |
| Range | −∞ to ∞ | 0 to ∞ |

When the mean of a Poisson distribution is large (>10), it can be approximated by a normal distribution.

In the Poisson distribution formula, lambda (λ) is the mean number of events within a given interval of time or space. For example, λ = 0.748 floods per year.

The e in the Poisson distribution formula stands for the number 2.718 (rounded to three decimal places). This number is called Euler’s number. You can simply substitute e with 2.718 when you’re calculating a Poisson probability. Euler’s number is a very useful number and is especially important in calculus.
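The Poisson formula itself is not reproduced above, so the sketch below assumes its standard form, P( X = k ) = λ^k · e^(−λ) / k !, and applies it to the λ = 0.748 floods per year example. The function name `poisson_pmf` is an illustrative choice, and Python's `math.exp` supplies e to full precision rather than the rounded 2.718.

```python
from math import exp, factorial


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for a Poisson distribution with mean lam."""
    return lam**k * exp(-lam) / factorial(k)


LAMBDA_FLOODS = 0.748  # mean number of floods per year (from the example)

# Probability of 0, 1, 2 or 3 floods in a given year.
for k in range(4):
    print(k, round(poisson_pmf(k, LAMBDA_FLOODS), 4))
```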

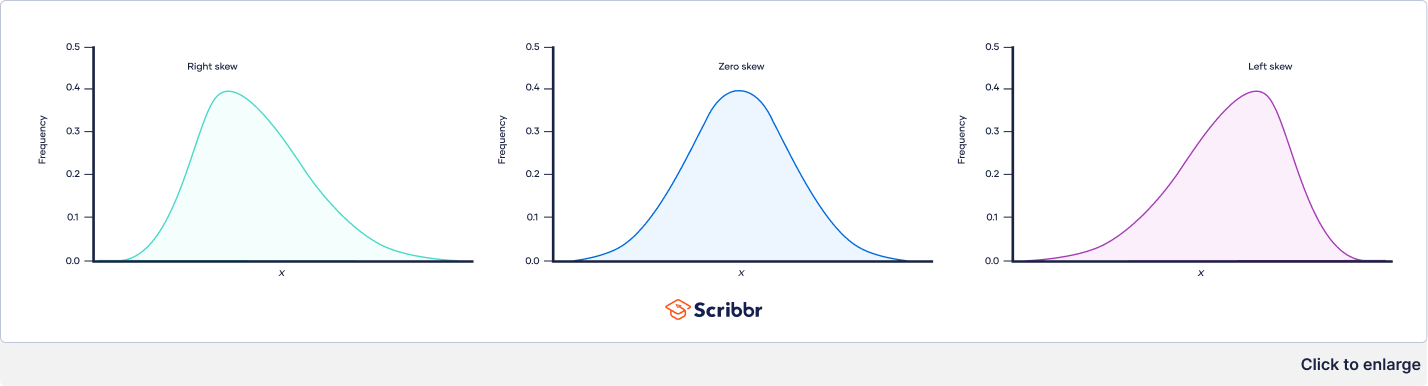

The three types of skewness are:

- Right skew (also called positive skew). A right-skewed distribution is longer on the right side of its peak than on its left.

- Left skew (also called negative skew). A left-skewed distribution is longer on the left side of its peak than on its right.

- Zero skew. It is symmetrical and its left and right sides are mirror images.

Skewness and kurtosis are both important measures of a distribution’s shape.

- Skewness measures the asymmetry of a distribution.

- Kurtosis measures the heaviness of a distribution’s tails relative to a normal distribution.
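To make the two measures concrete, here is a minimal Python sketch based on central moments. It assumes the population (moment-based) definitions, which are only one of several conventions; statistical packages often apply small-sample corrections, so their output may differ. All function names are hypothetical.

```python
def central_moment(data, k):
    """k-th central moment: the average of (value - mean) ** k."""
    mean = sum(data) / len(data)
    return sum((v - mean) ** k for v in data) / len(data)

def skewness(data):
    """Positive for right skew, negative for left skew, zero for symmetry."""
    return central_moment(data, 3) / central_moment(data, 2) ** 1.5

def excess_kurtosis(data):
    """Kurtosis minus 3, so that a normal distribution scores 0."""
    return central_moment(data, 4) / central_moment(data, 2) ** 2 - 3

symmetric = [1, 2, 3, 4, 5]
right_skewed = [1, 1, 1, 2, 10]

print(skewness(symmetric))      # 0.0 (zero skew)
print(skewness(right_skewed))   # positive (right skew)
print(excess_kurtosis(symmetric))
```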

A research hypothesis is your proposed answer to your research question. The research hypothesis usually includes an explanation (“ x affects y because …”).

A statistical hypothesis, on the other hand, is a mathematical statement about a population parameter. Statistical hypotheses always come in pairs: the null and alternative hypotheses. In a well-designed study, the statistical hypotheses correspond logically to the research hypothesis.

The alternative hypothesis is often abbreviated as H a or H 1 . When the alternative hypothesis is written using mathematical symbols, it always includes an inequality symbol (usually ≠, but sometimes < or >).

The null hypothesis is often abbreviated as H 0 . When the null hypothesis is written using mathematical symbols, it always includes an equality symbol (usually =, but sometimes ≥ or ≤).

The t distribution was first described by statistician William Sealy Gosset under the pseudonym “Student.”

To calculate a confidence interval of a mean using the critical value of t, follow these four steps:

- Choose the significance level based on your desired confidence level. The most common confidence level is 95%, which corresponds to α = .05 in the two-tailed t table.

- Find the critical value of t in the two-tailed t table.

- Multiply the critical value of t by s/√n, where s is the sample standard deviation and n is the sample size.

- Add this value to the mean to calculate the upper limit of the confidence interval, and subtract this value from the mean to calculate the lower limit.
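The four steps above can be sketched in Python as follows. The summary statistics are invented for illustration, and the critical value 2.064 is the two-tailed t table entry for α = .05 with 24 degrees of freedom (n − 1); the function name is hypothetical.

```python
import math

def t_confidence_interval(mean, s, n, t_crit):
    """Confidence interval for a mean, given a critical t from a t table."""
    margin = t_crit * s / math.sqrt(n)   # step 3: critical t times s / sqrt(n)
    return mean - margin, mean + margin  # step 4: lower and upper limits

# Hypothetical sample: mean 10, standard deviation 2, 25 observations.
# Steps 1-2: 95% confidence -> alpha = .05, df = 24 -> critical t = 2.064
lower, upper = t_confidence_interval(mean=10.0, s=2.0, n=25, t_crit=2.064)

print(f"95% CI: [{lower:.4f}, {upper:.4f}]")
```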

To test a hypothesis using the critical value of t, follow these four steps:

- Calculate the t value for your sample.

- Find the critical value of t in the t table.

- Determine if the (absolute) t value is greater than the critical value of t.

- Reject the null hypothesis if the sample’s (absolute) t value is greater than the critical value of t. Otherwise, don’t reject the null hypothesis.
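As an illustration of these steps, the sketch below assumes a one-sample t test (the steps themselves apply to other t tests too). The data, the hypothesized mean `mu0`, and the function name are hypothetical; the critical value 2.776 is the two-tailed t table entry for α = .05 with 4 degrees of freedom.

```python
import math
import statistics

def one_sample_t(data, mu0):
    """t value for testing whether the population mean equals mu0."""
    n = len(data)
    standard_error = statistics.stdev(data) / math.sqrt(n)
    return (statistics.mean(data) - mu0) / standard_error

sample = [2, 4, 6, 8, 10]              # hypothetical measurements
t_value = one_sample_t(sample, mu0=3)  # step 1: t value for the sample
t_crit = 2.776                         # step 2: looked up in a t table (df = 4)

# Steps 3-4: compare the absolute t value with the critical value
reject_null = abs(t_value) > t_crit
print(round(t_value, 3), "reject H0" if reject_null else "fail to reject H0")
```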

You can use the T.INV() function to find the critical value of t for one-tailed tests in Excel, and you can use the T.INV.2T() function for two-tailed tests.

You can use the qt() function to find the critical value of t in R. The function gives the critical value of t for the one-tailed test. If you want the critical value of t for a two-tailed test, divide the significance level by two.

You can use the RSQ() function to calculate R² in Excel. If your dependent variable is in column A and your independent variable is in column B, then click any blank cell and type “=RSQ(A:A,B:B)”.

You can use the summary() function to view the R² of a linear model in R. You will see the “R-squared” near the bottom of the output.

There are two formulas you can use to calculate the coefficient of determination (R²) of a simple linear regression.
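The formulas are not listed in this answer. A commonly used pair for simple linear regression, assumed in the sketch below, is squaring the Pearson correlation coefficient (R² = r²) and using the sums of squares (R² = 1 − RSS/TSS). The data are hypothetical.

```python
import math

x = [1, 2, 3, 4, 5]  # hypothetical independent variable
y = [2, 4, 5, 4, 5]  # hypothetical dependent variable

n = len(x)
mean_x, mean_y = sum(x) / n, sum(y) / n
sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
sxx = sum((a - mean_x) ** 2 for a in x)
tss = sum((b - mean_y) ** 2 for b in y)  # total sum of squares

# Formula 1 (assumed): square the Pearson correlation coefficient
r = sxy / math.sqrt(sxx * tss)
r2_from_r = r ** 2

# Formula 2 (assumed): 1 - RSS / TSS, using the least-squares line
slope = sxy / sxx
intercept = mean_y - slope * mean_x
rss = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
r2_from_fit = 1 - rss / tss

print(round(r2_from_r, 3), round(r2_from_fit, 3))  # both 0.6
```

For a simple linear regression fitted by least squares, the two routes give the same value.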

The coefficient of determination (R²) is a number between 0 and 1 that measures how well a statistical model predicts an outcome. You can interpret the R² as the proportion of variation in the dependent variable that is predicted by the statistical model.

There are three main types of missing data .

Missing completely at random (MCAR) data are randomly distributed across the variable and unrelated to other variables .

Missing at random (MAR) data are not randomly distributed but they are accounted for by other observed variables.

Missing not at random (MNAR) data systematically differ from the observed values.

To tidy up your missing data , your options usually include accepting, removing, or recreating the missing data.

- Acceptance: You leave your data as is

- Listwise or pairwise deletion: You delete all cases (participants) with missing data from analyses

- Imputation: You use other data to fill in the missing data
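Two of these options can be sketched in plain Python. The example assumes missing values are stored as `None`, shows listwise deletion only, and uses mean imputation as one simple (and not always appropriate) way of filling in values; the records and the helper name `impute_mean` are hypothetical.

```python
import statistics

# Hypothetical participants; None marks a missing value
rows = [
    {"age": 25, "score": 80},
    {"age": None, "score": 90},
    {"age": 35, "score": None},
    {"age": 45, "score": 70},
]

# Listwise deletion: keep only cases with no missing values
complete_rows = [row for row in rows if None not in row.values()]

def impute_mean(rows, column):
    """Return copies of rows with missing values in column replaced by its observed mean."""
    observed = [row[column] for row in rows if row[column] is not None]
    fill = statistics.mean(observed)
    return [{**row, column: fill if row[column] is None else row[column]} for row in rows]

# Imputation: fill each column's gaps with that column's mean
imputed = impute_mean(impute_mean(rows, "age"), "score")

print(len(complete_rows), "complete cases")
print(imputed)
```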

Missing data are important because, depending on the type, they can sometimes bias your results. This means your results may not be generalizable outside of your study because your data come from an unrepresentative sample .

Missing data , or missing values, occur when you don’t have data stored for certain variables or participants.

In any dataset, there’s usually some missing data. In quantitative research , missing values appear as blank cells in your spreadsheet.

There are two steps to calculating the geometric mean :

- Multiply all values together to get their product.

- Find the n th root of the product ( n is the number of values).

Before calculating the geometric mean, note that:

- The geometric mean can only be found for positive values.

- If any value in the data set is zero, the geometric mean is zero.
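The two steps, together with the notes above, can be sketched in Python like this. The function name and the example values are hypothetical, and `math.prod` requires Python 3.8 or later.

```python
import math

def geometric_mean(values):
    """n-th root of the product of n non-negative values."""
    if any(v < 0 for v in values):
        raise ValueError("the geometric mean is not defined for negative values")
    product = math.prod(values)           # step 1: multiply all values together
    return product ** (1 / len(values))   # step 2: take the n-th root

# Hypothetical yearly growth factors (+10%, +20%, -5%)
growth_factors = [1.10, 1.20, 0.95]
print(round(geometric_mean(growth_factors), 4))

print(geometric_mean([0, 5, 7]))  # 0.0, because one value is zero
```

For very long lists the product can overflow or underflow, in which case averaging logarithms is a common alternative.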

The arithmetic mean is the most commonly used type of mean and is often referred to simply as “the mean.” While the arithmetic mean is based on adding and dividing values, the geometric mean multiplies and finds the root of values.

Even though the geometric mean is a less common measure of central tendency , it’s more accurate than the arithmetic mean for percentage change and positively skewed data. The geometric mean is often reported for financial indices and population growth rates.

The geometric mean is an average that multiplies all values and takes a root of their product. For a dataset with n numbers, you find the n th root of their product.

Outliers are extreme values that differ from most values in the dataset. You find outliers at the extreme ends of your dataset.

It’s best to remove outliers only when you have a sound reason for doing so.

Some outliers represent natural variations in the population , and they should be left as is in your dataset. These are called true outliers.

Other outliers are problematic and should be removed because they represent measurement errors , data entry or processing errors, or poor sampling.

You can choose from four main ways to detect outliers :

- Sorting your values from low to high and checking minimum and maximum values

- Visualizing your data with a box plot and looking for outliers

- Using the interquartile range to create fences for your data

- Using statistical procedures to identify extreme values
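The interquartile range method can be sketched in Python as below. The multiplier of 1.5 is the conventional choice rather than something stated here, so it is exposed as a parameter; the function name and data are hypothetical, and quartile conventions vary between tools.

```python
import statistics

def iqr_outliers(data, multiplier=1.5):
    """Return the values that fall outside the IQR fences."""
    q1, _, q3 = statistics.quantiles(data, n=4, method="inclusive")
    iqr = q3 - q1
    lower_fence = q1 - multiplier * iqr
    upper_fence = q3 + multiplier * iqr
    return [v for v in data if v < lower_fence or v > upper_fence]

values = [2, 4, 4, 5, 7, 9, 10, 12, 40]  # hypothetical measurements
outliers = iqr_outliers(values)
print(outliers)  # [40]
```

Flagging a value this way only marks it for inspection; whether to remove it still depends on why it is extreme.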

Outliers can have a big impact on your statistical analyses and skew the results of any hypothesis test if they are inaccurate.

These extreme values can impact your statistical power as well, making it hard to detect a true effect if there is one.

No, the steepness or slope of the line isn’t related to the correlation coefficient value. The correlation coefficient only tells you how closely your data fit on a line, so two datasets with the same correlation coefficient can have very different slopes.

To find the slope of the line, you’ll need to perform a regression analysis .

Correlation coefficients always range between -1 and 1.

The sign of the coefficient tells you the direction of the relationship: a positive value means the variables change together in the same direction, while a negative value means they change together in opposite directions.

The absolute value of a number is equal to the number without its sign. The absolute value of a correlation coefficient tells you the magnitude of the correlation: the greater the absolute value, the stronger the correlation.

These are the assumptions your data must meet if you want to use Pearson’s r :

- Both variables are on an interval or ratio level of measurement

- Data from both variables follow normal distributions

- Your data have no outliers

- Your data are from a random or representative sample

- You expect a linear relationship between the two variables

A correlation coefficient is a single number that describes the strength and direction of the relationship between your variables.

Different types of correlation coefficients might be appropriate for your data based on their levels of measurement and distributions . The Pearson product-moment correlation coefficient (Pearson’s r ) is commonly used to assess a linear relationship between two quantitative variables.

There are various ways to improve power:

- Increase the potential effect size by manipulating your independent variable more strongly,

- Increase sample size,

- Increase the significance level (alpha),

- Reduce measurement error by increasing the precision and accuracy of your measurement devices and procedures,

- Use a one-tailed test instead of a two-tailed test for t tests and z tests.

A power analysis is a calculation that helps you determine a minimum sample size for your study. It’s made up of four main components. If you know or have estimates for any three of these, you can calculate the fourth component.

- Statistical power: the likelihood that a test will detect an effect of a certain size if there is one, usually set at 80% or higher.

- Sample size: the minimum number of observations needed to observe an effect of a certain size with a given power level.

- Significance level (alpha): the maximum risk of rejecting a true null hypothesis that you are willing to take, usually set at 5%.

- Expected effect size: a standardized way of expressing the magnitude of the expected result of your study, usually based on similar studies or a pilot study.
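To show how three components determine the fourth, the sketch below solves for sample size. It assumes a two-sided comparison of two independent group means, an effect size expressed as Cohen’s d, and a normal approximation (n per group = 2(z₁₋α/₂ + z_power)² / d²); dedicated power-analysis software uses the t distribution and typically returns a slightly larger number. The function name is hypothetical.

```python
import math
from statistics import NormalDist

def sample_size_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate observations needed in each of two groups (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_power = NormalDist().inv_cdf(power)          # quantile matching the desired power
    n = 2 * ((z_alpha + z_power) / effect_size) ** 2
    return math.ceil(n)  # round up to a whole participant

# Medium expected effect (d = 0.5), 5% significance level, 80% power
n_per_group = sample_size_per_group(effect_size=0.5)
print(n_per_group)  # 63
```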

Statistical analysis is the main method for analyzing quantitative research data . It uses probabilities and models to test predictions about a population from sample data.

The risk of making a Type II error is inversely related to the statistical power of a test. Power is the extent to which a test can correctly detect a real effect when there is one.

To (indirectly) reduce the risk of a Type II error, you can increase the sample size or the significance level to increase statistical power.

The risk of making a Type I error is the significance level (or alpha) that you choose. That’s a threshold that you set at the beginning of your study and later compare with the p value, the probability of obtaining results at least as extreme as yours if the null hypothesis is true.

The significance level is usually set at 0.05 or 5%. This means that you only reject the null hypothesis when your results would have a 5% chance of occurring, or less, if the null hypothesis were actually true.

To reduce the Type I error probability, you can set a lower significance level.

In statistics, a Type I error means rejecting the null hypothesis when it’s actually true, while a Type II error means failing to reject the null hypothesis when it’s actually false.

In statistics, power refers to the likelihood of a hypothesis test detecting a true effect if there is one. A statistically powerful test is less likely to produce a false negative (a Type II error).

If you don’t ensure enough power in your study, you may not be able to detect a statistically significant result even when it has practical significance. Your study might not have the ability to answer your research question.

While statistical significance shows that an effect exists in a study, practical significance shows that the effect is large enough to be meaningful in the real world.

Statistical significance is denoted by p values, whereas practical significance is represented by effect sizes.

There are dozens of measures of effect sizes. The most common effect sizes are Cohen’s d and Pearson’s r. Cohen’s d measures the size of the difference between two groups, while Pearson’s r measures the strength of the relationship between two variables.
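As an illustration, Cohen’s d for two independent groups can be sketched in Python. This version assumes the pooled-standard-deviation form of d; the function name and the group data are hypothetical.

```python
import math
import statistics

def cohens_d(group1, group2):
    """Difference between two group means in units of their pooled standard deviation."""
    n1, n2 = len(group1), len(group2)
    var1 = statistics.variance(group1)  # sample variances (n - 1 denominator)
    var2 = statistics.variance(group2)
    pooled_sd = math.sqrt(((n1 - 1) * var1 + (n2 - 1) * var2) / (n1 + n2 - 2))
    return (statistics.mean(group1) - statistics.mean(group2)) / pooled_sd

treatment = [2, 4, 6, 8, 10]  # hypothetical scores
control = [1, 3, 5, 7, 9]

d = cohens_d(treatment, control)
print(round(d, 3))  # 0.316
```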

Effect size tells you how meaningful the relationship between variables or the difference between groups is.

A large effect size means that a research finding has practical significance, while a small effect size indicates limited practical applications.

Using descriptive and inferential statistics , you can make two types of estimates about the population : point estimates and interval estimates.

- A point estimate is a single value estimate of a parameter . For instance, a sample mean is a point estimate of a population mean.

- An interval estimate gives you a range of values where the parameter is expected to lie. A confidence interval is the most common type of interval estimate.

Both types of estimates are important for gathering a clear idea of where a parameter is likely to lie.