Data Science Journal

Research Papers

Black hole clustering: gravity-based approach with no predetermined parameters.

- Belal K. ELFarra

- Mamoun A. A. Salaha

- Wesam M. Ashour

Clustering is a fundamental technique in data mining and machine learning, aiming to group data elements into related clusters. However, traditional clustering algorithms, such as K-means, suffer from limitations such as the need for user-defined parameters and sensitivity to initial conditions.

This paper introduces a novel clustering algorithm called Black Hole Clustering (BHC), which leverages the concept of gravity to identify clusters. Inspired by the behavior of masses in the physical world, gravity-based clustering treats data points as mass points that attract each other based on distance. This approach enables the detection of high-density clusters of arbitrary shapes and sizes without the need for predefined parameters. We extensively evaluate BHC on synthetic and real-world datasets, demonstrating its effectiveness in handling complex data structures and varying point densities. Notably, BHC excels in accurate prediction of the number of clusters and achieves competitive clustering accuracy rates. Moreover, its parameter-free nature enhances clustering accuracy, robustness, and scalability. These findings represent a significant contribution to advanced clustering techniques and pave the way for further research and application of gravity-based clustering in diverse fields. BHC offers a promising approach to addressing clustering challenges in complex datasets, opening up new possibilities for improved data analysis and pattern discovery.

- gravity-based clustering

- density-based clustering

- machine learning

- data mining

I. Introduction

Clustering is a data analysis technique used to group similar data points together based on certain features or characteristics. It is used for pattern recognition, data compression, anomaly detection, recommendation systems, image segmentation, customer segmentation, and genomics analysis. However, it faces challenges, such as selecting the right number of clusters, handling irregular cluster shapes, scalability issues, sensitivity to outliers, and the need for appropriate evaluation methods. Researchers continually work on improving clustering algorithms to address these challenges and make clustering more effective for various applications.

Commonly employed clustering algorithms such as K-means and expectation maximization (EM) depend on user-defined parameters and are sensitive to initial conditions. In high-dimensional data, determining the optimal number of clusters (k) for K-means is particularly difficult ( Cai et al. 2023 ; Ghazal et al. 2021 ).

To overcome these limitations, density-based clustering techniques ( Ester et al. 1996 ) have emerged, defining clusters as regions of high density separated by regions of low density. Among these techniques, gravity-based clustering stands out as a variant that exploits the concept of gravity to detect clusters. By treating data points as mass points that attract each other based on distance, gravity-based clustering forms high-density clusters where points are closely related ( Huang et al. 2019 ; Kuwil et al. 2020 ).

The inspiration for gravity-based clustering stems from the role of gravity in the physical world, where it governs the behavior of masses in the universe ( Cadiou et al. 2020 ). Researchers have leveraged this concept to develop innovative clustering algorithms capable of effectively identifying clusters in diverse datasets. Gravity-based clustering finds applications in various fields, including time series analysis, astrophysics, and data mining ( Jankowiak et al. 2017 ), enabling the identification of clusters with arbitrary shapes and sizes, making it particularly valuable for datasets characterized by varying point densities.

This paper introduces the Black Hole Clustering (BHC) algorithm, a novel approach that harnesses the concept of gravity to cluster unlabeled datasets and autonomously predict the number of clusters, eliminating the need for predefined parameters. BHC demonstrates robust performance in predicting cluster numbers and consistently achieves competitive accuracy rates. Comparative evaluations against established clustering methods consistently highlight BHC’s superiority across various scenarios. When applied to real-world datasets from diverse domains, BHC consistently proves its effectiveness, emphasizing its reliability and potential for further research and practical applications. This contribution advances the field of clustering algorithms capable of handling complex data structures.

The remaining sections of this paper are organized as follows: The next section is dedicated to the literature review, where we begin with a discussion on the statement of the problem, followed by an exploration of related works. Subsequently, we delve into the methodology, starting with the proposed idea and then presenting the proposed algorithm. The experiments and results sections showcase the outcomes of our research. Finally, we conclude the paper by summarizing the key findings and contributions of our study, along with highlighting potential directions for future research.

II. Literature Review

A. Statement of the Problem

The problem addressed in this research is the development of a clustering approach that does not rely on predetermined parameters and can identify clusters with non-linear boundaries. The proposed solution uses the concept of black holes to model the clustering of data points. The goal is to determine the number of clusters and to assign every data point to a cluster without using any parameters. Identifying the cluster centers is a significant challenge; we propose the data points with maximum density as the cluster centroids. The objective function evaluates the contribution of every variable to the clustering, and the centroids are relocated to find the optimal grouping, such that the data points within a cluster are closest to their centroid.

B. Related Works

In the field of clustering, Xu and Wunsch ( Xu & Wunsch II 2005 ) provided a comprehensive review of various algorithms that aim to identify clusters in data. These algorithms can be classified into distribution-based, hierarchical-based, density-based, and grid-based approaches, with the choice depending on the characteristics and prior knowledge of the dataset ( Xu et al. 2016 ; Liu et al. 2007 ; Liu & Hou 2016 ; Louhichi et al. 2017 ). However, the challenge arises when dealing with big data, which is often heterogeneous and challenging to exploit.

Clustering methods offer a promising solution to tackle the complexities of big data. Density-based clustering methods, in particular, are widely used because they can handle large databases and cope with noisy data ( Ester et al. 1996 ; Hai-Feng et al. 2023 ). One such algorithm is DBSCAN, which has been extended to include variants such as OPTICS, ST-DBSCAN, and MR-DBSCAN ( Ankerst et al. 1999 ; Birant & Kut 2007 ; He et al. 2011 ). While these methods perform well on spatial data, they have limitations when applied to high-dimensional data. Subspace clustering algorithms, like DENCLUE and CLIQUE, address this issue by detecting clusters within low-dimensional subspaces of high-dimensional data ( Hinneburg & Keim 1998 ; Agrawal et al. 1998 ). However, DENCLUE suffers from slow execution time because its hill-climbing method converges slowly to local maxima.

Several new clustering algorithms have been proposed to address the limitations of existing methods. The Multi-Elitist PSO algorithm combines particle swarm optimization with clustering ( Das et al. 2008 ), while PSO-Km integrates PSO with the K-means method ( Dhawan & Dai 2018 ). An improved method ( Elfarra et al. 2013 ) uses the concept of gravity to discover clusters in data, where each data point is attracted to the closest point with higher gravity. However, this method requires the specification of two predetermined parameters.

Another notable clustering algorithm is Density Peak Clustering (DPC), which identifies cluster centers based on their density and assigns points to clusters accordingly ( Rodriguez & Laio 2014 ). Several improved versions of DPC, such as MDPC, PPC, FDP Cluster, and DPCG, have been proposed ( Cai et al. 2018 ; Ni et al. 2019 ; Yan et al. 2016 ; Xu et al. 2016 ). However, these algorithms tend to select high-density points as initial cluster centers, which may lead to incorrect assignments or treat low-density points as noise.

The Shared Nearest Neighbors (SNN) algorithm addresses the issue of multiple-density clusters by considering the number of shared neighbors between objects ( Jarvis & Patrick 1973 ). However, identifying clusters without significant separation zones may not be accurate, as the k-nearest neighbors based on distance may not be at the same level as the object ( Ertöz et al. 2003 ). Although the SNN and DPC algorithms have been integrated into SNN-DPC, accurately identifying clusters without evident separation zones remains a challenge, and user input regarding the number of clusters or center points is often required ( Liu et al. 2018 ).

In summary, the field of clustering algorithms offers various approaches to address the challenges posed by big data. From density-based methods like DBSCAN and OPTICS to subspace clustering algorithms like DENCLUE and CLIQUE, each approach has its strengths and limitations ( Chen et al. 2022 ). Newer algorithms, such as Multi-Elitist PSO, PSO-Km, and gravity-based methods, strive to improve clustering performance. However, accurately identifying clusters without evident separation zones and the need for user-specified parameters remain ongoing challenges in the field.

III. Methodology

A. Proposed Idea

Our clustering approach utilizes the concept of black holes to model data points, akin to the gravitational force exerted by black holes in space. In our approach, we designate prototypes as black holes that attract nearby data points. Each data point generates gravity for each link between itself and any data point that is identified as its nearest neighbor. By selecting prototypes with the highest gravity, we attract the nearest data points, which, in turn, pull their nearest data points towards the prototypes, resulting in the formation of clusters.

The gravity of a data point X is determined by the number of data points Y that consider X as their nearest neighbor. Our objective is to determine the optimal number of clusters and classify each data point without relying on predefined parameters.
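The gravity definition above can be illustrated with a minimal NumPy sketch. The function name `gravity` and the four sample points are hypothetical and chosen only for illustration; they are not taken from the authors' implementation.

```python
import numpy as np

def gravity(points: np.ndarray) -> np.ndarray:
    """Count, for every point, how many other points name it as nearest neighbor."""
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))
    np.fill_diagonal(dist, np.inf)      # a point is never its own neighbor
    nearest = dist.argmin(axis=1)       # index of each point's nearest neighbor
    return np.bincount(nearest, minlength=len(points))

# Illustrative data (an assumption): point 0 sits in the middle of three others.
points = np.array([[0.0, 0.0], [0.9, 0.0], [-1.0, 0.0], [0.0, 1.0]])
g = gravity(points)
print(g)  # [3 1 0 0]
```

Point 0 is the nearest neighbor of the three surrounding points, so it has the highest gravity and would be the natural prototype ("black hole") in this toy example.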

B. Challenges and Novel Algorithms

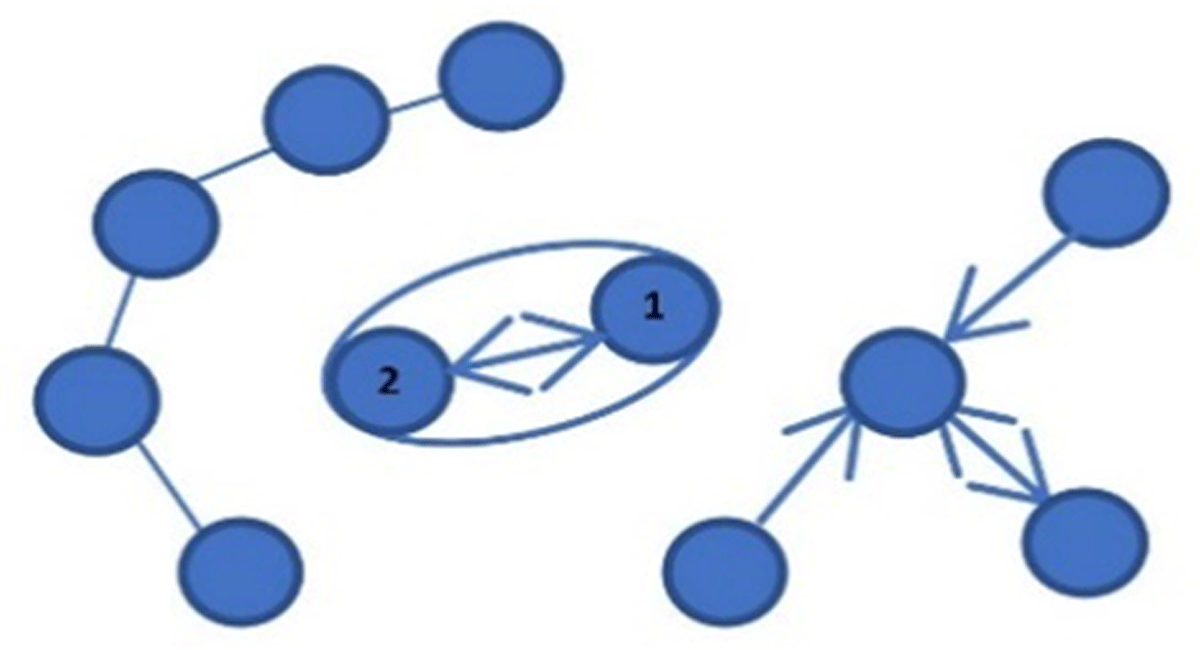

Challenges may arise when two data points designate each other as their nearest neighbor, leading to the complete separation of these points from the cluster. This situation causes the cluster to split into two new clusters. Figure 1 provides a clear example of such a case, where data point 1 designates data point 2 as its nearest neighbor, and vice versa.

Figure 1: Reciprocal nearest neighbor relationship between data point 1 and data point 2.
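The reciprocal case can be detected directly from the nearest-neighbor indices. The following is a small sketch under the assumption of brute-force Euclidean distances; `reciprocal_pairs` is a hypothetical helper name, and the sample coordinates are made up.

```python
import numpy as np

def reciprocal_pairs(points: np.ndarray) -> list:
    """Return index pairs (i, j), i < j, whose members are each other's nearest neighbor."""
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=2))
    np.fill_diagonal(dist, np.inf)
    nearest = dist.argmin(axis=1)
    return [(i, int(j)) for i, j in enumerate(nearest) if i < j and nearest[j] == i]

# Illustrative data (an assumption): points 0/1 and 2/3 pair up, point 4 points at 3.
points = np.array([[0.0, 0.0], [0.2, 0.0], [3.0, 0.0], [3.5, 0.0], [4.5, 0.0]])
pairs = reciprocal_pairs(points)
print(pairs)  # [(0, 1), (2, 3)]
```

If only first-nearest-neighbor links were followed, the pair (0, 1) would never connect to the rest of the data, which is the isolation effect described above.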

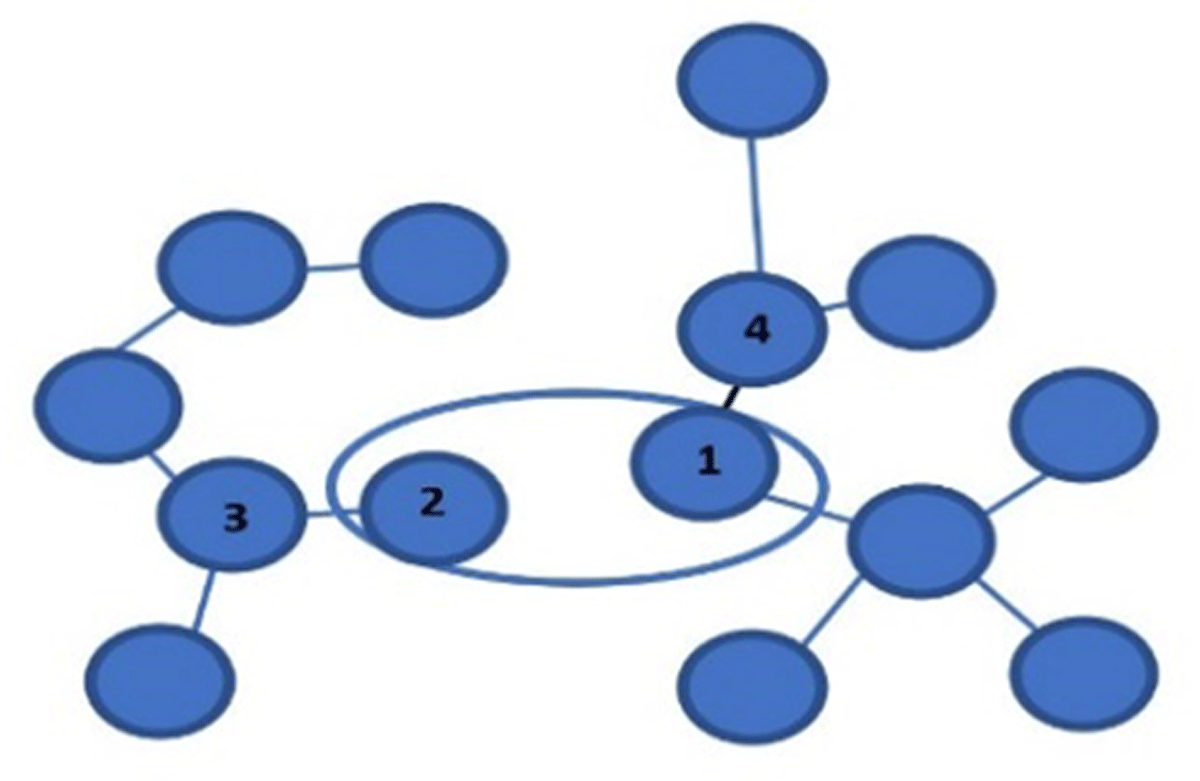

Another challenge arises when a data point, X, has nearby neighbors that are closely grouped together, leading to the splitting of the cluster into two separate clusters. This situation is illustrated in Figure 2 , where data point 1 identifies data point 4 as its nearest neighbor, while data point 2 identifies data point 3 as its nearest neighbor. As a result, data point 3 forms new connections with other data points that are unrelated to the neighbors of data point 4, leading to the formation of two isolated subgroups. Ideally, these isolated subgroups should be classified as a single cluster rather than multiple new subclusters.

Figure 2: Formation of isolated subgroups within a cluster due to neighbor relations.

To effectively tackle these challenges without introducing additional parameters, we introduce two innovative algorithms, namely “Move_data_points” and “Shrink.”

The Move_data_points algorithm is designed to optimize the relationships between data points. It achieves this by relocating the second, third, fourth, and fifth nearest neighboring points (referred to as fifth-level neighbors) either to the given data point or to one of its adjacent points. This adjustment refines the connections among neighboring points and enhances the overall clustering structure. For example, in Figure 2 , data point 2 will have connections with data points 1, 3, and 4, resulting in a single cluster.

However, it’s worth noting that this approach can occasionally lead to the unintended merging of clusters, particularly when noise points act as connectors between distinct clusters. In response to this challenge, we present the “Shrink” algorithm as a complementary solution. The Shrink algorithm operates by transforming data points to positions closer to their nearest neighbors if the distance between them exceeds twice the mean distance between neighboring points. This strategic relocation effectively brings noisy points into closer proximity to their respective nearest clusters while simultaneously disrupting any spurious connections that may exist between clusters.

Together, these two algorithms, Move_data_points and Shrink, work in tandem to refine the clustering results and mitigate potential challenges arising from noisy or poorly connected data points.

As a final step, we evaluate the effectiveness of our black hole clustering approach by comparing it to other widely used clustering algorithms such as K-means, DBSCAN, and OPTICS. The proposed black hole clustering approach offers a promising alternative method for clustering without the need for predefined parameters. This method excels at identifying clusters with non-linear boundaries and can be applied to various data types, including high-dimensional data. Further research can explore the efficiency and effectiveness of this method and its potential for real-world applications.

C. Proposed Algorithm

The BHC-Clustering algorithm starts by loading the dataset into a matrix Z. It then calculates the Euclidean distance between each pair of data points in Z, producing a distance matrix $d_{ecu}$. For every data point, the distances are sorted in ascending order, and the indices of the sorted distances are stored in an array $S_{indices}$. From this array we can read off the fifth-level neighbors of each row's data point. The next step applies the Shrink function to Z using $d_{ecu}$ and $S_{indices}$. As mentioned before, Shrink eliminates or reduces the impact of noise data points. This step modifies the positions of the data points in Z and produces a modified matrix X. The algorithm then iterates over the points in X that have not yet been moved. In each iteration it identifies the parent data point ($P_{dp}$), the data point that occurs most often in the first column of $S_{indices}$, that is, the one most frequently marked as a nearest neighbor, and moves the associated data points in X using the “Move_data_points” algorithm. This process continues until all data points have been moved. Finally, the algorithm returns the modified X matrix, which represents the clustered data points.
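The first steps of this procedure (distance matrix, sorted neighbor indices, fifth-level neighbors, and parent selection) might look as follows in NumPy. This is a sketch under stated assumptions, not the authors' code: the random data is illustrative, the variable names mirror the symbols in the text, and masking the diagonal so that a point is never its own neighbor is a choice made here.

```python
import numpy as np

rng = np.random.default_rng(0)          # illustrative data, not from the paper
Z = rng.normal(size=(30, 2))

# Pairwise Euclidean distances (n x n), the matrix the text calls d_ecu.
diff = Z[:, None, :] - Z[None, :, :]
d_ecu = np.sqrt((diff ** 2).sum(axis=2))

# Sort each row by distance. The diagonal is masked with inf so a point never
# appears as its own neighbor; the masked entry sorts last and is dropped.
masked = d_ecu.copy()
np.fill_diagonal(masked, np.inf)
S_indices = np.argsort(masked, axis=1)[:, :-1]

# First to fifth nearest neighbors of every point ("fifth-level neighbors").
fifth_level = S_indices[:, :5]

# Parent data point: the index that occurs most often in the first column,
# i.e. the point most frequently chosen as a nearest neighbor.
counts = np.bincount(S_indices[:, 0], minlength=len(Z))
P_dp = int(counts.argmax())
print(P_dp, counts[P_dp])
```

Ties in `counts` are resolved by taking the lowest index here; the text does not specify a tie-breaking rule.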

The “Move_data_points” algorithm operates on a dataset and performs the following steps without explicitly referring to individual data points:

For each data point in the dataset, designate a specific data point, referred to as $P_{dp}$, as its nearest neighbor.

Iterate through the dataset again, and for each data point encountered, set its nearest neighbor to $P_{dp}$.

Consider $P_{dp}$ as the second, third, fourth, and fifth nearest neighbor for each data point in the dataset. Update the data points accordingly by assigning $P_{dp}$ as their nearest neighbor.

Finally, return the modified dataset after applying these updates.

In summary, the “Move_data_points” algorithm operates on a dataset, establishing $P_{dp}$ as the nearest neighbor for each data point, and extends this association to the second, third, fourth, and fifth nearest neighbors. The algorithm then updates the data points based on these assignments before returning the modified dataset.
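One possible reading of these steps is sketched below. It is an interpretation, not the published implementation: the text leaves open how the association propagates, so this sketch assumes that a point is pulled onto the parent's position when the parent, or a point already pulled in, appears among its first five nearest neighbors. The helper `neighbor_order`, the `levels` parameter, and the `moved` mask are hypothetical names.

```python
import numpy as np
from collections import deque

def neighbor_order(X: np.ndarray) -> np.ndarray:
    """Row i lists the other points' indices sorted by distance from point i."""
    d = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=2))
    np.fill_diagonal(d, np.inf)
    return np.argsort(d, axis=1)[:, :-1]

def move_data_points(X, S_indices, P_dp, moved, levels=5):
    """Relocate every not-yet-moved point linked to P_dp onto P_dp's position."""
    X, moved = X.copy(), moved.copy()
    moved[P_dp] = True
    queue = deque([P_dp])
    while queue:
        current = queue.popleft()
        # Unmoved points that list `current` among their first `levels` neighbors.
        linked = (S_indices[:, :levels] == current).any(axis=1) & ~moved
        for i in np.where(linked)[0]:
            X[i] = X[P_dp]
            moved[i] = True
            queue.append(int(i))
    return X, moved

# Illustrative data (an assumption): two tight, well-separated groups of six points.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 0.1, size=(6, 2)), rng.normal(10.0, 0.1, size=(6, 2))])
S_indices = neighbor_order(X)
X_new, moved = move_data_points(X, S_indices, P_dp=0, moved=np.zeros(len(X), dtype=bool))
print(moved.sum())  # 6
```

With point 0 as the parent, the six points of the first group collapse onto a single position while the second group is untouched, so each collapsed position can be read as one cluster.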

The Shrink algorithm takes a dataset Z, a distance matrix $d_{ecu}$, and the indices of the nearest neighbors for each data point as input. It performs the following steps:

First, it calculates the mean distance between each data point and its nearest neighbor and stores these mean distances in a variable called mean_dist. Then, for each data point, it checks if the distance between the current data point and its nearest neighbor is greater than three times the mean distance. If this condition is true, it moves the current data point to the position of its nearest neighbor.

In summary, the Shrink algorithm adjusts the positions of data points based on their distances to their nearest neighbors. It ensures that any data point with a distance significantly larger than the mean distance is moved closer to its nearest neighbor. This process enhances the clustering results by bringing scattered points, which may act as outliers, closer to their neighbors.
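A minimal sketch of the Shrink step follows. The text states the threshold once as twice and once as three times the mean nearest-neighbor distance, so the multiplier is exposed here as a `factor` parameter (a hypothetical name) rather than fixed. The function signature and sample data are assumptions for illustration.

```python
import numpy as np

def shrink(Z, d_ecu, S_indices, factor=3.0):
    """Move points that are unusually far from their nearest neighbor onto that neighbor."""
    nn = S_indices[:, 0]                        # nearest neighbor of each point
    nn_dist = d_ecu[np.arange(len(Z)), nn]      # distance to that neighbor
    mean_dist = nn_dist.mean()
    far = nn_dist > factor * mean_dist          # candidate noise points
    X = Z.copy()
    X[far] = Z[nn[far]]                         # relocate to the neighbor's position
    return X, mean_dist

# Illustrative data (an assumption): ten evenly spaced points plus one distant outlier.
Z = np.array([[float(i), 0.0] for i in range(10)] + [[59.0, 0.0]])
d_ecu = np.sqrt(((Z[:, None, :] - Z[None, :, :]) ** 2).sum(axis=2))
masked = d_ecu.copy()
np.fill_diagonal(masked, np.inf)
S_indices = np.argsort(masked, axis=1)[:, :-1]

X, mean_dist = shrink(Z, d_ecu, S_indices, factor=3.0)
print(X[-1])  # [9. 0.]
```

In this example the mean nearest-neighbor distance is 60/11, so the outlier (50 units from its neighbor) exceeds the threshold with either multiplier and is moved onto the last point of the line; the other ten points stay where they are.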

Source code is available on Google Colab at https://colab.research.google.com/drive/1gVMiNf4KPyUCdqqFDQk-5xP_fogHkLaC .

D. Complexity Analysis

In Algorithm 1, BHC-Clustering, the primary contributors to time complexity are the nested loops used for distance calculations and data shrinking, giving a time complexity of $O(n^2 d)$, where n is the number of data points and d is the number of dimensions. The Move_data_points algorithm iterates over the points and potentially reassigns them, resulting in a time complexity of $O(n^2)$. In the Shrink algorithm, the most time-consuming tasks are computing mean distances and shrinking points, for an overall time complexity of $O(n^2 d)$.

The dominant factor in all three algorithms is therefore the nested loops that traverse the dataset and evaluate distances between data points, which leads to a combined time complexity of $O(n^2 d)$.
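The quadratic term can be made concrete by counting coordinate differences in a brute-force pairwise distance computation. This is a back-of-the-envelope sketch that assumes every pair of points is compared exactly once; the worked figure uses the Breast Cancer dataset dimensions reported in the experiments (n = 569, d = 30).

```latex
\[
\binom{n}{2}\, d \;=\; \frac{n(n-1)}{2}\, d \;\in\; O(n^2 d),
\qquad
\frac{569 \cdot 568}{2} \cdot 30 \;=\; 161{,}596 \cdot 30 \;=\; 4{,}847{,}880 .
\]
```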

IV. Experiments and Results

We conducted various experiments using synthetic and real-world datasets to demonstrate the effectiveness of BHC-Clustering and compare it against K-means, DBSCAN, OPTICS, and BIRCH algorithms. Synthetic datasets included both Gaussian and non-Gaussian data, while real-world datasets were also utilized. The experiments were performed using Python version 3.8 on a Windows 10 Education system with an Intel Core i5-10500H CPU running at 2.50GHz and 16 gigabytes of memory. The synthetic datasets were two-dimensional and had varying numbers of true clusters.

In these experiments, we employed BHC-Clustering to determine the optimal number of clusters and then compared the results with the aforementioned clustering methods. The goal was to evaluate the performance of each method in clustering the data and assess the effectiveness of BHC-Clustering in particular.

A. Synthetic Data Sets

We conducted our experiments using two synthetic data sets. The first data set contained two clusters, while the second data set consisted of 15 clusters. Initially, we applied our proposed method to automatically determine the number of clusters, and our approach successfully predicted the correct number of clusters.

To compare the performance of our proposed algorithm, BHC-Clustering, we also utilized several other popular clustering algorithms, namely K-means, DBSCAN, OPTICS, and BIRCH. We applied these algorithms to the data sets and evaluated their results against our proposed method.

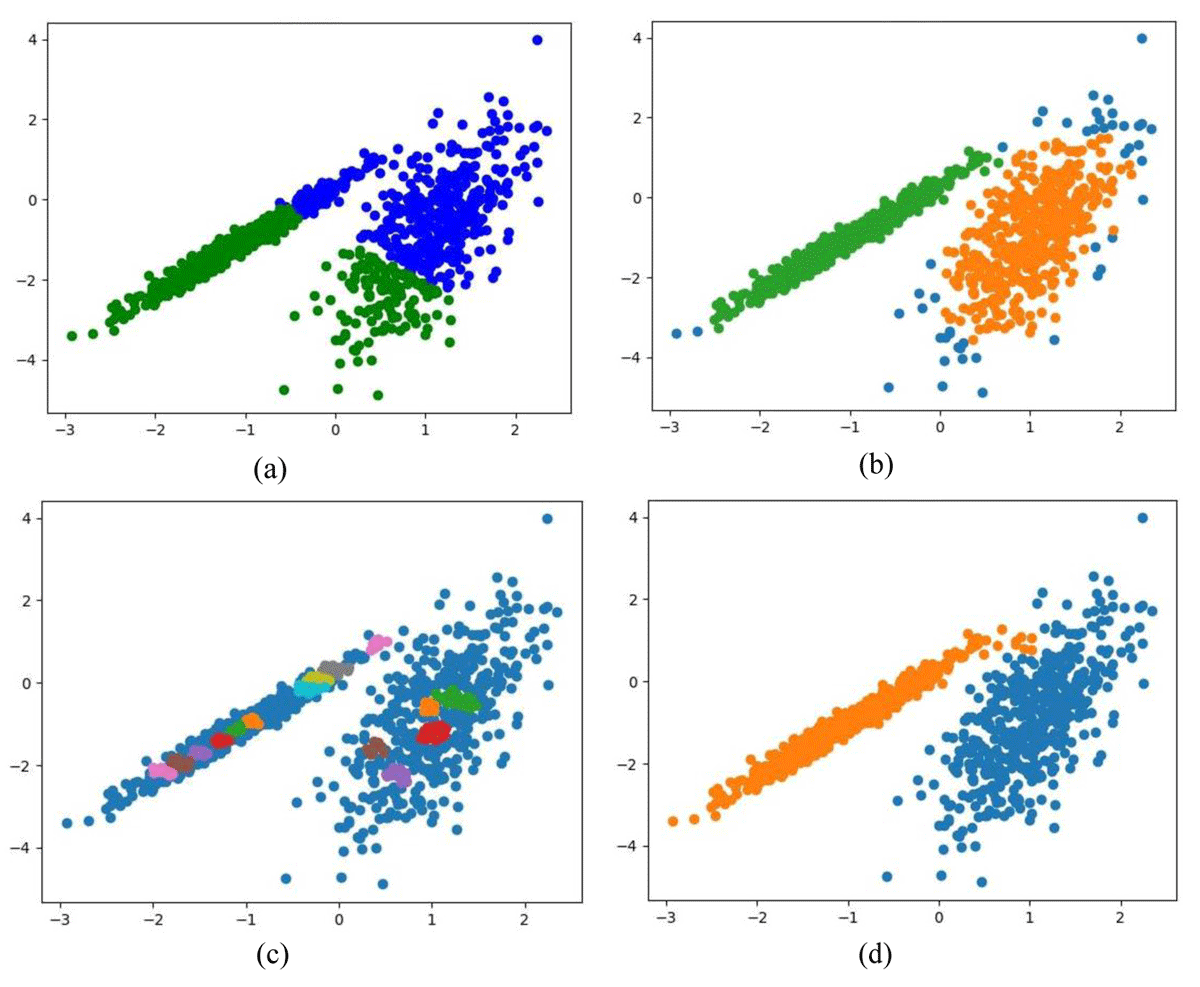

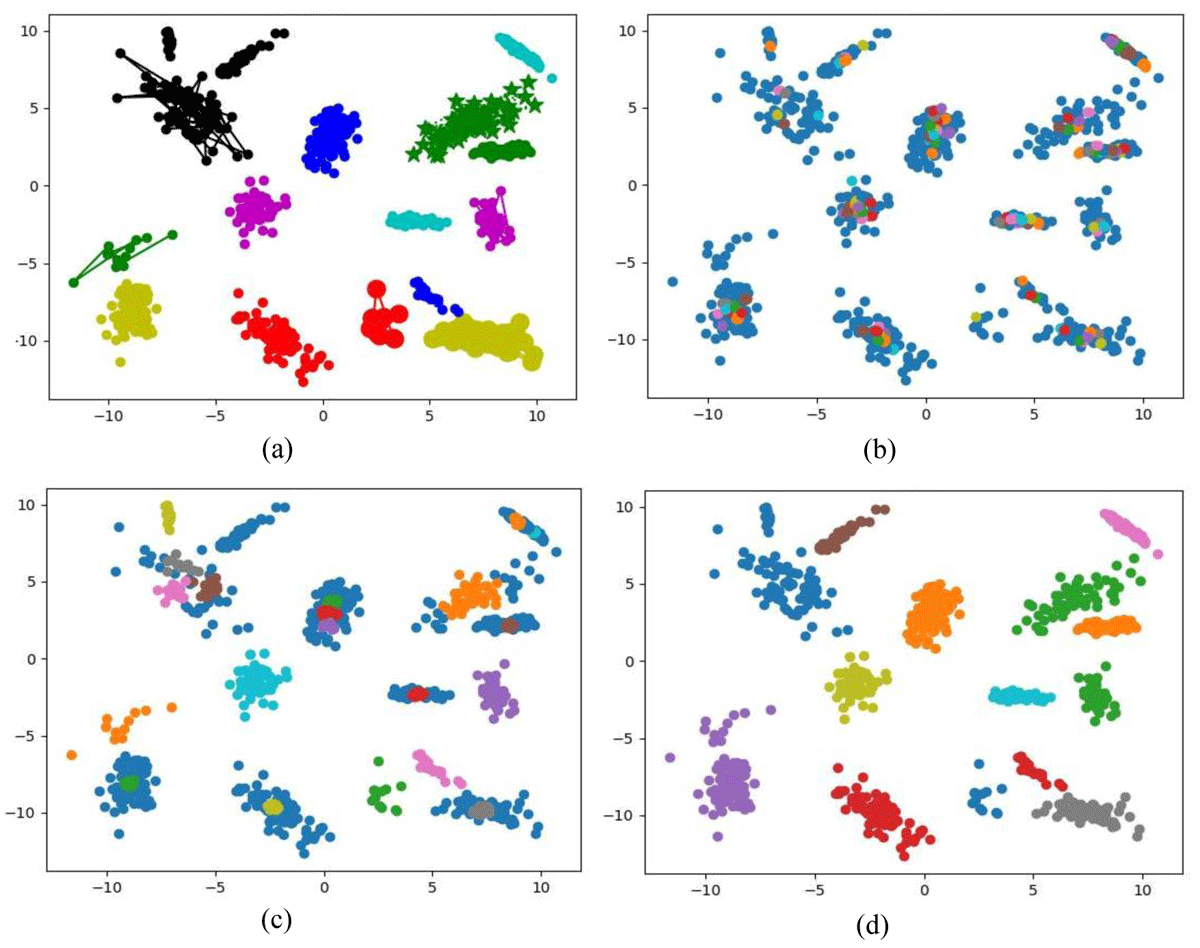

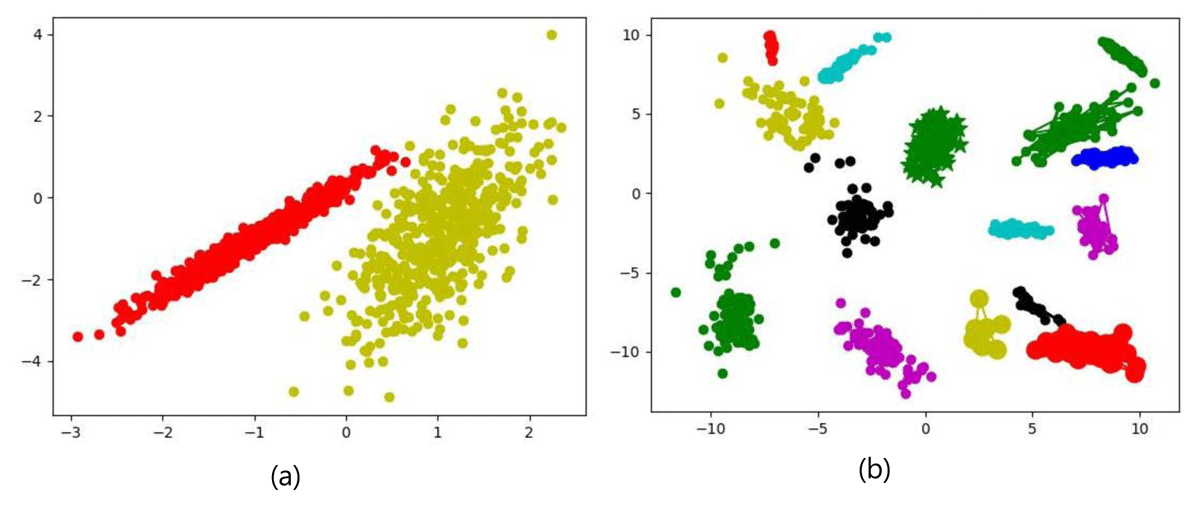

Figures 3 and 4 depict the clustering results obtained from applying the mentioned algorithms to the 2D-synthetic data sets. Specifically, when utilizing K-means clustering ( Figures 3(a) and 4(a) ), the algorithm exhibited unsatisfactory performance across the datasets. It incorrectly merged half of each cluster with half of the others, resulting in inaccurate classifications.

Figure 3: Comparison of clustering algorithms on 2D synthetic data sets with two clusters: (a) K-means clustering results, (b) DBSCAN clustering results, (c) OPTICS clustering results, and (d) BIRCH clustering results.

Figure 4: Comparison of clustering algorithms on 2D synthetic data sets with 15 clusters: (a) K-means clustering results, (b) DBSCAN clustering results, (c) OPTICS clustering results, and (d) BIRCH clustering results.

Similarly, the application of DBSCAN ( Figures 3(b) and 4(b) ) showed unsatisfactory performance across the datasets, leading to an incorrect number of clusters. This resulted in the misclassification of data points and the formation of spurious clusters. Evidence of this can be observed from the presence of misclassified data points and the existence of scattered points that should have been grouped together in coherent clusters.

Likewise, OPTICS ( Figures 3(c) and 4(c) ) also demonstrated poor performance. It frequently led to an excessive increase in the number of clusters and caused the fragmentation of clusters into multiple smaller clusters in certain cases. As a result, OPTICS consistently produced inaccurate classifications across the datasets. On the other hand, the BIRCH algorithm yielded significantly better results in clustering, as evident in Figures 3(d) and 4(d) . However, it classified the data set into 14 clusters instead of the expected 15 clusters.

To provide a comprehensive comparison, Figure 5 presents the performance of our proposed BHC-Clustering approach against the aforementioned algorithms. The results clearly demonstrate that our proposed method outperformed the other algorithms in terms of clustering accuracy and overall performance.

Figure 5: Performance comparison of BHC-Clustering against other algorithms.

Our contribution lies in the accurate prediction of the number of clusters in the data. Determining the optimal number of clusters is a crucial step in clustering analysis, as it directly affects the quality of the results. Traditional clustering algorithms, such as K-means, often require the number of clusters to be specified in advance, which can be challenging, especially when working with unfamiliar or complex datasets. This advancement in cluster prediction enhances the accuracy and reliability of clustering results. It enables us to uncover the underlying structure of the data more effectively. Moreover, our approach reduces the burden on users by automating the process of selecting the number of clusters, making it more accessible and efficient for various applications in data analysis and machine learning.

Overall, the experiments conducted on synthetic data sets provide valuable insights into the performance and suitability of BHC-Clustering for different clustering tasks.

B. Real-World Data Sets

In addition to the synthetic data sets, we also tested BHC-Clustering on real-world datasets to assess its performance. Table 1 summarizes the characteristics of these real datasets. They serve as practical benchmarks for evaluating BHC-Clustering in complex real-world scenarios.

Table 1: Characteristics of real-world datasets.

The first data set, Iris Plants, consists of 150 instances and belongs to three different classes. It has four dimensions, capturing various features of iris plants. This dataset is one of the earliest datasets used in the literature on classification methods and is widely used in statistics and machine learning. The data set contains three classes of 50 instances each, where each class refers to a type of iris plant. One class is linearly separable from the other two; the latter are not linearly separable from each other ( Fisher 1988 ).

The second dataset, Wine, contains 178 instances and is divided into three classes. It is a high-dimensional dataset with 13 dimensions, representing different chemical properties of wines. These data are the results of a chemical analysis of wines grown in the same region in Italy but derived from three different cultivars. The analysis determined the quantities of 13 constituents found in each of the three types of wines. This dataset is selected for its complexity, allowing us to assess the proposed BHC algorithm’s performance in handling high-dimensional data with distinct attributes ( Aeberhard et al. 1991 ).

The third data set, Breast Cancer (BC), is composed of 569 instances and has two classes. It is a particularly challenging data set due to its high dimensionality, with 30 different attributes related to breast cancer diagnosis. These attributes are derived from digitized images of fine needle aspirates (FNA) of breast masses, describing characteristics of cell nuclei within the images ( Wolberg et al. 1995 ). The selection of this dataset was motivated by its high dimensionality and real-world relevance, rendering it a valuable testbed for our clustering algorithm in a healthcare context.

The fourth data set, Seeds-Dataset (SD), contains measurements of the geometrical properties of wheat kernels. The examined group comprised kernels belonging to three different varieties of wheat (Kama, Rosa, and Canadian), 70 elements each, randomly selected for the experiment. All seven real-valued attributes were constructed using a soft X-ray technique and the GRAINS package. The soft X-ray technique provides high-quality visualization of the internal kernel structure; it is non-destructive and considerably cheaper than more sophisticated imaging techniques such as scanning microscopy or laser technology. The images were recorded on 13x18 cm X-ray KODAK plates. The studies used combine-harvested wheat grain originating from experimental fields explored at the Institute of Agrophysics of the Polish Academy of Sciences in Lublin ( Charytanowicz et al. 2012 ).

The fifth dataset is the Glass dataset, providing a more realistic scenario with 214 instances and 10 attributes. Each instance in this dataset represents a unique piece of glass, and the class attribute indicates the type of glass based on the manufacturing process. There are six distinct types of glass, representing different manufacturing techniques ( German 1987 ). The study of the classification of types of glass was motivated by a criminological investigation. At the scene of the crime, the glass left can be used as evidence, making this dataset particularly relevant for forensic and investigative applications.

These real-world data sets serve as practical benchmarks to assess the effectiveness and applicability of BHC-Clustering in diverse and complex real-world scenarios. The subsequent sections will present the experimental results and comparisons for each of these data sets.

The results presented in Table 2 demonstrate the accuracy rates of various clustering algorithms, namely BHC-Clustering, DBSCAN, OPTICS, and K-means, applied to five real-world data sets: Iris, Wine, Breast Cancer, Seeds-Dataset, and Glass.

Table 2: Predicted and actual number of classes and accuracy rates of clustering algorithms on real-world datasets.

C. Evaluation Metrics

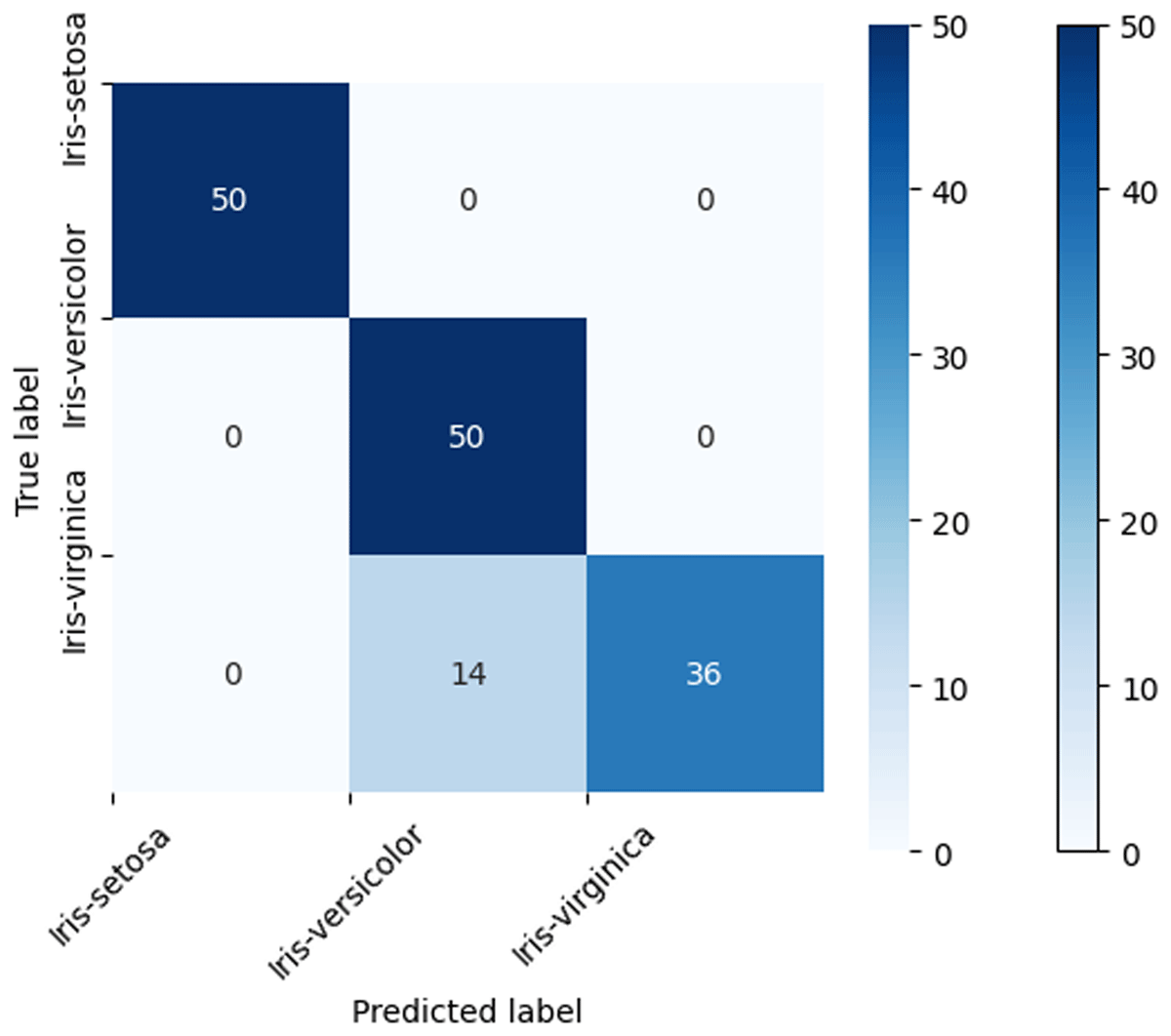

To assess the BHC algorithm’s effectiveness, we utilized confusion matrices as our primary evaluation tool. A confusion matrix is a valuable resource in clustering and unsupervised learning. It aids in gauging how effectively data points are grouped into clusters by comparing assigned cluster labels to actual cluster memberships. This matrix tallies the instances that were correctly and incorrectly assigned to clusters, offering insights into the algorithm’s performance.

The diagonal values within the matrix represent correctly clustered instances. To compute the overall accuracy, we divided these diagonal values by the total number of instances. For visual clarity, Figure 6 illustrates the BHC algorithm’s classification of the Iris dataset. The accompanying confusion matrix reveals that out of a total of 150 instances, 136 were correctly classified, resulting in an accuracy rate of 90.7%.

Figure 6: Confusion matrix for Iris dataset clustering using BHC algorithm.
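The accuracy computation described above reduces to the trace of the confusion matrix divided by its total. The matrix below is hypothetical: only the totals (136 correct out of 150) come from the text, and the individual cell values are invented so that the diagonal sums to 136. The sketch also assumes that cluster labels have already been matched to the true classes.

```python
import numpy as np

# Hypothetical 3x3 confusion matrix (rows: true class, columns: assigned cluster).
# Only the diagonal sum (136) and the total (150) are taken from the paper.
conf = np.array([[50,  0,  0],
                 [ 0, 45,  5],
                 [ 0,  9, 41]])

accuracy = np.trace(conf) / conf.sum()
print(f"{accuracy:.1%}")  # 90.7%
```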

D. Experimental Results and Evaluation

Notably, the proposed method achieved remarkable success in accurately predicting the number of clusters, as indicated by the column “Pred.” It consistently achieved a perfect prediction rate, highlighting its significance in effectively determining the true number of clusters.

For the Iris data set, BHC-Clustering achieved an accuracy rate of 90.7%, outperforming the other algorithms. DBSCAN and OPTICS had relatively lower accuracy rates of 66% and 67%, respectively, while K-means performed poorly with an accuracy rate of only 24%.

In the case of the Wine data set, BHC-Clustering achieved a moderate accuracy rate of 62%, surpassing DBSCAN (33%) and K-means (16%) but falling behind OPTICS (67%). It is worth noting that none of the algorithms achieved high accuracy on this particular data set.

For the Breast Cancer (BC) data set, BHC-Clustering achieved a decent accuracy rate of 70.3%. DBSCAN (63%) and OPTICS (72%) also performed reasonably well, but K-means excelled with an accuracy rate of 85%.

For the Seeds-Dataset (SD), BHC-Clustering achieved a moderate accuracy rate of 63% and again outperformed the other algorithms. DBSCAN, K-means, and OPTICS had significantly lower accuracy rates of 28%, 26%, and 18%, respectively.

In the Glass Identification (GI) data set, BHC-Clustering achieved an accuracy rate of 76.2%, demonstrating its effectiveness in clustering this particular data set. DBSCAN and OPTICS had lower accuracy rates of 23.8% and 16%, respectively, while K-means performed relatively better with an accuracy rate of 45%.

Overall, the results suggest that BHC-Clustering exhibits competitive performance compared to the other algorithms in terms of clustering accuracy. However, the performance varies depending on the data set, indicating the importance of considering the characteristics and complexity of the data when selecting a suitable clustering algorithm. The proposed method’s success in accurately predicting the number of clusters demonstrates its potential for enhancing the clustering process.
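The per-dataset comparison can be tallied with a few lines of Python. The accuracy values are the ones quoted in the paragraphs above; the dictionary layout and variable names are only an illustrative way of organizing them.

```python
# Accuracy rates (%) as reported in the text for each real-world dataset.
reported = {
    "Iris":          {"BHC": 90.7, "DBSCAN": 66.0, "OPTICS": 67.0, "K-means": 24.0},
    "Wine":          {"BHC": 62.0, "DBSCAN": 33.0, "OPTICS": 67.0, "K-means": 16.0},
    "Breast Cancer": {"BHC": 70.3, "DBSCAN": 63.0, "OPTICS": 72.0, "K-means": 85.0},
    "Seeds":         {"BHC": 63.0, "DBSCAN": 28.0, "OPTICS": 18.0, "K-means": 26.0},
    "Glass":         {"BHC": 76.2, "DBSCAN": 23.8, "OPTICS": 16.0, "K-means": 45.0},
}

# Most accurate algorithm per dataset, and how often that is BHC.
best = {name: max(scores, key=scores.get) for name, scores in reported.items()}
bhc_wins = sum(1 for winner in best.values() if winner == "BHC")

print(best)
print(bhc_wins)  # 3
```

BHC-Clustering is the most accurate method on Iris, Seeds, and Glass, while OPTICS leads on Wine and K-means on Breast Cancer, which matches the dataset-dependent picture described above.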

V. Conclusion

The proposed BHC-Clustering method has been extensively investigated and applied to synthetic and real-world datasets. This approach utilizes the concept of black holes to attract nearby data points and form clusters. The method exhibits robust performance in accurately predicting the number of clusters and achieving competitive clustering accuracy rates.

Comparative evaluations against popular clustering algorithms, such as K-means, DBSCAN, OPTICS, and BIRCH, show that BHC-Clustering outperforms K-means on four of the five real-world datasets (K-means is more accurate on Breast Cancer) and achieves comparable or superior results compared to DBSCAN and OPTICS. Although BIRCH shows promise, it identified 14 rather than 15 clusters on the second synthetic dataset.

Furthermore, the application of BHC-Clustering on real-world datasets, including Iris, Wine, Breast Cancer, Seeds-Dataset, and Glass, showcases its effectiveness across different domains. It demonstrates varying levels of performance, depending on the characteristics of the dataset. The findings emphasize the reliability and effectiveness of BHC-Clustering as a clustering approach and encourage further research to refine the method, assess its efficiency, and explore its applicability in diverse applications. Overall, BHC-Clustering offers a promising alternative for clustering tasks, providing accurate cluster prediction and competitive clustering accuracy on a variety of datasets, including real-world scenarios.

However, it is important to acknowledge the challenge posed by the algorithm’s complexity, which scales as O(n²·d), particularly when real-world data contain multiple noise points. Further research in this direction is needed. Future work in this field should focus on:

Complexity reduction: Reducing the O(n²·d) complexity of the BHC-Clustering method to improve its efficiency and scalability, especially when dealing with large datasets and intricate cluster structures.

Noise handling: Developing advanced mechanisms to enhance the algorithm’s ability to identify and manage multiple noise points effectively. This will bolster its applicability in noisy, real-world environments and ensure more efficient clustering outcomes.
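To make the O(n²·d) cost concrete, the sketch below assumes that the quadratic term comes from evaluating the attraction between every pair of points, with each distance costing O(d) for d-dimensional data. The unit masses, the inverse-square law, and the name `pairwise_attraction` are illustrative assumptions and not the paper's exact force definition.

```python
def pairwise_attraction(points):
    """Return each point's summed pull from all others, plus the pair count.

    Assumptions (illustrative only): unit masses and an inverse-square
    attraction. The nested loop visits n*(n-1)/2 pairs, and each squared
    distance costs O(d), which gives O(n^2 * d) overall.
    """
    n = len(points)
    pull = [0.0] * n
    distance_evaluations = 0
    for i in range(n):
        for j in range(i + 1, n):
            # Squared Euclidean distance: one pass over the d coordinates.
            sq = sum((a - b) ** 2 for a, b in zip(points[i], points[j]))
            distance_evaluations += 1
            if sq > 0.0:  # skip coincident points to avoid division by zero
                force = 1.0 / sq
                pull[i] += force
                pull[j] += force
    return pull, distance_evaluations


# Four 2-D toy points (made up for illustration).
pts = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (3.0, 4.0)]
pull, evaluations = pairwise_attraction(pts)
```

With four points the loop performs six distance evaluations. Doubling the number of points roughly quadruples that count, which is why reducing this cost is listed as a priority for large datasets.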

Competing Interests

The authors have no competing interests to declare.

Aeberhard, M, Stefan, M and Forina, M 1991. Wine. UCI Machine Learning Repository . DOI: https://doi.org/10.24432/C5PC7J

Agrawal, R, Gehrke, J, Gunopulos, D and Raghavan, P 1998. Automatic subspace clustering of high dimensional data for data mining applications. ACM SIGMOD Record , 27(2): 94–105. DOI: https://doi.org/10.1145/276305.276314

Ankerst, M, Breunig, MM, Kriegel, HP and Sande, J 1999. Optics: ordering points to identify the clustering structure. ACM SIGMOD Record , 28(2): 49–60. DOI: https://doi.org/10.1145/304181.304187

Birant, D and Kut, A 2007. St-dbscan: An algorithm for clustering spatial–temporal data. Data & Knowledge Engineering , 60(1): 208–221. DOI: https://doi.org/10.1016/j.datak.2006.01.013

Cadiou, E, Sarzi, M and Dubois, Y 2020. Gravitational clustering of stars and gas in galaxy simulations. Monthly Notices of the Royal Astronomical Society , 496(4): 4986–5001.

Cai, B, Huang, G, Yong, X, Jing, H, Huang, GL, Ke, D, et al. 2018. Clustering of multiple density peaks. In: 22nd Pacific–Asia Conference, PAKDD 2018, Melbourne, Australia on 3–6 June 2018, 413–425. DOI: https://doi.org/10.1007/978-3-319-93040-4_33

Cai, J, Hao, J, Yang, H, Zhao, X, Yang, Y, et al. 2023. A review on semi-supervised clustering. Information Sciences , 632: 164–200. DOI: https://doi.org/10.1016/j.ins.2023.02.088

Charytanowicz, M, Jerzy, N, Piotr, K, Piotr, K, Szymon, L, et al. 2012. Seeds. UCI Machine Learning Repository . DOI: https://doi.org/10.24432/C5H30K

Chen, X, Wu, H, Lichti, D, Han, X, Ban, Y, Li, P, Deng, H, et al. 2022. Extraction of indoor objects based on the exponential function density clustering model. Information Sciences , 607: 1111–1135. DOI: https://doi.org/10.1016/j.ins.2022.06.032

Das, S, Abraham, A and Konar, A 2008. Automatic kernel clustering with a multi-elitist particle swarm optimization algorithm. Pattern Recognition Letters , 29(5): 688–699. DOI: https://doi.org/10.1016/j.patrec.2007.12.002

Dhawan, AP and Dai, S 2018. Clustering and pattern classification. In: Dhawan, AP, Huang, HK, and Kim, DS (eds.), Principles and Advanced Methods in Medical Imaging and Image Analysis . Singapore: World Scientific. pp. 229–265. DOI: https://doi.org/10.1142/9789812814807_0010

Elfarra, BK, El Khateeb, TJ and Ashour, WM 2013. BH-centroids: A new efficient clustering algorithm. Work , 1(1): 15–24. DOI: https://doi.org/10.14257/ijaiasd.2013.1.1.02

Ertöz, L, Steinbach, M and Kumar, V 2003. Finding clusters of different sizes shapes and densities in noisy high dimensional data. In: The 2003 SIAM International Conference on Data Mining, San Francisco, CA on 1–3 May 2003, pp. 47–58. DOI: https://doi.org/10.1137/1.9781611972733.5

Ester, M, Kriegel, HP, Sander, J and Xu, X 1996. A density-based algorithm for discovering clusters in large spatial databases with noise. In: The 2nd International Conference on Knowledge Discovery and Data Mining, Portland, Oregon on 2–4 August 1996, pp. 226–231.

Fisher, RA 1988. Iris. UCI Machine Learning Repository . DOI: https://doi.org/10.24432/C56C76

German, B 1987. Glass Identification. UCI Machine Learning Repository . DOI: https://doi.org/10.24432/C5WW2P

Ghazal, T, Hussain MZ, Said, RA and Nadeem, A 2021. Performances of K-means clustering algorithm with Different Distance Metrics. Intelligent Automation and Soft Computing , 30(2): 735–742. DOI: https://doi.org/10.32604/iasc.2021.019067

Hai-Feng, Y, Xiao-Na, Y, Jiang-Hui, C, Yu-Qing, Y, et al. 2023. An in-depth exploration of LAMOST unknown spectra based on density clustering. Research in Astronomy and Astrophysics , 23(5). DOI: https://doi.org/10.1088/1674-4527/acc507

He, Y, Tan, H, Luo, W, Mao, H, Ma, D, Feng, S, Fan, J et al. 2011. Mr-dbscan: An efficient parallel density-based clustering algorithm using mapreduce. In: 2011 IEEE 17th International Conference on Parallel and Distrubuted Systems, Tianan, Taiwan on 7–9 December 2011, pp. 473–480. DOI: https://doi.org/10.1109/ICPADS.2011.83

Hinneburg, A and Keim, DA 1998. An efficient approach to clustering in large multimedia databases with noise. In: KDD ’98: Proceedings of the Fourth International Conference on Knowledge Discovery and Data Mining, New York, NY on 27–31 August 1998, pp. 58–65.

Huang, Y, Yang, H and Zhang, L 2019. A novel clustering algorithm based on gravity. Journal of Ambient Intelligence and Humanized Computing , 10(6):2461–2470.

Jankowiak, M, Kaczmarek, M, Wozniak, M and Wojciechowski, K 2017. Gravity-based clustering of time series data. Information Sciences , 385-386: 52–64.

Jarvis, RA and Patrick, EA 1973. Clustering using a similarity measure based on shared near neighbors. IEEE Transactions on Computers , C–22(11): 1025–1034. DOI: https://doi.org/10.1109/T-C.1973.223640

Kuwil, FH, Atila, Ü, Abu-Issa, R and Murtagh, F 2020. A novel data clustering algorithm based on gravity center methodology. Expert Systems with Applications , 156: 113435. DOI: https://doi.org/10.1016/j.eswa.2020.113435

Liu, P, Zhou, D and Wu, N 2007. VDBSCAN: varied density based spatial clustering of applications with noise. In: International Conference on Service Systems and Service Management, Chengdu, China on 9–11 June 2007, pp. 1–4. DOI: https://doi.org/10.1109/ICSSSM.2007.4280175

Liu, R, Wang, H and Yu, X 2018. Shared-nearest-neighbor-based clustering by fast search and find of density peaks. Information Sciiences , 450: 200–226. DOI: https://doi.org/10.1016/j.ins.2018.03.031

Liu, W and Hou, J 2016. Study on a density peak based clustering algorithm. In: 7th International Conference on Intelligent Control and Information Processing (ICICIP), Siem Reap, Cambodia on 1–4 December 2016, pp. 60–67. DOI: https://doi.org/10.1109/ICICIP.2016.7885877

Louhichi, S, Gzara, M and Ben-Abdallah, H 2017. Unsupervised varied density based clustering algorithm using spline. Pattern Recognition Letters , 93: 48–57. DOI: https://doi.org/10.1016/j.patrec.2016.10.014

Ni, L, Luo, W, Zhu, W and Liu, W 2019. Clustering by finding prominent peaks in density space. Engineering Applications Of Artifical Intelligence , 85: 727–739. DOI: https://doi.org/10.1016/j.engappai.2019.07.015

Rodriguez, A and Laio, A 2014. Clustering by fast search and find of density peaks. Science , 344(6191): 1492–1496. DOI: https://doi.org/10.1126/science.1242072

Wolberg, W, Mangasarian, O, Street, N and Street, W 1995. Breast Cancer Wisconsin (Diagnostic). UCI Machine Learning Repository . DOI: https://doi.org/10.24432/C5DW2B

Xu, R and Wunsch, D, II 2005. Survey of clustering algorithms. IEEE Transactions on Neural Networks , 16(3): 645–678. DOI: https://doi.org/10.1109/TNN.2005.845141

Xu, X, Ding, S, Du, M and Xue, Y 2016. DPCG: An efficient density peaks clustering algorithm based on grid. International Journal of Machine Learnning and Cybernetics , 9: 743–754. DOI: https://doi.org/10.1007/s13042-016-0603-2

Yan, Z, Luo, W, Bu, C and Ni, L 2016. Clustering spatial data by the neighbors intersection and the density difference. In: UCC’16: 9th International Conference on Utility and Cloud Computing, Shanghai, China on 6–9 December 2016, pp. 217–226.


A comprehensive and analytical review of text clustering techniques

- Published: 08 April 2024

Cite this article

- Vivek Mehta 1 ,

- Mohit Agarwal 1 &

- Rohit Kumar Kaliyar 1

106 Accesses

Explore all metrics

Document clustering involves grouping together documents so that similar documents are grouped together in the same cluster and different documents in the different clusters. Clustering of documents is considered a fundamental problem in the field of text mining. With a high rise in textual content over the Internet in recent years makes this problem more and more challenging. For example, SpringerNature has alone published more than 67,000 articles in the last few years just on the topic of COVID-19. This high volume leads to the challenge of very high dimensionality in analyzing the textual datasets. In this review paper, several text clustering techniques are reviewed and analyzed theoretically as well as experimentally. The reviewed techniques range from traditional non-semantic to some state-of-the-art semantic text clustering techniques. The individual performances of these techniques are experimentally compared and analyzed on several datasets using different performance measures such as purity, Silhouette coefficient, and adjusted rand index. Additionally, significant research gaps are also presented to give the readers a direction for future research.

This is a preview of subscription content, log in via an institution to check access.

Access this article

Price includes VAT (Russian Federation)

Instant access to the full article PDF.

Rent this article via DeepDyve

Institutional subscriptions

Similar content being viewed by others

An Analytical Approach to Document Clustering Techniques

Combining semantic and term frequency similarities for text clustering

Text Clustering Using Novel Hybrid Algorithm

Data availability.

All the datasets analyzed in this paper are publicly available, links to which have been included as footnotes in the Sect. 3.1 .

https://wordnet.princeton.edu/ .

https://nlp.stanford.edu/projects/glove/ .

https://fasttext.cc/ .

https://github.com/facebookresearch/fastText .

https://github.com/google-research/bert .

Available at: https://vhasoares.github.io/downloads.html . Accessed: 2022-04-08.

Available at: http://qwone.com/~jason/20Newsgroups/ . Accessed: 2022-04-08.

Abbasi-Moud, Z., Vahdat-Nejad, H., Sadri, J.: Tourism recommendation system based on semantic clustering and sentiment analysis. Expert Syst. Appl. 167 , 114324 (2021)

Article Google Scholar

Agrawal, R., Gehrke, J., Gunopulos, D., Raghavan, P.: Automatic subspace clustering of high dimensional data. Data Min. Knowl. Disc. 11 (1), 5–33 (2005)

Article MathSciNet Google Scholar

Alammar, J.: The illustrated Bert, Elmo, and Co. http://jalammar.github.io/illustrated-bert/ (2018). Accessed 25 Jan 2021

Almeida, F., Xexéo, G.: Word embeddings: a survey (2019). arXiv preprint arXiv:1901.09069

Altınçay, H., Erenel, Z.: Analytical evaluation of term weighting schemes for text categorization. Pattern Recogn. Lett. 31 (11), 1310–1323 (2010)

Ankerst, M., Breunig, M.M., Kriegel, H.P., Sander, J.: Optics: ordering points to identify the clustering structure. In: ACM Sigmod Record, vol. 28, pp. 49–60. ACM (1999)

Baghel, R., Dhir, R.: Text document clustering based on frequent concepts. In: 2010 First International Conference On Parallel, Distributed and Grid Computing (PDGC 2010), pp. 366–371. IEEE (2010)

Bakarov, A.: A survey of word embeddings evaluation methods (2018). arXiv preprint arXiv:1801.09536

Beyer, K., Goldstein, J., Ramakrishnan, R., Shaft, U.: When is “nearest neighbor” meaningful? In: International Conference on Database Theory, pp. 217–235. Springer, Berlin (1999)

Bezdek, J.C., Ehrlich, R., Full, W.: FCM: the fuzzy c-means clustering algorithm. Comput. Geosci. 10 (2–3), 191–203 (1984)

Blei, D.M., Ng, A.Y., Jordan, M.I.: Latent Dirichlet allocation. J. Mach. Learn. Res. 3 (Jan), 993–1022 (2003)

Google Scholar

Bojanowski, P., Grave, E., Joulin, A., Mikolov, T.: Enriching word vectors with subword information. Trans. Assoc. Comput. Linguist. 5 , 135–146 (2017)

Bottou, L.: Stochastic gradient descent tricks. In: Neural networks: Tricks of the Trade, pp. 421–436. Springer, Berlin (2012)

Bouras, C., Tsogkas, V.: A clustering technique for news articles using wordnet. Knowl.-Based Syst. 36 , 115–128 (2012)

Brainard, J.: Scientists are drowning in covid-19 papers. can new tools keep them afloat. Science 13 (10), 1126 (2020)

Covid-19 research highlights. https://www.springernature.com/in/researchers/campaigns/coronavirus (2020). Accessed 06 May 2022

Camacho-Collados, J., Pilehvar, M.T.: From word to sense embeddings: a survey on vector representations of meaning. J. Artif. Intell. Res. 63 , 743–788 (2018)

Cecchini, F.M., Riedl, M., Fersini, E., Biemann, C.: A comparison of graph-based word sense induction clustering algorithms in a pseudoword evaluation framework. Lang. Resour. Eval. 52 , 733–770 (2018)

Deerwester, S., Dumais, S.T., Furnas, G.W., Landauer, T.K., Harshman, R.: Indexing by latent semantic analysis. J. Am. Soc. Inf. Sci. 41 (6), 391–407 (1990)

Dempster, A.P., Laird, N.M., Rubin, D.B.: Maximum likelihood from incomplete data via the EM algorithm. J. R. Stat. Soc. Ser. B (Methodol.) 1–38 (1977)

Devlin, J., Chang, M.W., Lee, K., Toutanova, K.: Bert: Pre-training of deep bidirectional transformers for language understanding (2018). arXiv preprint arXiv:1810.04805

Dey, A., Bhattacharyya, S., Dey, S., Platos, J., Snasel, V.: A quantum inspired differential evolution algorithm for automatic clustering of real life datasets. Multimedia Tools Appl. 1–30 (2023)

Duan, T., Lou, Q., Srihari, S.N., Xie, X.: Sequential embedding induced text clustering, a non-parametric Bayesian approach. In: Pacific-Asia Conference on Knowledge Discovery and Data Mining, pp. 68–80. Springer, Berlin (2019)

Elhadad, M.K., Badran, K.M., Salama, G.I.: A novel approach for ontology-based dimensionality reduction for web text document classification. Int. J. Software Innov. (IJSI) 5 (4), 44–58 (2017)

Ester, M., Kriegel, H.P., Sander, J., Xu, X., et al.: A density-based algorithm for discovering clusters in large spatial databases with noise. In: KDD, vol. 96, pp. 226–231 (1996)

Firth, J.R.: A synopsis of linguistic theory, 1930-1955. Studies in linguistic analysis (1957)

Fisher, D.H.: Knowledge acquisition via incremental conceptual clustering. Mach. Learn. 2 (2), 139–172 (1987)

Fodeh, S., Punch, B., Tan, P.N.: On ontology-driven document clustering using core semantic features. Knowl. Inf. Syst. 28 (2), 395–421 (2011)

Gennari, J.H., Langley, P., Fisher, D.: Models of incremental concept formation. Artif. Intell. 40 (1–3), 11–61 (1989)

Guha, S., Rastogi, R., Shim, K.: Cure: an efficient clustering algorithm for large databases. In: ACM Sigmod Record, vol. 27, pp. 73–84. ACM (1998)

Guha, S., Rastogi, R., Shim, K.: ROCK: a robust clustering algorithm for categorical attributes. Inf. Syst. 25 (5), 345–366 (2000)

Han, J., Pei, J., Kamber, M.: Data Mining: Concepts and Techniques. Elsevier, New York (2011)

Harris, Z.S.: Distributional structure. Word 10 (2–3), 146–162 (1954)

Hinneburg, A., Keim, D.A.: Optimal grid-clustering: towards breaking the curse of dimensionality in high-dimensional clustering. In: Proceedings of the 25th International Conference on Very Large Databases, pp. 506–517 (1999)

Hinneburg, A., Keim, D.A., et al.: An efficient approach to clustering in large multimedia databases with noise. In: KDD, vol. 98, pp. 58–65 (1998)

Hirst, G., St-Onge, D., et al.: Lexical chains as representations of context for the detection and correction of malapropisms. WordNet Electronic Lexical Database 305 , 305–332 (1998)

Hofmann, T.: Probabilistic latent semantic analysis (2013). arXiv preprint arXiv:1301.6705

Hosseini, S., Varzaneh, Z.A.: Deep text clustering using stacked AutoEncoder. Multimedia Tools Appl. 81 (8), 10861–10881 (2022)

Hotho, A., Staab, S., Stumme, G.: Ontologies improve text document clustering. In: Third IEEE International Conference on Data Mining, pp. 541–544. IEEE (2003)

Huang, A., Milne, D., Frank, E., Witten, I.H.: Clustering documents using a wikipedia-based concept representation. In: Pacific-Asia Conference on Knowledge Discovery and Data Mining, pp. 628–636. Springer, Berlin (2009)

Huang, Z.: A fast clustering algorithm to cluster very large categorical data sets in data mining. DMKD 3 (8), 34–39 (1997)

Hubert, L., Arabie, P.: Comparing partitions. J Classif 2 , 193–218 (1985)

Jain, A.K., Dubes, R.C.: Algorithms for Clustering Data. Prentice Hall, Englewood Cliffs (1988)

Jain, D., Borah, M.D., Biswas, A.: A sentence is known by the company it keeps: improving legal document summarization using deep clustering. Artif. Intell. Law 1–36 (2023)

Jardine, N., van Rijsbergen, C.J.: The use of hierarchic clustering in information retrieval. Inf. Storage Retrieval 7 (5), 217–240 (1971)

Jasinska-Piadlo, A., Bond, R., Biglarbeigi, P., Brisk, R., Campbell, P., Browne, F., McEneaneny, D.: Data-driven versus a domain-led approach to k-means clustering on an open heart failure dataset. Int. J. Data Sci. Anal. 15 (1), 49–66 (2023)

Jayarajan, D., Deodhare, D., Ravindran, B.: Document clustering using lexical chains (2007)

Jayarajan, D., Deodhare, D., Ravindran, B.: Lexical chains as document features. In: Proceedings of the Third International Joint Conference on Natural Language Processing: Volume-I (2008)

Joulin, A., Grave, E., Bojanowski, P., Mikolov, T.: Bag of tricks for efficient text classification (2016). arXiv preprint arXiv:1607.01759

Karypis, G., Han, E.H., Kumar, V.: Chameleon: Hierarchical clustering using dynamic modeling. Computer 32 (8), 68–75 (1999)

Kim, J., Yoon, J., Park, E., Choi, S.: Patent document clustering with deep embeddings. Scientometrics 1–15 (2020)

Kohonen, T.: The self-organizing map. Neurocomputing 21 (1–3), 1–6 (1998)

Lan, M., Sung, S.Y., Low, H.B., Tan, C.L.: A comparative study on term weighting schemes for text categorization. In: Proceedings. 2005 IEEE International Joint Conference on Neural Networks, 2005., vol. 1, pp. 546–551. IEEE (2005)

Li, Y., Cai, J., Wang, J.: A text document clustering method based on weighted Bert model. In: 2020 IEEE 4th Information Technology, Networking, Electronic and Automation Control Conference (ITNEC), vol. 1, pp. 1426–1430. IEEE (2020)

Li, Y., Luo, C., Chung, S.M.: A parallel text document clustering algorithm based on neighbors. Clust. Comput. 18 (2), 933–948 (2015)

Liu, Z., Lin, Y., Sun, M.: Representation Learning for Natural Language Processing. Springer Nature, Berlin (2020)

Book Google Scholar

Luo, C., Li, Y., Chung, S.M.: Text document clustering based on neighbors. Data Knowl. Eng. 68 (11), 1271–1288 (2009)

MacQueen, J., et al.: Some methods for classification and analysis of multivariate observations. In: Proceedings of the Fifth Berkeley Symposium on Mathematical Statistics and Probability, vol. 1, pp. 281–297. Oakland, CA, USA (1967)

Manning, C.D., Schütze, H., Raghavan, P.: Introduction to Information Retrieval. Cambridge University Press, Cambridge (2008)

McInnes, L., Healy, J., Astels, S.: hdbscan: Hierarchical density based clustering. J. Open Source Softw. 2 (11), 205 (2017)

Mehta, V., Bawa, S., Singh, J.: Stamantic clustering: Combining statistical and semantic features for clustering of large text datasets. Expert Syst. Appl. 174 , 114710 (2021)

Mehta, V., Bawa, S., Singh, J.: Weclustering: word embeddings based text clustering technique for large datasets. Complex Intell. Syst. 7 (6), 3211–3224 (2021)

Mikolov, T., Chen, K., Corrado, G., Dean, J.: Efficient estimation of word representations in vector space (2013). arXiv preprint arXiv:1301.3781

Mikolov, T., Sutskever, I., Chen, K., Corrado, G.S., Dean, J.: Distributed representations of words and phrases and their compositionality. Adv. Neural. Inf. Process. Syst. 26 , 3111–3119 (2013)

Miller, G.A.: Wordnet: a lexical database for English. Commun. ACM 38 (11), 39–41 (1995)

Morris, J., Hirst, G.: Lexical cohesion computed by thesaural relations as an indicator of the structure of text. Comput. Linguist. 17 (1), 21–48 (1991)

Mustafi, D., Mustafi, A.: A differential evolution based algorithm to cluster text corpora using lazy re-evaluation of fringe points. Multimedia Tools Appl. 1–25 (2023)

Naik, A., Maeda, H., Kanojia, V., Fujita, S.: Scalable Twitter user clustering approach boosted by Personalized PageRank. Int. J. Data Sci. Anal. 6 (4), 297–309 (2018)

Nasir, J.A., Varlamis, I., Karim, A., Tsatsaronis, G.: Semantic smoothing for text clustering. Knowl.-Based Syst. 54 , 216–229 (2013)

Ng, R.T., Han, J.: Efficient and effective clustering methods for spatial data mining. In: Proceedings of VLDB, pp. 144–155 (1994)

Ng, R.T., Han, J.: CLARANS: a method for clustering objects for spatial data mining. IEEE Trans. Knowl. Data Eng. 14 (5), 1003–1016 (2002)

Park, H.S., Jun, C.H.: A simple and fast algorithm for k-medoids clustering. Expert Syst. Appl. 36 (2), 3336–3341 (2009)

Park, J., Park, C., Kim, J., Cho, M., Park, S.: ADC: advanced document clustering using contextualized representations. Expert Syst. Appl. 137 , 157–166 (2019)

Patil, L.H., Atique, M.: A semantic approach for effective document clustering using wordnet (2013). arXiv preprint arXiv:1303.0489

Pennington, J., Socher, R., Manning, C.D.: Glove: Global vectors for word representation. In: Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), pp. 1532–1543 (2014)

Peters, M.E., Neumann, M., Iyyer, M., Gardner, M., Clark, C., Lee, K., Zettlemoyer, L.: Deep contextualized word representations (2018). arXiv preprint arXiv:1802.05365

Recupero, D.R.: A new unsupervised method for document clustering by using wordnet lexical and conceptual relations. Inf. Retrieval 10 (6), 563–579 (2007)

Robertson, S.: Understanding inverse document frequency: on theoretical arguments for IDF. J. Doc. 60 (5), 503–520 (2004)

Roul, R.K.: An effective approach for semantic-based clustering and topic-based ranking of web documents. Int. J. Data Sci. Anal. 5 , 269–284 (2018)

Schubert, E., Sander, J., Ester, M., Kriegel, H.P., Xu, X.: DBSCAN revisited, revisited: why and how you should (still) use DBSCAN. ACM Trans. Database Syst. (TODS) 42 (3), 1–21 (2017)

Sedding, J., Kazakov, D.: Wordnet-based text document clustering. In: proceedings of the 3rd Workshop on Robust Methods in Analysis of Natural Language Data, pp. 104–113. Association for Computational Linguistics (2004)

Sehgal, G., Garg, D.K.: Comparison of various clustering algorithms. Int. J. Comput. Sci. Inf. Technol. 5 (3), 3074–3076 (2014)

Sheikholeslami, G., Chatterjee, S., Zhang, A.: Wavecluster: a multi-resolution clustering approach for very large spatial databases. In: VLDB, vol. 98, pp. 428–439 (1998)

Shi, H., Wang, C., Sakai, T.: Self-supervised document clustering based on Bert with data augment (2020). arXiv preprint arXiv:2011.08523

Shirkhorshidi, A.S., Aghabozorgi, S., Wah, T.Y.: A comparison study on similarity and dissimilarity measures in clustering continuous data. PLoS ONE 10 (12), e0144059 (2015)

Sinoara, R.A., Camacho-Collados, J., Rossi, R.G., Navigli, R., Rezende, S.O.: Knowledge-enhanced document embeddings for text classification. Knowl.-Based Syst. 163 , 955–971 (2019)

Steinbach, M., Ertöz, L., Kumar, V.: The challenges of clustering high dimensional data. In: New Directions in Statistical Physics, pp. 273–309. Springer, Berlin (2004)

Steinbach, M., Karypis, G., Kumar, V., et al.: A comparison of document clustering techniques. In: KDD Workshop on Text Mining, vol. 400, pp. 525–526. Boston (2000)

Urkude, G., Pandey, M.: Design and development of density-based effective document clustering method using ontology. Multimedia Tools Appl. 81 (23), 32995–33015 (2022)

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł., Polosukhin, I.: Attention is all you need. In: Advances in Neural Information Processing Systems, vol. 30 (2017)

Wang, S., Zhou, W., Jiang, C.: A survey of word embeddings based on deep learning. Computing 102 (3), 717–740 (2020)

Wang, W., Yang, J., Muntz, R., et al.: Sting: a statistical information grid approach to spatial data mining. In: VLDB, vol. 97, pp. 186–195 (1997)

Wei, T., Lu, Y., Chang, H., Zhou, Q., Bao, X.: A semantic approach for text clustering using wordnet and lexical chains. Expert Syst. Appl. 42 (4), 2264–2275 (2015)

Xu, D., Tian, Y.: A comprehensive survey of clustering algorithms. Ann. Data Sci. 2 (2), 165–193 (2015)

Xu, X., Ester, M., Kriegel, H.P., Sander, J.: A distribution-based clustering algorithm for mining in large spatial databases. In: 14th International Conference on Data Engineering, 1998. Proceedings, pp. 324–331. IEEE (1998)

Yue, L., Zuo, W., Peng, T., Wang, Y., Han, X.: A fuzzy document clustering approach based on domain-specified ontology. Data Knowl. Eng. 100 , 148–166 (2015)

Zhang, T., Ramakrishnan, R., Livny, M.: Birch: an efficient data clustering method for very large databases. In: ACM Sigmod Record, vol. 25, pp. 103–114. ACM (1996)

Download references

Author information

Authors and affiliations.

Bennett University, Greater Noida, Uttar Pradesh, 201310, India

Vivek Mehta, Mohit Agarwal & Rohit Kumar Kaliyar

You can also search for this author in PubMed Google Scholar

Corresponding author

Correspondence to Vivek Mehta .

Ethics declarations

Conflict of interest.

On behalf of all authors, the corresponding author states that there is no conflict of interest.

Additional information

Publisher's note.

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

Reprints and permissions

About this article

Mehta, V., Agarwal, M. & Kaliyar, R.K. A comprehensive and analytical review of text clustering techniques. Int J Data Sci Anal (2024). https://doi.org/10.1007/s41060-024-00540-x

Download citation

Received : 06 October 2023

Accepted : 18 March 2024

Published : 08 April 2024

DOI : https://doi.org/10.1007/s41060-024-00540-x

Share this article

Anyone you share the following link with will be able to read this content:

Sorry, a shareable link is not currently available for this article.

Provided by the Springer Nature SharedIt content-sharing initiative

- Text clustering

- Semantic text clustering

- Word Embeddings

- Curse of dimensionality

Clustering is an essential tool in data mining research and applications. It is the subject of active research in many fields of study, such as computer science, data science, statistics, pattern recognition, artificial intelligence, and machine learning. ... much has been achieved regarding clustering with new emerging research directions in ...

Despite these limitations, the K-means clustering algorithm is credited with flexibility, efficiency, and ease of implementation. It is also among the top ten clustering algorithms in data mining [59], [217], [105], [94]. Its simplicity and low computational complexity have given the K-means clustering algorithm wide acceptance in many domains for solving clustering problems.

The components of data clustering are the steps needed to perform a clustering task. Different taxonomies have been used in the classification of data clustering algorithms. Some words commonly used are approaches, methods or techniques (Jain et al. 1999; Liao 2005; Bulò and Pelillo 2017; Govender and Sivakumar 2020). However, clustering algorithms have the tendency of being grouped or ...

The CODATA Data Science Journal is a peer-reviewed, open access, electronic journal, publishing papers on the management, dissemination, use and reuse of research data and databases across all research domains, including science, technology, the humanities and the arts. The scope of the journal includes descriptions of data systems, their implementations and their publication, applications ...

Clustering is an essential tool in data mining research and applications. It is the subject of active research in many fields of study, such as computer science, data science, statistics, pattern recognition, artificial intelligence, and machine learning. Several clustering techniques have been proposed and implemented, and most of them successfully find excellent quality or optimal clustering ...

Optimized data mining and clustering models can provide insightful information about the transmission pattern of the COVID-19 outbreak. The improved and efficient k-means clustering algorithms proposed previously choose the initial centers either by randomization [15, 17] or with the k-means++ algorithm [15, 32]. Those processes of selecting ...
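As a rough illustration of the k-means++ initialization mentioned above, the following minimal sketch picks the first center uniformly at random and each later center with probability proportional to its squared distance from the nearest center already chosen. It is not taken from any of the cited works; the function names `sq_dist` and `kmeans_pp_init`, the tuple-based point format, and the `seed` parameter are assumptions made for this example.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans_pp_init(points, k, seed=0):
    """Choose k initial centers by k-means++ style (squared-distance weighted) seeding.

    Hypothetical helper for illustration; `points` is a list of numeric tuples.
    """
    if not 1 <= k <= len(points):
        raise ValueError("k must be between 1 and len(points)")
    rng = random.Random(seed)
    # First center: uniform random choice.
    centers = [rng.choice(points)]
    while len(centers) < k:
        # Squared distance from every point to its nearest chosen center.
        d2 = [min(sq_dist(p, c) for c in centers) for p in points]
        if sum(d2) == 0:
            # Every point coincides with a chosen center; fall back to uniform choice.
            centers.append(rng.choice(points))
        else:
            # Points far from all current centers are more likely to be picked.
            centers.append(rng.choices(points, weights=d2, k=1)[0])
    return centers


if __name__ == "__main__":
    data = [(0.0, 0.0), (0.0, 1.0), (10.0, 10.0), (10.0, 11.0)]
    print(kmeans_pp_init(data, 2, seed=1))
```

Plain random initialization would replace the weighted draw with a uniform one; the weighting is what tends to spread the initial centers across well-separated groups.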

Cluster analysis is an essential tool in data mining. Several clustering algorithms have been proposed and implemented, most of which are able to find good quality clustering results. However, the majority of the traditional clustering algorithms, such as the K-means, K-medoids, and Chameleon, still depend on being provided a priori with the number of clusters and may struggle to deal with ...

Abstract: Clustering is a necessary data pre-processing method in data mining research, which aims to obtain the intrinsic distribution structure of valuable datasets from unlabeled datasets and thus simplify the description of the datasets. Data mining technology can discover potential and valuable knowledge from a large amount of data, giving a new meaning to the massive amount of data ...

Data mining (DM) is a practice in which large data stores are searched automatically to find patterns and trends that go beyond simple analysis. Data mining is also known as knowledge discovery in databases (KDD); in clustering, similar items are grouped into clusters. Clustering is one of the main analytical methods in DM. The clustering method directly influences clustering results ...

This systematic review aims to summarize the cluster analysis and grouping techniques used to date and make recommendations for future developments. Clustering is a necessary data pre-processing method in data mining research, which aims to obtain the intrinsic distribution structure of valuable datasets from unlabeled datasets and thus simplify the description of the datasets.

The foremost illustrative task in the data mining process is clustering. It plays an exceedingly important role in the entire KDD process, as categorizing data is one of the most rudimentary steps in knowledge discovery. It is an unsupervised learning task used for exploratory data analysis to find unrevealed patterns that are present in data but cannot be categorized clearly. Sets of ...

Data mining is an analytical approach that contributes to achieving a solution to many problems by extracting previously unknown, fascinating, nontrivial, and potentially valuable information from massive datasets. Clustering in data mining is used for splitting or segmenting data items/points into meaningful groups and clusters by grouping the items that are near to each other based on ...

This paper uses the K-means algorithm to optimize the neural network clustering data mining algorithm, and designs experiments to verify the neural network data mining clustering optimization algorithm proposed in this paper. The experimental research results in this paper show that on four UCI datasets, the mean MP values of the neural network ...

Epidemic diseases can be extremely dangerous because of their hazardous effects. They may have negative effects on economies, businesses, the environment, humans, and the workforce. In this paper, some of the factors that are interrelated with the COVID-19 pandemic have been examined using data mining methodologies and approaches.

The applications of clustering in image segmentation, object and character recognition, information retrieval and data mining are highlighted in the paper. There is an abundant amount of literature available on clustering and its applications, and it is not possible to cover it entirely; only basic and a few important methods are included ...

Abstract: Clustering analysis is one of the main analytical methods in data mining, and the choice of clustering algorithm directly influences the clustering results. This paper discusses the standard k-means clustering algorithm and analyzes its shortcomings; for example, the k-means clustering algorithm has to calculate the distance between each data object ...

Hierarchical Agglomerative Clustering. Correctly defining and grouping electrical feeders is of great importance for electrical system operators. In this paper, we compare two different clustering techniques, K-means and hierarchical agglomerative clustering, applied to real data from the east region of Paraguay.

Abstract. Data mining is the procedure of extracting information from a data set and transforming that information into a comprehensible structure for processing. Clustering is a data mining technique used to group data elements into related groups or partitions. Thus, the process of partitioning data objects into subclasses is termed 'clustering'.

Information gain, gain ratio, Gini decrease, chi-square, and ReliefF are used to rank the features. This work comprises the introduction, literature review, and proposed methodology parts. In this research paper, a new method of analyzing skin disease has been proposed in which six different data mining techniques are used to develop an ...

Clustering technology has important applications in data mining, pattern recognition, machine learning and other fields. However, with the explosive growth of data, traditional clustering algorithms find it increasingly difficult to meet the needs of big data analysis. How to improve the traditional clustering algorithm and ensure the quality and efficiency of clustering under the background of big ...

This paper presents a new large-text data mining method, the random walk algorithm, which accurately extracts two basic and complementary words from numerous text data. ... The scientific significance of this paper lies in its research on clustering algorithms, bringing forth new ideas and putting forward a new text data processing algorithm. ...

Clustering analysis has been a major topic of data mining research for many years, and distance-based clustering analysis has been the main focus of that research. The K-medoids algorithm, the K-means algorithm and other clustering algorithms based on clustering mining tools are widely used in many statistical analysis software or system ...

In order to do that, the IS group helps organizations to: (i) understand the business needs and value propositions and accordingly design the required business and information system architecture; (ii) design, implement, and improve the operational processes and supporting (information) systems that address the business need, and (iii) use advanced data analytics methods and techniques to ...

Document clustering involves grouping documents so that similar documents fall in the same cluster and dissimilar documents fall in different clusters. Clustering of documents is considered a fundamental problem in the field of text mining. The sharp rise in textual content on the Internet in recent years makes this problem more and more challenging. For example ...