
What is a Research Problem? Characteristics, Types, and Examples

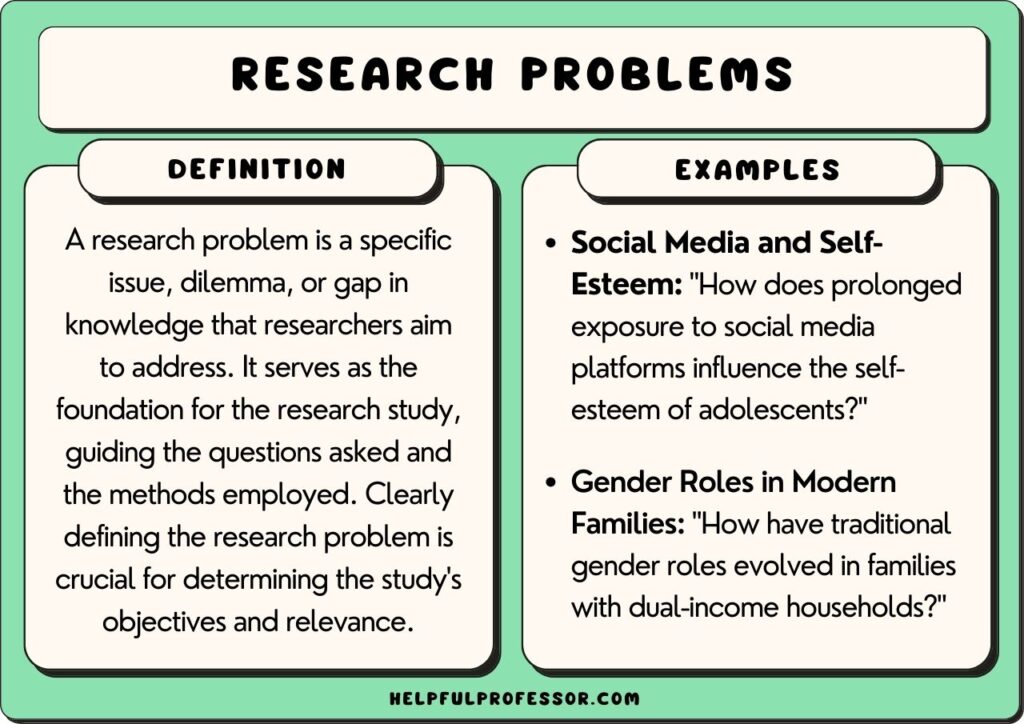

A research problem is a gap in existing knowledge, a contradiction in an established theory, or a real-world challenge that a researcher aims to address. It is at the heart of any scientific inquiry, directing the trajectory of an investigation. The statement of a problem orients the reader to the importance of the topic, sets the problem in a particular context, and defines the relevant parameters, providing the framework for reporting the findings. Therein lies the importance of research problems.

The formulation of well-defined research questions is central to addressing a research problem. A research question is a statement posed in question form to give focus, clarity, and structure to the research endeavor. It helps the researcher design methodologies, collect data, and analyze results in a systematic and coherent manner. A study may have one or more research questions, depending on its nature.

Identifying and addressing a research problem is essential. By starting with a pertinent problem, a scholar can contribute to the accumulation of evidence-based insights, solutions, and scientific progress, thereby advancing the frontier of research. Moreover, the process of formulating research problems and posing pertinent research questions cultivates critical thinking and hones problem-solving skills.


What is a Research Problem?

Before you conceive of your project, you need to ask yourself “What is a research problem?” A research problem can be broadly defined as the primary statement of a knowledge gap or a fundamental challenge in a field, which forms the foundation for research. In turn, the findings from a research investigation provide solutions to the problem.

A research problem guides the selection of approaches and methodologies, data collection, and interpretation of results to find answers or solutions. A well-defined problem determines the generation of valuable insights and contributions to the broader intellectual discourse.

Characteristics of a Research Problem

Knowing the characteristics of a research problem is instrumental in formulating a research inquiry; take a look at the five key characteristics below:

Novel: An ideal research problem introduces a fresh perspective, offering something new to the existing body of knowledge. It should contribute original insights and address unresolved matters or gaps in essential knowledge.

Significant: A problem should hold significance in terms of its potential impact on theory, practice, policy, or the understanding of a particular phenomenon. It should be relevant to the field of study, addressing a gap in knowledge, a practical concern, or a theoretical dilemma.

Feasible: A practical research problem allows for the formulation of hypotheses and the design of research methodologies. A feasible research problem is one that can realistically be investigated given the available resources, time, and expertise. It should not be too broad or too narrow to explore effectively, and should be measurable in terms of its variables and outcomes. It should be amenable to investigation through empirical research methods, such as data collection and analysis, to arrive at meaningful conclusions. A practical research problem considers budgetary and time constraints, as well as limitations of the problem. These limitations may arise due to constraints in methodology, resources, or the complexity of the problem.

Clear and specific: A well-defined research problem is clear and specific, leaving no room for ambiguity; it should be easily understandable and precisely articulated. Specificity ensures that the problem is focused and addresses a distinct aspect of the broader topic.

Rooted in evidence: A good research problem leans on trustworthy evidence and data, while dismissing unverifiable information. It must also consider ethical guidelines, ensuring the well-being and rights of any individuals or groups involved in the study.

Types of Research Problems

Across fields and disciplines, there are different types of research problems. We can broadly categorize them into three types.

- Theoretical research problems

Theoretical research problems deal with conceptual and intellectual inquiries that may not involve empirical data collection but instead seek to advance our understanding of complex concepts, theories, and phenomena within their respective disciplines. For example, in the social sciences, research problems may be casuist (relating to the determination of right and wrong in questions of conduct or conscience), difference (comparing or contrasting two or more phenomena), descriptive (describing a situation or state), or relational (investigating characteristics that are related in some way).

Here are some theoretical research problem examples:

- Ethical frameworks that can provide coherent justifications for artificial intelligence and machine learning algorithms, especially in contexts involving autonomous decision-making and moral agency.

- Determining how mathematical models can elucidate the gradual development of complex traits, such as intricate anatomical structures or elaborate behaviors, through successive generations.

- Applied research problems

Applied or practical research problems focus on addressing real-world challenges and generating practical solutions to improve various aspects of society, technology, health, and the environment.

Here are some applied research problem examples:

- Studying the use of precision agriculture techniques to optimize crop yield and minimize resource waste.

- Designing a more energy-efficient and sustainable transportation system for a city to reduce carbon emissions.

- Action research problems

Action research problems aim to create positive change within specific contexts by involving stakeholders, implementing interventions, and evaluating outcomes in a collaborative manner.

Here are some action research problem examples:

- Partnering with healthcare professionals to identify barriers to patient adherence to medication regimens and devising interventions to address them.

- Collaborating with a nonprofit organization to evaluate the effectiveness of their programs aimed at providing job training for underserved populations.

These different types of research problems may give you some ideas when you plan on developing your own.

How to Define a Research Problem

You might now ask, “How do I define a research problem?” These are the general steps to follow:

- Look for a broad problem area: Identify under-explored aspects or areas of concern, or a controversy in your topic of interest. Evaluate the significance of addressing the problem in terms of its potential contribution to the field, practical applications, or theoretical insights.

- Learn more about the problem: Read the literature, starting from historical aspects to the current status and latest updates. Rely on reputable evidence and data. Be sure to consult researchers who work in the relevant field, mentors, and peers. Do not ignore the gray literature on the subject.

- Identify the relevant variables and how they are related: Consider which variables are most important to the study and will help answer the research question. Once this is done, you will need to determine the relationships between these variables and how these relationships affect the research problem.

- Think of practical aspects: Deliberate on ways that your study can be practical and feasible in terms of time and resources. Discuss practical aspects with researchers in the field and be open to revising the problem based on feedback. Refine the scope of the research problem to make it manageable and specific.

- Formulate the problem statement: Craft a concise problem statement that outlines the specific issue, its relevance, and why it needs further investigation.

- Stick to plans, but be flexible: When defining the problem, plan ahead and adhere to your budget and timeline. At the same time, consider all possibilities and ensure that the problem and question can be modified if needed.

Key Takeaways

- A research problem concerns an area of interest, a situation necessitating improvement, an obstacle requiring eradication, or a challenge in theory or practical applications.

- The importance of a research problem is that it guides the research and helps advance human understanding and the development of practical solutions.

- Research problem definition begins with identifying a broad problem area, followed by learning more about the problem, identifying the variables and how they are related, considering practical aspects, and finally developing the problem statement.

- Different types of research problems include theoretical, applied, and action research problems, and these depend on the discipline and nature of the study.

- An ideal problem is original, important, feasible, specific, and based on evidence.

Frequently Asked Questions

Why is it important to define a research problem?

Identifying potential issues and gaps as research problems is important for choosing a relevant topic and for determining a well-defined course of one’s research. Pinpointing a problem and formulating research questions can help researchers build their critical thinking, curiosity, and problem-solving abilities.

How do I identify a research problem?

Identifying a research problem involves recognizing gaps in existing knowledge, exploring areas of uncertainty, and assessing the significance of addressing these gaps within a specific field of study. This process often involves thorough literature review, discussions with experts, and considering practical implications.

Can a research problem change during the research process?

Yes, a research problem can change during the research process. During the course of an investigation a researcher might discover new perspectives, complexities, or insights that prompt a reevaluation of the initial problem. The scope of the problem, unforeseen or unexpected issues, or other limitations might prompt some tweaks. You should be able to adjust the problem to ensure that the study remains relevant and aligned with the evolving understanding of the subject matter.

How does a research problem relate to research questions or hypotheses?

A research problem sets the stage for the study. Next, research questions refine the direction of investigation by breaking down the broader research problem into manageable components. Research questions are formulated based on the problem, guiding the investigation’s scope and objectives. The hypothesis provides a testable statement to validate or refute within the research process. All three elements are interconnected and work together to guide the research.

How to identify and resolve research problems

Updated July 12, 2023

In this article, we’re going to take you through one of the most pertinent parts of conducting research: a research problem (also known as a research problem statement).

When trying to formulate a good research statement, and understand how to solve it for complex projects, it can be difficult to know where to start.

Not only are there multiple perspectives (from stakeholders to project marketers who want answers), you have to consider the particular context of the research topic: is it timely, is it relevant and most importantly of all, is it valuable?

In other words: are you looking at a research-worthy problem?

The fact is, a well-defined, precise, and goal-centric research problem will keep your researchers, stakeholders, and business focused and your results actionable.

And when it works well, it's a powerful tool to identify practical solutions that can drive change and secure buy-in from your workforce.

What is a research problem?

In social research methodology and behavioral sciences, a research problem establishes the direction of research, often relating to a specific topic or opportunity for discussion.

For example: climate change and sustainability, analyzing moral dilemmas or wage disparity amongst classes could all be areas that the research problem focuses on.

As well as outlining the topic and/or opportunity, a research problem will explain:

- why the area/issue needs to be addressed

- why the area/issue is of importance

- the parameters of the research study

- the research objective

- the reporting framework for the results

- the overall benefit of doing so (whether to society as a whole or to other researchers and projects)

Having identified the main topic or opportunity for discussion, you can then narrow it down into one or several specific questions that can be scrutinized and answered through the research process.

What are research questions?

Generating research questions underpinning your study usually starts with problems that require further research and understanding while fulfilling the objectives of the study.

A good problem statement begins by asking deeper questions to gain insights about a specific topic.

For example, using the problems above, our questions could be:

"How will climate change policies influence sustainability standards across specific geographies?"

"What measures can be taken to address wage disparity without increasing inflation?"

Developing a research-worthy problem is the first step - and one of the most important - in any kind of research.

It’s also a task that will come up again and again because any business research process is cyclical. New questions arise as you iterate and progress through discovering, refining, and improving your products and processes. A research question can also be referred to as a "problem statement".

Note: good research supports multiple perspectives through empirical data. It’s focused on key concepts rather than a broad area, providing readily actionable insight and areas for further research.

Research question or research problem?

As we've highlighted, the terms “research question” and “research problem” are often used interchangeably, becoming a vague or broad proposition for many.

The term "problem statement" is far more representative, but finds little use among academics.

Instead, some researchers think in terms of a single research problem and several research questions that arise from it.

As mentioned above, the questions are lines of inquiry to explore in trying to solve the overarching research problem.

Ultimately, this provides a more meaningful understanding of a topic area.

It may be useful to think of questions and problems as coming out of your business data – that’s the O-data (otherwise known as operational data) like sales figures and website metrics.

What's an example of a research problem?

Your overall research problem could be: "How do we improve sales across EMEA and reduce lost deals?"

This research problem then has a subset of questions, such as:

"Why do sales peak at certain times of the day?"

"Why are customers abandoning their online carts at the point of sale?"

As well as helping you to solve business problems, research problems (and associated questions) help you to think critically about topics and/or issues (business or otherwise). You can also use your old research to aid future research -- a good example is laying the foundation for comparative trend reports or a complex research project.

(Also, if you want to see the bigger picture when it comes to research problems, why not check out our ultimate guide to market research? In it you'll find out: what effective market research looks like, the use cases for market research, carrying out a research study, and how to examine and action research findings).

The research process: why are research problems important?

Research problems have two essential roles in setting your research project on a course for success.

1. They set the scope

The research problem defines what problem or opportunity you’re looking at and what your research goals are. It stops you from getting side-tracked or allowing the scope of research to creep off-course.

Without a strong research problem or problem statement, your team could end up spending resources unnecessarily, or coming up with results that aren’t actionable - or worse, harmful to your business - because the field of study is too broad.

2. They tie your work to business goals and actions

To formulate a research problem in terms of business decisions means you always have clarity on what’s needed to make those decisions. You can show the effects of what you’ve studied using real outcomes.

Then, by focusing your research problem statement on a series of questions tied to business objectives, you can reduce the risk of the research being unactionable or inaccurate.

It's also worth examining research or other scholarly literature (you’ll find plenty of similar, pertinent research online) to see how others have explored specific topics and noting implications that could have for your research.

Four steps to defining your research problem

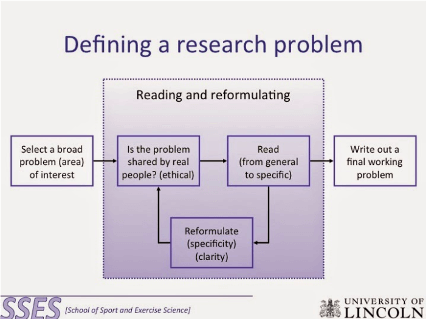

Image credit: http://myfreeschooltanzania.blogspot.com/2014/11/defining-research-problem.html

1. Observe and identify

Businesses today have so much data that it can be difficult to know which problems to address first. Researchers also have business stakeholders who come to them with problems they would like to have explored. A researcher’s job is to sift through these inputs and discover exactly what higher-level trends and key concepts are worth investing in.

This often means asking questions and doing some initial investigation to decide which avenues to pursue. This could mean gathering interdisciplinary perspectives and identifying additional expertise and contextual information.

Sometimes, a small-scale preliminary study might be worth doing to help get a more comprehensive understanding of the business context and needs, and to make sure your research problem addresses the most critical questions.

This could take the form of qualitative research using a few in-depth interviews, an environmental scan, or a review of relevant literature.

The sales manager of a sportswear company has a problem: sales of trail running shoes are down year-on-year and she isn’t sure why. She approaches the company’s research team for input and they begin asking questions within the company and reviewing their knowledge of the wider market.

2. Review the key factors involved

As a marketing researcher, you must work closely with your team of researchers to define and test the influencing factors and the wider context involved in your study. These might include demographic and economic trends or the business environment affecting the question at hand. This is referred to as a relational research problem.

To do this, you have to identify the factors that will affect the research and begin formulating different methods to control them.

You also need to consider the relationships between factors and the degree of control you have over them. For example, you may be able to control the loading speed of your website but you can’t control the fluctuations of the stock market.

Doing this will help you determine whether the findings of your project will produce enough information to be worth the cost.

You need to determine:

- which factors affect the solution to the research proposal.

- which ones can be controlled and used for the purposes of the company, and to what extent.

- the functional relationships between the factors.

- which ones are critical to the solution of the research study.

The research team at the running shoe company is hard at work. They explore the factors involved and the context of why YoY sales are down for trail shoes, including things like what the company’s competitors are doing, what the weather has been like – affecting outdoor exercise – and the relative spend on marketing for the brand from year to year.

The final factor is within the company’s control, although the first two are not. They check the figures and determine marketing spend has a significant impact on the company.
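As a minimal sketch of this review step, the three factors from the running shoe example can be recorded with a flag for whether the company can influence them. The `Factor` class and `controllable_factors` helper are hypothetical names chosen for illustration, not part of any tool mentioned in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Factor:
    name: str
    controllable: bool  # can the company influence this factor directly?


# Factors named in the running shoe example.
factors = [
    Factor("competitor activity", controllable=False),
    Factor("weather", controllable=False),
    Factor("marketing spend", controllable=True),
]


def controllable_factors(candidates):
    """Return the names of the factors the company can act on."""
    return [f.name for f in candidates if f.controllable]


print(controllable_factors(factors))
```

Keeping the list explicit makes it easier to see which factors can become the subject of an actionable research question and which can only be treated as context.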

3. Prioritize

Once you and your research team have a few observations, prioritize them based on their business impact and importance. It may be that you can answer more than one question with a single study, but don’t do it at the risk of losing focus on your overarching research problem.

Questions to ask:

- Who? Who are the people with the problem? Are they end-users, stakeholders, teams within your business? Have you validated the information to see what the scale of the problem is?

- What? What is its nature and what is the supporting evidence?

- Why? What is the business case for solving the problem? How will it help?

- Where? How does the problem manifest and where is it observed?

To help you understand all dimensions, you might want to consider focus groups or preliminary interviews with external (including consumers and existing customers) and internal (salespeople, managers, and other stakeholders) parties to provide what is sometimes much-needed insight into a particular set of questions or problems.

After observing and investigating, the running shoe researchers come up with a few candidate questions, including:

- What is the relationship between US average temperatures and sales of our products year on year?

- At present, how does our customer base rank Competitor X and Competitor Y’s trail running shoe compared to our brand?

- What is the relationship between marketing spend and trail shoe product sales over the last 12 months?

They opt for the final question, because the variables involved are fully within the company’s control, and based on their initial research and stakeholder input, seem the most likely cause of the dive in sales. The research question is specific enough to keep the work on course towards an actionable result, but it allows for a few different avenues to be explored, such as the different budget allocations of offline and online marketing and the kinds of messaging used.
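One simple first look at the chosen question is a Pearson correlation between monthly marketing spend and monthly trail shoe sales. The sketch below assumes twelve months of figures; the numbers are made up purely for illustration, and `pearson_r` is a hypothetical helper written out by hand rather than taken from a specific library.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least two points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of co-deviations and sums of squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Illustrative, invented monthly figures (12 months); not real company data.
marketing_spend = [50, 48, 45, 40, 38, 35, 33, 30, 28, 27, 25, 24]  # thousands of USD
trail_shoe_sales = [900, 880, 850, 800, 760, 720, 700, 650, 640, 620, 600, 590]  # pairs sold

r = pearson_r(marketing_spend, trail_shoe_sales)
print(f"Pearson r = {r:.2f}")
```

A value of r close to 1 or -1 would suggest a strong linear relationship worth investigating further, but a correlation alone does not show that marketing spend caused the change in sales.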

4. Align

Get feedback from the key teams within your business to make sure everyone is aligned and has the same understanding of the research problem and questions, and the actions you hope to take based on the results. Now is also a good time to demonstrate the ROI of your research and lay out its potential benefits to your stakeholders.

Different groups may have different goals and perspectives on the issue. This step is vital for getting the necessary buy-in and pushing the project forward.

The running shoe company researchers now have everything they need to begin. They call a meeting with the sales manager and consult with the product team, marketing team, and C-suite to make sure everyone is aligned and has bought into the direction of the research topic. They identify and agree that the likely course of action will be a rethink of how marketing resources are allocated, and potentially testing out some new channels and messaging strategies.

Can you explore a broad area and is it practical to do so?

A broader research problem or report can be a great way to bring attention to prevalent issues, societal or otherwise, but is often undertaken only by those with the resources to do so.

Take a typical government cybersecurity breach survey, for example. Most of these reports raise awareness of cybercrime, from the day-to-day threats businesses face to what security measures some organizations are taking. What these reports don't do, however, is provide actionable advice - mostly because every organization is different.

The point here is that while some researchers will explore a very complex issue in detail, others will provide only a snapshot to maintain interest and encourage further investigation. The "value" of the data is wholly determined by the recipients of it - and what information you choose to include.

To summarize, it can be practical to undertake a broader research problem, certainly, but it may not be possible to cover everything or provide the detail your audience needs. Likewise, a more systematic investigation of an issue or topic will be more valuable, but you may also find that you cover far less ground.

It's important to think about your research objectives and expected findings before going ahead.

Ensuring your research project is a success

A complex research project can be made significantly easier with clear research objectives, a descriptive research problem, and a central focus, all of which we've outlined in this article.

If you have previous research, even better. Use it as a benchmark.

Remember: what separates a good research paper from an average one is simple. It is valuable, empirical data that explores a prevalent societal or business issue and provides actionable insights.


Scott Smith

Scott Smith, Ph.D. is a contributor to the Qualtrics blog.


45 Research Problem Examples & Inspiration

Chris Drew (PhD)

Dr. Chris Drew is the founder of the Helpful Professor. He holds a PhD in education and has published over 20 articles in scholarly journals. He is the former editor of the Journal of Learning Development in Higher Education.


A research problem is an issue of concern that is the catalyst for your research. It demonstrates why the research needs to take place in the first place.

Generally, you will write your research problem as a clear, concise, and focused statement that identifies an issue or gap in current knowledge that requires investigation.

The problem will likely also guide the direction and purpose of a study. Depending on the problem, you will identify a suitable methodology that will help address the problem and bring solutions to light.

Research Problem Examples

In the following examples, I’ll present some problems worth addressing, and some suggested theoretical frameworks and research methodologies that might fit with the study. Note, however, that these aren’t the only ways to approach the problems. Keep an open mind and consult with your dissertation supervisor!

Psychology Problems

1. Social Media and Self-Esteem: “How does prolonged exposure to social media platforms influence the self-esteem of adolescents?”

- Theoretical Framework: Social Comparison Theory

- Methodology: Longitudinal study tracking adolescents’ social media usage and self-esteem measures over time, combined with qualitative interviews.

2. Sleep and Cognitive Performance: “How do sleep quality and duration affect cognitive performance in adults?”

- Theoretical Framework: Cognitive Psychology

- Methodology: Experimental design with controlled sleep conditions, followed by cognitive tests. Participant sleep patterns can also be monitored using actigraphy.

3. Childhood Trauma and Adult Relationships: “How does unresolved childhood trauma influence attachment styles and relationship dynamics in adulthood?”

- Theoretical Framework : Attachment Theory

- Methodology : Mixed methods, combining quantitative measures of attachment styles with qualitative in-depth interviews exploring past trauma and current relationship dynamics.

4. Mindfulness and Stress Reduction: “How effective is mindfulness meditation in reducing perceived stress and physiological markers of stress in working professionals?”

- Theoretical Framework : Humanist Psychology

- Methodology : Randomized controlled trial comparing a group practicing mindfulness meditation to a control group, measuring both self-reported stress and physiological markers (e.g., cortisol levels).

5. Implicit Bias and Decision Making: “To what extent do implicit biases influence decision-making processes in hiring practices?”

- Theoretical Framework : Cognitive Dissonance Theory

- Methodology : Experimental design using Implicit Association Tests (IAT) to measure implicit biases, followed by simulated hiring tasks to observe decision-making behaviors.

6. Emotional Regulation and Academic Performance: “How does the ability to regulate emotions impact academic performance in college students?”

- Theoretical Framework : Cognitive Theory of Emotion

- Methodology : Quantitative surveys measuring emotional regulation strategies, combined with academic performance metrics (e.g., GPA).

7. Nature Exposure and Mental Well-being: “Does regular exposure to natural environments improve mental well-being and reduce symptoms of anxiety and depression?”

- Theoretical Framework : Biophilia Hypothesis

- Methodology : Longitudinal study comparing mental health measures of individuals with regular nature exposure to those without, possibly using ecological momentary assessment for real-time data collection.

8. Video Games and Cognitive Skills: “How do action video games influence cognitive skills such as attention, spatial reasoning, and problem-solving?”

- Theoretical Framework : Cognitive Load Theory

- Methodology : Experimental design with pre- and post-tests, comparing cognitive skills of participants before and after a period of action video game play.

9. Parenting Styles and Child Resilience: “How do different parenting styles influence the development of resilience in children facing adversities?”

- Theoretical Framework : Baumrind’s Parenting Styles Inventory

- Methodology : Mixed methods, combining quantitative measures of resilience and parenting styles with qualitative interviews exploring children’s experiences and perceptions.

10. Memory and Aging: “How does the aging process impact episodic memory, and what strategies can mitigate age-related memory decline?”

- Theoretical Framework : Information Processing Theory

- Methodology : Cross-sectional study comparing episodic memory performance across different age groups, combined with interventions like memory training or mnemonic strategies to assess potential improvements.

Education Problems

11. Equity and Access : “How do socioeconomic factors influence students’ access to quality education, and what interventions can bridge the gap?”

- Theoretical Framework : Critical Pedagogy

- Methodology : Mixed methods, combining quantitative data on student outcomes with qualitative interviews and focus groups with students, parents, and educators.

12. Digital Divide : “How does the lack of access to technology and the internet affect remote learning outcomes, and how can this divide be addressed?”

- Theoretical Framework : Social Construction of Technology Theory

- Methodology : Survey research to gather data on access to technology, followed by case studies in selected areas.

13. Teacher Efficacy : “What factors contribute to teacher self-efficacy, and how does it impact student achievement?”

- Theoretical Framework : Bandura’s Self-Efficacy Theory

- Methodology : Quantitative surveys to measure teacher self-efficacy, combined with qualitative interviews to explore factors affecting it.

14. Curriculum Relevance : “How can curricula be made more relevant to diverse student populations, incorporating cultural and local contexts?”

- Theoretical Framework : Sociocultural Theory

- Methodology : Content analysis of curricula, combined with focus groups with students and teachers.

15. Special Education : “What are the most effective instructional strategies for students with specific learning disabilities?”

- Theoretical Framework : Social Learning Theory

- Methodology : Experimental design comparing different instructional strategies, with pre- and post-tests to measure student achievement.

16. Dropout Rates : “What factors contribute to high school dropout rates, and what interventions can help retain students?”

- Methodology : Longitudinal study tracking students over time, combined with interviews with dropouts.

17. Bilingual Education : “How does bilingual education impact cognitive development and academic achievement?”

- Methodology : Comparative study of students in bilingual vs. monolingual programs, using standardized tests and qualitative interviews.

18. Classroom Management: “What reward strategies are most effective in managing diverse classrooms and promoting a positive learning environment?”

- Theoretical Framework : Behaviorism (e.g., Skinner’s Operant Conditioning)

- Methodology : Observational research in classrooms, combined with teacher interviews.

19. Standardized Testing : “How do standardized tests affect student motivation, learning, and curriculum design?”

- Theoretical Framework : Critical Theory

- Methodology : Quantitative analysis of test scores and student outcomes, combined with qualitative interviews with educators and students.

20. STEM Education : “What methods can be employed to increase interest and proficiency in STEM (Science, Technology, Engineering, and Mathematics) fields among underrepresented student groups?”

- Theoretical Framework : Constructivist Learning Theory

- Methodology : Experimental design comparing different instructional methods, with pre- and post-tests.

21. Social-Emotional Learning : “How can social-emotional learning be effectively integrated into the curriculum, and what are its impacts on student well-being and academic outcomes?”

- Theoretical Framework : Goleman’s Emotional Intelligence Theory

- Methodology : Mixed methods, combining quantitative measures of student well-being with qualitative interviews.

22. Parental Involvement : “How does parental involvement influence student achievement, and what strategies can schools use to increase it?”

- Theoretical Framework : Reggio Emilia’s Model (Community Engagement Focus)

- Methodology : Survey research with parents and teachers, combined with case studies in selected schools.

23. Early Childhood Education : “What are the long-term impacts of quality early childhood education on academic and life outcomes?”

- Theoretical Framework : Erikson’s Stages of Psychosocial Development

- Methodology : Longitudinal study comparing students with and without early childhood education, combined with observational research.

24. Teacher Training and Professional Development : “How can teacher training programs be improved to address the evolving needs of the 21st-century classroom?”

- Theoretical Framework : Adult Learning Theory (Andragogy)

- Methodology : Pre- and post-assessments of teacher competencies, combined with focus groups.

25. Educational Technology : “How can technology be effectively integrated into the classroom to enhance learning, and what are the potential drawbacks or challenges?”

- Theoretical Framework : Technological Pedagogical Content Knowledge (TPACK)

- Methodology : Experimental design comparing classrooms with and without specific technologies, combined with teacher and student interviews.

Sociology Problems

26. Urbanization and Social Ties: “How does rapid urbanization impact the strength and nature of social ties in communities?”

- Theoretical Framework : Structural Functionalism

- Methodology : Mixed methods, combining quantitative surveys on social ties with qualitative interviews in urbanizing areas.

27. Gender Roles in Modern Families: “How have traditional gender roles evolved in families with dual-income households?”

- Theoretical Framework : Gender Schema Theory

- Methodology : Qualitative interviews with dual-income families, combined with historical data analysis.

28. Social Media and Collective Behavior: “How does social media influence collective behaviors and the formation of social movements?”

- Theoretical Framework : Emergent Norm Theory

- Methodology : Content analysis of social media platforms, combined with quantitative surveys on participation in social movements.

29. Education and Social Mobility: “To what extent does access to quality education influence social mobility in socioeconomically diverse settings?”

- Methodology : Longitudinal study tracking educational access and subsequent socioeconomic status, combined with qualitative interviews.

30. Religion and Social Cohesion: “How do religious beliefs and practices contribute to social cohesion in multicultural societies?”

- Methodology : Quantitative surveys on religious beliefs and perceptions of social cohesion, combined with ethnographic studies.

31. Consumer Culture and Identity Formation: “How does consumer culture influence individual identity formation and personal values?”

- Theoretical Framework : Social Identity Theory

- Methodology : Mixed methods, combining content analysis of advertising with qualitative interviews on identity and values.

32. Migration and Cultural Assimilation: “How do migrants negotiate cultural assimilation and preservation of their original cultural identities in their host countries?”

- Theoretical Framework : Post-Structuralism

- Methodology : Qualitative interviews with migrants, combined with observational studies in multicultural communities.

33. Social Networks and Mental Health: “How do social networks, both online and offline, impact mental health and well-being?”

- Theoretical Framework : Social Network Theory

- Methodology : Quantitative surveys assessing social network characteristics and mental health metrics, combined with qualitative interviews.

34. Crime, Deviance, and Social Control: “How do societal norms and values shape definitions of crime and deviance, and how are these definitions enforced?”

- Theoretical Framework : Labeling Theory

- Methodology : Content analysis of legal documents and media, combined with ethnographic studies in diverse communities.

35. Technology and Social Interaction: “How has the proliferation of digital technology influenced face-to-face social interactions and community building?”

- Theoretical Framework : Technological Determinism

- Methodology : Mixed methods, combining quantitative surveys on technology use with qualitative observations of social interactions in various settings.

Nursing Problems

36. Patient Communication and Recovery: “How does effective nurse-patient communication influence patient recovery rates and overall satisfaction with care?”

- Methodology : Quantitative surveys assessing patient satisfaction and recovery metrics, combined with observational studies on nurse-patient interactions.

37. Stress Management in Nursing: “What are the primary sources of occupational stress for nurses, and how can they be effectively managed to prevent burnout?”

- Methodology : Mixed methods, combining quantitative measures of stress and burnout with qualitative interviews exploring personal experiences and coping mechanisms.

38. Hand Hygiene Compliance: “How effective are different interventions in improving hand hygiene compliance among nursing staff, and what are the barriers to consistent hand hygiene?”

- Methodology : Experimental design comparing hand hygiene rates before and after specific interventions, combined with focus groups to understand barriers.

39. Nurse-Patient Ratios and Patient Outcomes: “How do nurse-patient ratios impact patient outcomes, including recovery rates, complications, and hospital readmissions?”

- Methodology : Quantitative study analyzing patient outcomes in relation to staffing levels, possibly using retrospective chart reviews.

40. Continuing Education and Clinical Competence: “How does regular continuing education influence clinical competence and confidence among nurses?”

- Methodology : Longitudinal study tracking nurses’ clinical skills and confidence over time as they engage in continuing education, combined with patient outcome measures to assess potential impacts on care quality.

Communication Studies Problems

41. Media Representation and Public Perception: “How does media representation of minority groups influence public perceptions and biases?”

- Theoretical Framework : Cultivation Theory

- Methodology : Content analysis of media representations combined with quantitative surveys assessing public perceptions and attitudes.

42. Digital Communication and Relationship Building: “How has the rise of digital communication platforms impacted the way individuals build and maintain personal relationships?”

- Theoretical Framework : Social Penetration Theory

- Methodology : Mixed methods, combining quantitative surveys on digital communication habits with qualitative interviews exploring personal relationship dynamics.

43. Crisis Communication Effectiveness: “What strategies are most effective in managing public relations during organizational crises, and how do they influence public trust?”

- Theoretical Framework : Situational Crisis Communication Theory (SCCT)

- Methodology : Case study analysis of past organizational crises, assessing communication strategies used and subsequent public trust metrics.

44. Nonverbal Cues in Virtual Communication: “How do nonverbal cues, such as facial expressions and gestures, influence message interpretation in virtual communication platforms?”

- Theoretical Framework : Social Semiotics

- Methodology : Experimental design using video conferencing tools, analyzing participants’ interpretations of messages with varying nonverbal cues.

45. Influence of Social Media on Political Engagement: “How does exposure to political content on social media platforms influence individuals’ political engagement and activism?”

- Theoretical Framework : Uses and Gratifications Theory

- Methodology : Quantitative surveys assessing social media habits and political engagement levels, combined with content analysis of political posts on popular platforms.

Before you Go: Tips and Tricks for Writing a Research Problem

This is an incredibly stressful time for research students. The research problem is going to lock you into a specific line of inquiry for the rest of your studies.

So, here’s what I tend to suggest to my students:

- Start with something you find intellectually stimulating – Too many students choose projects because they think the topic hasn’t been studied or they’ve found a research gap. Don’t over-estimate the importance of finding a research gap. There are gaps in every line of inquiry. For now, just find a topic you think you can really sink your teeth into and will enjoy learning about.

- Take 5 ideas to your supervisor – Approach your research supervisor, professor, lecturer, TA, or course leader with 5 research problem ideas and run each by them. The supervisor will have valuable insights that you didn’t consider that will help you narrow down and refine your problem even more.

- Trust your supervisor – The supervisor-student relationship is often very strained and stressful. While of course this is your project, your supervisor knows the internal politics and conventions of academic research. Their depth of knowledge about how to navigate academia and get you out the other end with your degree is invaluable. Don’t underestimate their advice.

I’ve got a full article on all my tips and tricks for doing research projects right here – I recommend reading it:

- 9 Tips on How to Choose a Dissertation Topic



J Korean Med Sci, v.37(16); 2022 Apr 25

A Practical Guide to Writing Quantitative and Qualitative Research Questions and Hypotheses in Scholarly Articles

Edward Barroga

1 Department of General Education, Graduate School of Nursing Science, St. Luke’s International University, Tokyo, Japan.

Glafera Janet Matanguihan

2 Department of Biological Sciences, Messiah University, Mechanicsburg, PA, USA.

The development of research questions and the subsequent hypotheses are prerequisites to defining the main research purpose and specific objectives of a study. Consequently, these objectives determine the study design and research outcome. The development of research questions is a process based on knowledge of current trends, cutting-edge studies, and technological advances in the research field. Excellent research questions are focused and require a comprehensive literature search and in-depth understanding of the problem being investigated. Initially, research questions may be written as descriptive questions which could be developed into inferential questions. These questions must be specific and concise to provide a clear foundation for developing hypotheses. Hypotheses are more formal predictions about the research outcomes. These specify the possible results that may or may not be expected regarding the relationship between groups. Thus, research questions and hypotheses clarify the main purpose and specific objectives of the study, which in turn dictate the design of the study, its direction, and outcome. Studies developed from good research questions and hypotheses will have trustworthy outcomes with wide-ranging social and health implications.

INTRODUCTION

Scientific research is usually initiated by posing evidence-based research questions which are then explicitly restated as hypotheses. 1 , 2 The hypotheses provide directions to guide the study, solutions, explanations, and expected results. 3 , 4 Both research questions and hypotheses are essentially formulated based on conventional theories and real-world processes, which allow the inception of novel studies and the ethical testing of ideas. 5 , 6

It is crucial to have knowledge of both quantitative and qualitative research 2 as both types of research involve writing research questions and hypotheses. 7 However, these crucial elements of research are sometimes overlooked; if not overlooked, they are framed without the forethought and meticulous attention they need. Planning and careful consideration are needed when developing quantitative or qualitative research, particularly when conceptualizing research questions and hypotheses. 4

There is a continuing need to support researchers in the creation of innovative research questions and hypotheses, as well as for journal articles that carefully review these elements. 1 When research questions and hypotheses are not carefully thought out, unethical studies and poor outcomes usually ensue. Carefully formulated research questions and hypotheses define well-founded objectives, which in turn determine the appropriate design, course, and outcome of the study. This article therefore aims to discuss in detail the various aspects of crafting research questions and hypotheses, with the goal of guiding researchers as they develop their own. Examples from the authors and peer-reviewed scientific articles in the healthcare field are provided to illustrate key points.

DEFINITIONS AND RELATIONSHIP OF RESEARCH QUESTIONS AND HYPOTHESES

A research question is what a study aims to answer after data analysis and interpretation. The answer is written at length in the discussion section of the paper. Thus, the research question gives a preview of the different parts and variables of the study meant to address the problem posed in the research question. 1 An excellent research question clarifies the research writing while facilitating understanding of the research topic, objective, scope, and limitations of the study. 5

On the other hand, a research hypothesis is an educated statement of an expected outcome. This statement is based on background research and current knowledge. 8 , 9 The research hypothesis makes a specific prediction about a new phenomenon 10 or a formal statement on the expected relationship between an independent variable and a dependent variable. 3 , 11 It provides a tentative answer to the research question to be tested or explored. 4

Hypotheses employ reasoning to predict a theory-based outcome. 10 These can also be developed from theories by focusing on components of theories that have not yet been observed. 10 The validity of hypotheses is often based on the testability of the prediction made in a reproducible experiment. 8

Conversely, hypotheses can also be rephrased as research questions. Several hypotheses based on existing theories and knowledge may be needed to answer a research question. Developing ethical research questions and hypotheses creates a research design that has logical relationships among variables. These relationships serve as a solid foundation for the conduct of the study. 4 , 11 Haphazardly constructed research questions can result in poorly formulated hypotheses and improper study designs, leading to unreliable results. Thus, the formulations of relevant research questions and verifiable hypotheses are crucial when beginning research. 12

CHARACTERISTICS OF GOOD RESEARCH QUESTIONS AND HYPOTHESES

Excellent research questions are specific and focused. These integrate collective data and observations to confirm or refute the subsequent hypotheses. Well-constructed hypotheses are based on previous reports and verify the research context. These are realistic, in-depth, sufficiently complex, and reproducible. More importantly, these hypotheses can be addressed and tested. 13

There are several characteristics of well-developed hypotheses. Good hypotheses 1) are empirically testable 7 , 10 , 11 , 13 ; 2) are backed by preliminary evidence 9 ; 3) are testable by ethical research 7 , 9 ; 4) are based on original ideas 9 ; 5) have evidence-based logical reasoning 10 ; and 6) can be predicted. 11 Good hypotheses can infer ethical and positive implications, indicating the presence of a relationship or effect relevant to the research theme. 7 , 11 These are initially developed from a general theory and branch into specific hypotheses by deductive reasoning. In the absence of a theory on which to base the hypotheses, inductive reasoning based on specific observations or findings forms more general hypotheses. 10

TYPES OF RESEARCH QUESTIONS AND HYPOTHESES

Research questions and hypotheses are developed according to the type of research, which can be broadly classified into quantitative and qualitative research. We provide a summary of the types of research questions and hypotheses under quantitative and qualitative research categories in Table 1 .

Table 1. Types of research questions and hypotheses in quantitative and qualitative research

| Quantitative research questions | Quantitative research hypotheses |
|---|---|
| Descriptive research questions | Simple hypothesis |
| Comparative research questions | Complex hypothesis |
| Relationship research questions | Directional hypothesis |
| | Non-directional hypothesis |
| | Associative hypothesis |
| | Causal hypothesis |
| | Null hypothesis |
| | Alternative hypothesis |
| | Working hypothesis |
| | Statistical hypothesis |
| | Logical hypothesis |
| | Hypothesis-testing |
| **Qualitative research questions** | **Qualitative research hypotheses** |
| Contextual research questions | Hypothesis-generating |
| Descriptive research questions | |
| Evaluation research questions | |
| Explanatory research questions | |
| Exploratory research questions | |
| Generative research questions | |
| Ideological research questions | |
| Ethnographic research questions | |
| Phenomenological research questions | |
| Grounded theory questions | |
| Qualitative case study questions | |

Research questions in quantitative research

In quantitative research, research questions inquire about the relationships among variables being investigated and are usually framed at the start of the study. These are precise and typically linked to the subject population, dependent and independent variables, and research design. 1 Research questions may also attempt to describe the behavior of a population in relation to one or more variables, or describe the characteristics of variables to be measured ( descriptive research questions ). 1 , 5 , 14 These questions may also aim to discover differences between groups within the context of an outcome variable ( comparative research questions ), 1 , 5 , 14 or elucidate trends and interactions among variables ( relationship research questions ). 1 , 5 We provide examples of descriptive, comparative, and relationship research questions in quantitative research in Table 2 .

Table 2. Quantitative research questions

| Quantitative research question | Description | Example |
|---|---|---|
| Descriptive research question | Measures responses of subjects to variables; presents variables to measure, analyze, or assess | What is the proportion of resident doctors in the hospital who have mastered ultrasonography (response of subjects to a variable) as a diagnostic technique in their clinical training? |
| Comparative research question | Clarifies difference between one group with outcome variable and another group without outcome variable | Is there a difference in the reduction of lung metastasis in osteosarcoma patients who received the vitamin D adjunctive therapy (group with outcome variable) compared with osteosarcoma patients who did not receive the vitamin D adjunctive therapy (group without outcome variable)? |
| | Compares the effects of variables | How does the vitamin D analogue 22-Oxacalcitriol (variable 1) mimic the antiproliferative activity of 1,25-Dihydroxyvitamin D (variable 2) in osteosarcoma cells? |
| Relationship research question | Defines trends, association, relationships, or interactions between dependent variable and independent variable | Is there a relationship between the number of medical student suicide (dependent variable) and the level of medical student stress (independent variable) in Japan during the first wave of the COVID-19 pandemic? |
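The three quantitative question types map onto different summary statistics. The sketch below is a minimal illustration in Python, not a method taken from the article: a proportion for a descriptive question, a difference in group means for a comparative question, and a Pearson correlation coefficient for a relationship question. The function names and all of the toy numbers are assumptions invented for this example.

```python
from math import sqrt
from statistics import mean


def proportion(flags):
    """Descriptive question: share of subjects for whom the flag is True."""
    return sum(flags) / len(flags)


def mean_difference(group_a, group_b):
    """Comparative question: difference in mean outcome between two groups."""
    return mean(group_a) - mean(group_b)


def pearson_r(x, y):
    """Relationship question: linear association between two variables."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


# Hypothetical toy data, invented purely for illustration.
mastered = [True, False, True, True]   # one flag per resident doctor
treated = [2.0, 3.0, 4.0]              # outcome in the group that received therapy
untreated = [1.0, 2.0, 3.0]            # outcome in the group that did not
stress = [1.0, 2.0, 3.0, 4.0]          # independent variable
outcome = [2.0, 4.0, 6.0, 8.0]         # dependent variable

print(proportion(mastered))                 # 0.75
print(mean_difference(treated, untreated))  # 1.0
print(pearson_r(stress, outcome))           # close to 1 for perfectly linear data
```

A real study would add uncertainty estimates (for example, confidence intervals) to each of these point estimates; they are left out here to keep the mapping between question type and statistic visible.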

Hypotheses in quantitative research

In quantitative research, hypotheses predict the expected relationships among variables. 15 Relationships among variables that can be predicted include 1) between a single dependent variable and a single independent variable ( simple hypothesis ) or 2) between two or more independent and dependent variables ( complex hypothesis ). 4 , 11 Hypotheses may also specify the expected direction to be followed and imply an intellectual commitment to a particular outcome ( directional hypothesis ). 4 On the other hand, hypotheses may not predict the exact direction and are used in the absence of a theory, or when findings contradict previous studies ( non-directional hypothesis ). 4 In addition, hypotheses can 1) define interdependency between variables ( associative hypothesis ), 4 2) propose an effect on the dependent variable from manipulation of the independent variable ( causal hypothesis ), 4 3) state that there is no relationship or difference between two variables ( null hypothesis ), 4 , 11 , 15 4) replace the working hypothesis if rejected ( alternative hypothesis ), 15 5) explain the relationship of phenomena to possibly generate a theory ( working hypothesis ), 11 6) involve quantifiable variables that can be tested statistically ( statistical hypothesis ), 11 or 7) express a relationship whose interlinks can be verified logically ( logical hypothesis ). 11 We provide examples of simple, complex, directional, non-directional, associative, causal, null, alternative, working, statistical, and logical hypotheses in quantitative research, as well as the definition of quantitative hypothesis-testing research in Table 3 .
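As a reading aid, the null, non-directional, and directional hypotheses described above can be written in the conventional notation for comparing two population means. The symbols $\mu_1$ and $\mu_2$ (the mean outcome in group 1 and group 2) are assumptions introduced here for illustration; the article itself does not use this notation.

```latex
\begin{align*}
H_0 &: \mu_1 = \mu_2 && \text{(null hypothesis: no difference)} \\
H_1 &: \mu_1 \neq \mu_2 && \text{(non-directional hypothesis)} \\
H_1 &: \mu_1 > \mu_2 \ \text{ or } \ \mu_1 < \mu_2 && \text{(directional hypothesis)}
\end{align*}
```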

| Quantitative research hypotheses | |

|---|---|

| Simple hypothesis | |

| - Predicts relationship between single dependent variable and single independent variable | |

| If the dose of the new medication (single independent variable) is high, blood pressure (single dependent variable) is lowered. | |

| Complex hypothesis | |

| - Foretells relationship between two or more independent and dependent variables | |

| The higher the use of anticancer drugs, radiation therapy, and adjunctive agents (3 independent variables), the higher would be the survival rate (1 dependent variable). | |

| Directional hypothesis | |

| - Identifies study direction based on theory towards particular outcome to clarify relationship between variables | |

| Privately funded research projects will have a larger international scope (study direction) than publicly funded research projects. | |

| Non-directional hypothesis | |

| - Nature of relationship between two variables or exact study direction is not identified | |

| - Does not involve a theory | |

| Women and men are different in terms of helpfulness. (Exact study direction is not identified) | |

| Associative hypothesis | |

| - Describes variable interdependency | |

| - Change in one variable causes change in another variable | |

| A larger number of people vaccinated against COVID-19 in the region (change in independent variable) will reduce the region’s incidence of COVID-19 infection (change in dependent variable). | |

| Causal hypothesis | |

| - An effect on dependent variable is predicted from manipulation of independent variable | |

| A change into a high-fiber diet (independent variable) will reduce the blood sugar level (dependent variable) of the patient. | |

| Null hypothesis | |

| - A negative statement indicating no relationship or difference between 2 variables | |

| There is no significant difference in the severity of pulmonary metastases between the new drug (variable 1) and the current drug (variable 2). | |

| Alternative hypothesis | |

| - Following a null hypothesis, an alternative hypothesis predicts a relationship between 2 study variables | |

| The new drug (variable 1) is better on average in reducing the level of pain from pulmonary metastasis than the current drug (variable 2). | |

| Working hypothesis | |

| - A hypothesis that is initially accepted for further research to produce a feasible theory | |

| Dairy cows fed with concentrates of different formulations will produce different amounts of milk. | |

| Statistical hypothesis | |

| - Assumption about the value of population parameter or relationship among several population characteristics | |

| - Validity tested by a statistical experiment or analysis | |

| The mean recovery rate from COVID-19 infection (value of population parameter) is not significantly different between population 1 and population 2. | |

| There is a positive correlation between the level of stress at the workplace and the number of suicides (population characteristics) among working people in Japan. | |

| Logical hypothesis | |

| - Offers or proposes an explanation with limited or no extensive evidence | |

| If healthcare workers provide more educational programs about contraception methods, the number of adolescent pregnancies will decrease. | |

| Hypothesis-testing (Quantitative hypothesis-testing research) | |

| - Quantitative research uses deductive reasoning. | |

| - This involves the formation of a hypothesis, collection of data in the investigation of the problem, analysis and use of the data from the investigation, and drawing of conclusions to validate or nullify the hypotheses. | |
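
The null and alternative hypotheses described in the table above can be made concrete with a small test. The sketch below is one possible illustration, not a method taken from the cited studies: it runs an exact two-sided permutation test on the difference in group means. The function name `exact_permutation_p_value` and the two sets of scores are hypothetical and were made up purely for illustration.

```python
from itertools import combinations
from statistics import mean


def exact_permutation_p_value(group_a, group_b):
    """Two-sided exact permutation test for a difference in means.

    Null hypothesis: group labels are unrelated to the measured values.
    Returns the share of all possible relabelings whose absolute mean
    difference is at least as large as the observed one.
    """
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    observed = abs(mean(group_a) - mean(group_b))
    indices = range(len(pooled))
    total = 0
    extreme = 0
    for chosen in combinations(indices, n_a):
        chosen_set = set(chosen)
        relabeled_a = [pooled[i] for i in chosen]
        relabeled_b = [pooled[i] for i in indices if i not in chosen_set]
        total += 1
        # Small tolerance guards against floating-point rounding.
        if abs(mean(relabeled_a) - mean(relabeled_b)) >= observed - 1e-12:
            extreme += 1
    return extreme / total


# Hypothetical pain scores, invented for illustration only.
new_drug = [1, 2, 3, 4, 5]
current_drug = [10, 11, 12, 13, 14]

p_value = exact_permutation_p_value(new_drug, current_drug)
print(f"p-value: {p_value:.4f}")
```

A small p-value would count as evidence against the null hypothesis of no difference and in favor of the alternative hypothesis. Enumerating every relabeling is only practical for small groups; larger samples would normally call for random sampling of relabelings or a standard parametric test.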

Research questions in qualitative research

Unlike research questions in quantitative research, research questions in qualitative research are usually continuously reviewed and reformulated. Central questions and their associated subquestions are stated more often than hypotheses. 15 The central question broadly explores a complex set of factors surrounding the central phenomenon, aiming to present the varied perspectives of participants. 15

There are varied goals for which qualitative research questions are developed. These questions can function in several ways, such as to 1) identify and describe existing conditions (contextual research questions); 2) describe a phenomenon (descriptive research questions); 3) assess the effectiveness of existing methods, protocols, theories, or procedures (evaluation research questions); 4) examine a phenomenon or analyze the reasons or relationships between subjects or phenomena (explanatory research questions); or 5) focus on unknown aspects of a particular topic (exploratory research questions). 5 In addition, some qualitative research questions provide new ideas for the development of theories and actions (generative research questions) or advance specific ideologies of a position (ideological research questions). 1 Other qualitative research questions may build on a body of existing literature and become working guidelines (ethnographic research questions). Research questions may also be broadly stated without specific reference to the existing literature or a typology of questions (phenomenological research questions), may be directed towards generating a theory of some process (grounded theory questions), or may address a description of the case and the emerging themes (qualitative case study questions). 15 We provide examples of contextual, descriptive, evaluation, explanatory, exploratory, generative, ideological, ethnographic, phenomenological, grounded theory, and qualitative case study research questions in qualitative research in Table 4, and the definition of qualitative hypothesis-generating research in Table 5.

| Qualitative research questions | Characteristics | Example |
|---|---|---|
| Contextual research question | Asks about the nature of what already exists. Clarifies how individuals or groups function, to better understand the natural context of real-world problems. | What are the experiences of nurses working night shifts in healthcare during the COVID-19 pandemic? (natural context of real-world problems) |
| Descriptive research question | Aims to describe a phenomenon. | What are the different forms of disrespect and abuse (phenomenon) experienced by Tanzanian women when giving birth in healthcare facilities? |
| Evaluation research question | Examines the effectiveness of existing practice or accepted frameworks. | How effective are decision aids (effectiveness of existing practice) in helping decide whether to give birth at home or in a healthcare facility? |
| Explanatory research question | Clarifies a previously studied phenomenon and explains why it occurs. | Why is there an increase in teenage pregnancy (phenomenon) in Tanzania? |
| Exploratory research question | Explores areas that have not been fully investigated to gain a deeper understanding of the research problem. | What factors affect the mental health of medical students (areas that have not yet been fully investigated) during the COVID-19 pandemic? |
| Generative research question | Develops an in-depth understanding of people’s behavior by asking ‘how would’ or ‘what if’ to identify problems and find solutions. | How would the extensive research experience of the behavior of new staff impact the success of the novel drug initiative? |
| Ideological research question | Aims to advance specific ideas or ideologies of a position. | Are Japanese nurses who volunteer in remote African hospitals able to promote humanized care of patients (specific ideas or ideologies) in the areas of safe patient environment, respect of patient privacy, and provision of accurate information related to health and care? |
| Ethnographic research question | Clarifies people’s nature, activities, their interactions, and the outcomes of their actions in specific settings. | What are the demographic characteristics, rehabilitative treatments, community interactions, and disease outcomes (nature, activities, their interactions, and the outcomes) of people in China who are suffering from pneumoconiosis? |
| Phenomenological research question | Seeks to know more about the phenomena that have impacted an individual. | What are the lived experiences of parents who have been living with and caring for children with a diagnosis of autism? (phenomena that have impacted an individual) |
| Grounded theory question | Focuses on social processes, asking about what happens and how people interact, or uncovering social relationships and behaviors of groups. | What are the problems that pregnant adolescents face in terms of social and cultural norms (social processes), and how can these be addressed? |
| Qualitative case study question | Assesses a phenomenon using different sources of data to answer “why” and “how” questions. Considers how the phenomenon is influenced by its contextual situation. | How does quitting work and assuming the role of a full-time mother (phenomenon assessed) change the lives of women in Japan? |

| Qualitative research hypotheses | Characteristics |
|---|---|
| Hypothesis-generating (Qualitative hypothesis-generating research) | Qualitative research uses inductive reasoning. This involves data collection from study participants or the literature regarding a phenomenon of interest, using the collected data to develop a formal hypothesis, and using the formal hypothesis as a framework for testing the hypothesis. Qualitative exploratory studies explore areas in greater depth, clarifying subjective experience and allowing formulation of a formal hypothesis potentially testable in a future quantitative approach. |

Qualitative studies usually pose at least one central research question and several subquestions starting with How or What. These research questions use exploratory verbs such as explore or describe. They also focus on one central phenomenon of interest, and may mention the participants and research site. 15

Hypotheses in qualitative research

Hypotheses in qualitative research are stated in the form of a clear statement concerning the problem to be investigated. Unlike in quantitative research where hypotheses are usually developed to be tested, qualitative research can lead to both hypothesis-testing and hypothesis-generating outcomes. 2 When studies require both quantitative and qualitative research questions, this suggests an integrative process between both research methods wherein a single mixed-methods research question can be developed. 1

FRAMEWORKS FOR DEVELOPING RESEARCH QUESTIONS AND HYPOTHESES

Research questions followed by hypotheses should be developed before the start of the study. 1 , 12 , 14 It is crucial to develop feasible research questions on a topic that is interesting to both the researcher and the scientific community. This can be achieved by a meticulous review of previous and current studies to establish a novel topic. Specific areas are subsequently focused on to generate ethical research questions. The relevance of the research questions is evaluated in terms of clarity of the resulting data, specificity of the methodology, objectivity of the outcome, depth of the research, and impact of the study. 1 , 5 These aspects constitute the FINER criteria (i.e., Feasible, Interesting, Novel, Ethical, and Relevant). 1 Clarity and effectiveness are achieved if research questions meet the FINER criteria. In addition to the FINER criteria, Ratan et al. described focus, complexity, novelty, feasibility, and measurability for evaluating the effectiveness of research questions. 14

The PICOT and PEO frameworks are also used when developing research questions. 1 These frameworks address the following elements. PICOT: P-population/patients/problem, I-intervention or indicator being studied, C-comparison group, O-outcome of interest, and T-timeframe of the study. PEO: P-population being studied, E-exposure to preexisting conditions, and O-outcome of interest. 1 Research questions are also considered good if they meet the “FINERMAPS” framework: Feasible, Interesting, Novel, Ethical, Relevant, Manageable, Appropriate, Potential value/publishable, and Systematic. 14
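
One way to see how the PICOT elements fit together is to treat them as fields of a small record and assemble a draft question from them. The following is a minimal sketch under stated assumptions: the class name `PicotQuestion`, its sentence template, and the example values (loosely borrowed from the moxibustion study discussed in this article) are hypothetical and are not part of the PICOT framework itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PicotQuestion:
    """Hypothetical container for the five PICOT elements."""

    population: str    # P: population/patients/problem
    intervention: str  # I: intervention or indicator being studied
    comparison: str    # C: comparison group
    outcome: str       # O: outcome of interest
    timeframe: str     # T: timeframe of the study

    def to_question(self) -> str:
        # Illustrative template only; real questions need careful wording.
        return (
            f"In {self.population}, does {self.intervention}, "
            f"compared with {self.comparison}, affect {self.outcome} "
            f"{self.timeframe}?"
        )


example = PicotQuestion(
    population="pregnant women with breech presentation",
    intervention="smoke moxibustion",
    comparison="smokeless moxibustion",
    outcome="the rate of cephalic presentation",
    timeframe="at birth",
)
question = example.to_question()
print(question)
```

Making every element a required field means a draft question cannot be built while a comparison group or timeframe is still missing, which mirrors the purpose of the framework as a completeness check.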

As we indicated earlier, research questions and hypotheses that are not carefully formulated can result in unethical studies or poor outcomes. To illustrate this, we provide examples of ambiguous research questions and hypotheses that result in unclear and weak research objectives in quantitative research (Table 6) 16 and qualitative research (Table 7) 17, and show how to transform these ambiguous research questions and hypotheses into clear and good statements.

| Variables | Unclear and weak statement (Statement 1) | Clear and good statement (Statement 2) | Points to avoid |
|---|---|---|---|
| Research question | Which is more effective between smoke moxibustion and smokeless moxibustion? | “Moreover, regarding smoke moxibustion versus smokeless moxibustion, it remains unclear which is more effective, safe, and acceptable to pregnant women, and whether there is any difference in the amount of heat generated.” | 1) Vague and unfocused questions; 2) Closed questions simply answerable by yes or no; 3) Questions requiring a simple choice |
| Hypothesis | The smoke moxibustion group will have higher cephalic presentation. | “Hypothesis 1. The smoke moxibustion stick group (SM group) and smokeless moxibustion stick group (SLM group) will have higher rates of cephalic presentation after treatment than the control group. Hypothesis 2. The SM group and SLM group will have higher rates of cephalic presentation at birth than the control group. Hypothesis 3. There will be no significant differences in the well-being of the mother and child among the three groups in terms of the following outcomes: premature birth, premature rupture of membranes (PROM) at < 37 weeks, Apgar score < 7 at 5 min, umbilical cord blood pH < 7.1, admission to neonatal intensive care unit (NICU), and intrauterine fetal death.” | 1) Unverifiable hypotheses; 2) Incompletely stated groups of comparison; 3) Insufficiently described variables or outcomes |
| Research objective | To determine which is more effective between smoke moxibustion and smokeless moxibustion. | “The specific aims of this pilot study were (a) to compare the effects of smoke moxibustion and smokeless moxibustion treatments with the control group as a possible supplement to ECV for converting breech presentation to cephalic presentation and increasing adherence to the newly obtained cephalic position, and (b) to assess the effects of these treatments on the well-being of the mother and child.” | 1) Poor understanding of the research question and hypotheses; 2) Insufficient description of population, variables, or study outcomes |

a These statements were composed for comparison and illustrative purposes only.

b These statements are direct quotes from Higashihara and Horiuchi. 16

| Variables | Unclear and weak statement (Statement 1) | Clear and good statement (Statement 2) | Points to avoid |
|---|---|---|---|
| Research question | Does disrespect and abuse (D&A) occur in childbirth in Tanzania? | How does disrespect and abuse (D&A) occur and what are the types of physical and psychological abuses observed in midwives’ actual care during facility-based childbirth in urban Tanzania? | 1) Ambiguous or oversimplistic questions; 2) Questions unverifiable by data collection and analysis |
| Hypothesis | Disrespect and abuse (D&A) occur in childbirth in Tanzania. | Hypothesis 1: Several types of physical and psychological abuse by midwives in actual care occur during facility-based childbirth in urban Tanzania. | 1) Statements simply expressing facts |